ComfyUI

Node-based Stable Diffusion interface that breaks image generation into visible, rearrangeable workflow nodes. The r/StableDiffusion community (750,000+ members) treats ComfyUI as the standard for advanced AI art workflows, praising its flexibility and performance while noting its steep learning curve for beginners.

Key Features:

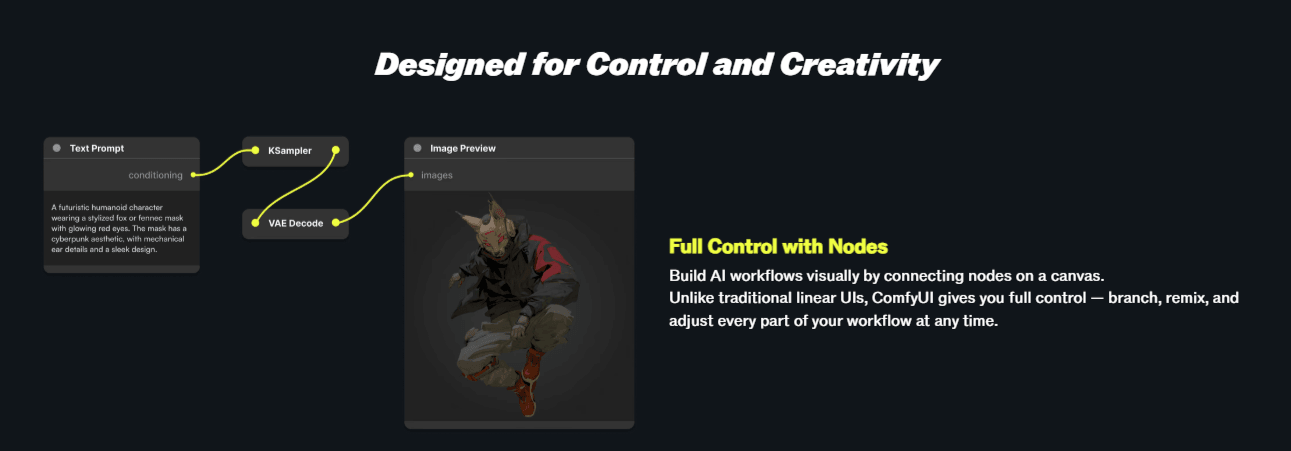

- ✓ Node-based workflow editor: every generation step is visible and editable

- ✓ Supports the major model families (SDXL, FLUX, SD 1.5) along with ControlNet and AnimateDiff workflows

- ✓ ComfyUI Manager for one-click custom node installation

- ✓ JSON workflow export and import for sharing exact setups

- ✓ Faster than AUTOMATIC1111 on the same tasks, per community benchmarks
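To make the JSON workflow point concrete, here is a minimal Python sketch of what a shareable workflow can look like and how it might be sent to a locally running ComfyUI server. It assumes the API-format JSON (node id mapped to `class_type` and `inputs`), which is laid out differently from the workflow file the editor saves by default. The checkpoint filename, prompts, and the helper names `with_seed` and `queue_prompt` are illustrative assumptions, and the server address is the usual local default rather than anything the article specifies.

```python
import copy
import json
import urllib.request

# Hypothetical text-to-image graph in ComfyUI's API-format JSON.
# Each key is a node id; a link is [source_node_id, output_index].
WORKFLOW = {
    "1": {"class_type": "CheckpointLoaderSimple",
          "inputs": {"ckpt_name": "example.safetensors"}},  # placeholder filename
    "2": {"class_type": "CLIPTextEncode",
          "inputs": {"text": "a lighthouse at dusk", "clip": ["1", 1]}},
    "3": {"class_type": "CLIPTextEncode",
          "inputs": {"text": "blurry, low quality", "clip": ["1", 1]}},
    "4": {"class_type": "EmptyLatentImage",
          "inputs": {"width": 512, "height": 512, "batch_size": 1}},
    "5": {"class_type": "KSampler",
          "inputs": {"seed": 0, "steps": 20, "cfg": 7.0,
                     "sampler_name": "euler", "scheduler": "normal",
                     "denoise": 1.0, "model": ["1", 0],
                     "positive": ["2", 0], "negative": ["3", 0],
                     "latent_image": ["4", 0]}},
    "6": {"class_type": "VAEDecode",
          "inputs": {"samples": ["5", 0], "vae": ["1", 2]}},
    "7": {"class_type": "SaveImage",
          "inputs": {"images": ["6", 0], "filename_prefix": "example"}},
}


def with_seed(workflow, seed):
    """Return a copy of the workflow with every KSampler seed set to `seed`."""
    updated = copy.deepcopy(workflow)
    for node in updated.values():
        if node.get("class_type") == "KSampler":
            node["inputs"]["seed"] = seed
    return updated


def queue_prompt(workflow, server="http://127.0.0.1:8188"):
    """POST the workflow to a running ComfyUI server (assumed local default address)."""
    body = json.dumps({"prompt": workflow}).encode("utf-8")
    request = urllib.request.Request(
        f"{server}/prompt",
        data=body,
        headers={"Content-Type": "application/json"},
    )
    with urllib.request.urlopen(request) as response:
        return json.loads(response.read())


if __name__ == "__main__":
    # Serialize a variant with a fixed seed; this string is what would be shared.
    shared = json.dumps(with_seed(WORKFLOW, 42), indent=2)
    print(len(json.loads(shared)), "nodes in the shared workflow")
```

Because the whole graph, including seed, sampler, and step count, lives in one JSON document, someone with the same models installed can load it and rerun the same setup. `queue_prompt` is left uncalled here since it needs a running server.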

Pricing:

Free (open source)

Pros:

- + Complete control over every generation parameter, per r/StableDiffusion

- + Faster than AUTOMATIC1111 on the same hardware, per community benchmarks

- + JSON workflows are shareable and reproducible across setups

- + Better FLUX model support than AUTOMATIC1111, per community reports

Cons:

- - Steeper learning curve for beginners than AUTOMATIC1111 or Forge

- - Node interface can be overwhelming without prior workflow familiarity

- - Error messages are less beginner-friendly than in alternative interfaces

Best For:

AI artists who want precise control over every aspect of image generation and are willing to invest time learning the node-based workflow system.