Cloud GPU Providers Compared: Pricing, Speed, and Which to Use in 2026

Key Takeaways

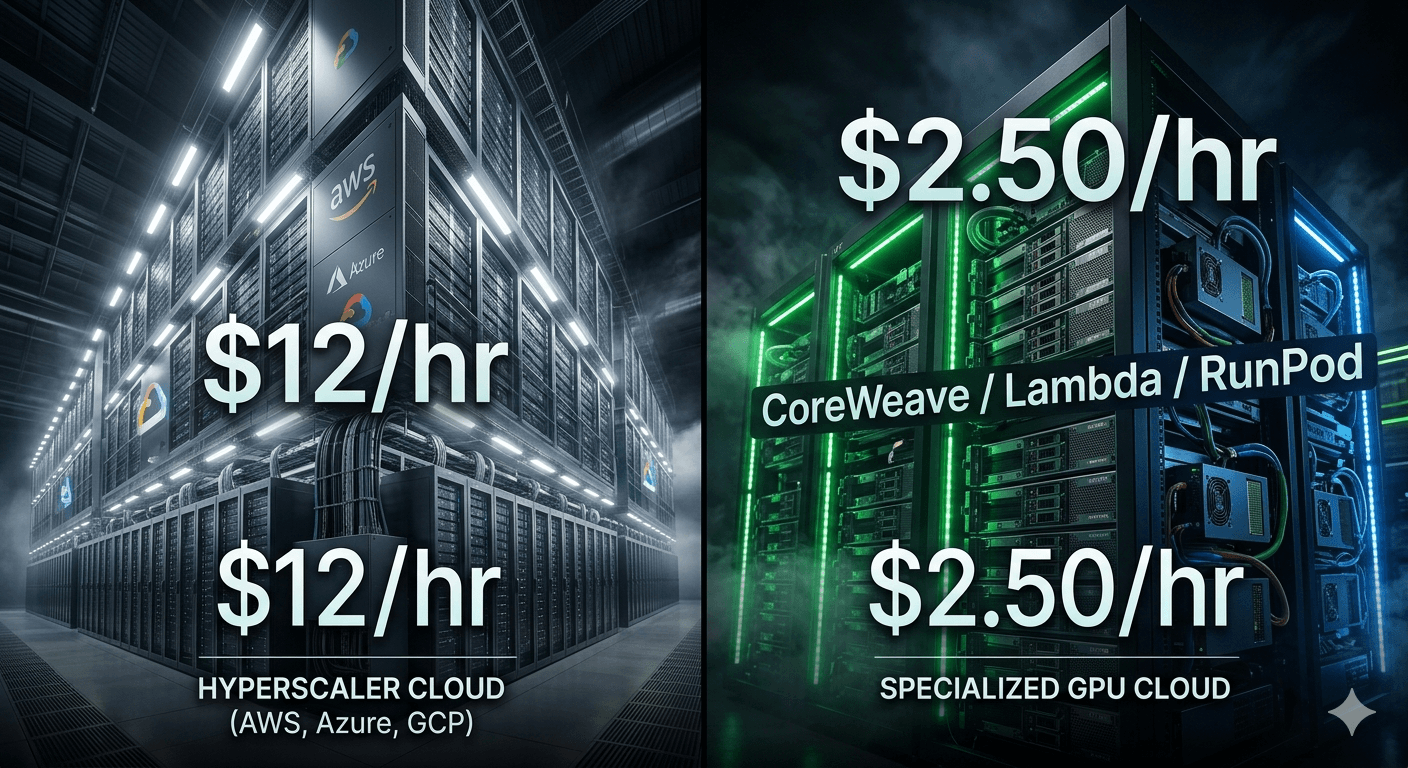

1. Cloud GPU providers rent access to NVIDIA H100, A100, and B200 GPUs over the internet. Hyperscalers (AWS, Azure, Google Cloud) charge roughly 2-6x more per GPU-hour than specialized providers like CoreWeave, Lambda Labs, and RunPod for equivalent hardware.

2. AWS charges $6.88/hr for a single on-demand H100; Azure charges $12.29/hr. Specialized providers charge $2-3/hr for the same GPU. Running 100 H100s at AWS 24/7 costs roughly $6M per year. The same cluster at a specialized provider costs $1.75-2.63M.

3. CoreWeave went public on March 28, 2025 at $40/share, valuing the company at roughly $23B on a fully diluted basis, and reported $5B in annual revenue for 2025. NVIDIA owns 6% of CoreWeave, reflecting how tightly GPU supply and cloud infrastructure are now connected.

Cloud GPU providers give developers, researchers, and companies access to NVIDIA H100s, A100s, and B200s without buying the hardware. The key fact most comparisons skip: there are two distinct markets operating at very different price points. Hyperscalers (AWS, Azure, Google Cloud) charge $6-12 per GPU-hour for on-demand H100 access. Specialized GPU clouds (CoreWeave, Lambda Labs, RunPod) charge $2-3 per GPU-hour for the same hardware.

That gap is not a sale or a promotion. It reflects the structural difference between what hyperscalers are and what specialized GPU clouds are. AWS bundles GPU compute with its global network, storage, compliance infrastructure, and enterprise support. Specialized providers strip all of that away and offer raw GPU access at lower rates, often on bare-metal machines with InfiniBand interconnects optimized for distributed AI training.

After reading this guide, you will understand the real pricing landscape across six major providers, why hyperscalers cost more, what CoreWeave's rise signals about the GPU cloud market, and which provider type is right for training versus inference workloads.

In This Article

1. What Is a Cloud GPU Provider?
2. The Major Cloud GPU Providers in 2026
3. GPU Pricing Comparison Across Providers (Q1 2026)
4. What You Actually Pay at Scale
5. Hyperscalers vs. Specialized Providers: The Real Trade-offs
6. CoreWeave and Why the GPU Cloud Market Is Shifting
7. How to Choose the Right Cloud GPU Provider for Your Workload

What Is a Cloud GPU Provider?

A cloud GPU provider rents access to GPU compute over the internet, billed by the hour or second, without requiring users to buy, install, or maintain physical hardware. Users connect via API or SSH, run their workloads, and pay only for what they use.

There are two distinct categories in this market, and confusing them leads to poor purchasing decisions.

Hyperscalers are companies whose primary business is general-purpose cloud computing: AWS, Microsoft Azure, and Google Cloud. They offer GPU instances as one product among hundreds. Their GPU offerings come with the full hyperscaler stack: global CDN, managed Kubernetes, compliance certifications, enterprise SLAs, and deep integration with their own storage and networking services. That breadth is the reason they cost more.

Specialized GPU clouds (also called GPU-as-a-Service providers or neoclouds) exist specifically to provide GPU compute. CoreWeave, Lambda Labs, RunPod, and Vast.ai have no general-purpose cloud services. Their entire infrastructure is designed around dense GPU clusters, high-bandwidth InfiniBand networking, and fast NVMe storage. They are cheaper for raw GPU work because they have stripped away everything that is not a GPU.

| Category | Examples | H100 On-Demand Price | Best For |
|---|---|---|---|
| Hyperscaler | AWS, Azure, Google Cloud | $6-12/hr per GPU | Enterprise, compliance-heavy, multi-service workloads |
| Specialized GPU cloud | CoreWeave, Lambda Labs, RunPod | $2-3/hr per GPU | AI training, inference, research, cost-sensitive teams |
| Spot or community market | Vast.ai, RunPod community cloud | $0.50-1.50/hr | Experiments, non-critical batch jobs |

The third category, community or spot markets, is worth noting. Vast.ai and RunPod's community cloud allow individual hardware owners to rent out spare GPU capacity. Prices can be extremely low, but availability is inconsistent and there are no uptime guarantees.

The Major Cloud GPU Providers in 2026

Six providers dominate the cloud GPU market in 2026. Here is a factual breakdown of each.

| Provider | Type | GPU Tiers Available | Key Differentiator |
|---|---|---|---|
| AWS | Hyperscaler | H100, A100, B200, L40S | Deepest ecosystem; p4, p5, p6 instance families |
| Azure | Hyperscaler | H100 (ND H100 v5 series) | Microsoft and OpenAI partnership; Azure OpenAI Service integration |
| Google Cloud | Hyperscaler | A100, H100, TPU v5 | TPU access; per-second billing; best for TensorFlow/JAX |
| CoreWeave | Specialized | H100, H200, A100, B200 | Bare-metal InfiniBand clusters; Kubernetes-native; NVIDIA-backed |
| Lambda Labs | Specialized | H100, A100, B200 | Researcher-friendly reserved instances at competitive rates |
| RunPod | Specialized and community | H100, A100, B200, RTX 4090 | Secure cloud and community cloud tiers; widest GPU variety |

AWS

AWS is the largest cloud provider overall, with GPU instances across the p4de series (A100 80GB), p5 series (H100), and the newer p6 series (B200). On-demand H100 pricing via AWS p5 instances runs approximately $6.88/hr per GPU as of Q1 2026 (Spheron Network analysis). Reserved instances at 1- or 3-year commitments reduce that substantially for teams with predictable workloads.

Azure

Azure's ND H100 v5 series carries the highest on-demand H100 price among hyperscalers at approximately $12.29/hr per GPU as of Q1 2026 (Spheron Network analysis). Azure has a structural advantage in AI: Microsoft's partnership with OpenAI makes Azure the infrastructure backbone for ChatGPT and the OpenAI API. For organizations already in the Microsoft ecosystem, that integration can justify the premium.

Google Cloud

Google Cloud offers A100 instances (A2 series) at approximately $5.78/hr per GPU as of Q1 2026 (Spheron Network analysis). It also offers its own AI accelerator, the Tensor Processing Unit (TPU), which is purpose-built for TensorFlow and JAX workloads. Google's per-second billing and committed use discounts reduce costs for predictable workloads.

CoreWeave

CoreWeave is one of the most significant new entrants in cloud compute in a generation. It went public on March 28, 2025 at $40 per share, which valued the company at approximately $23 billion on a fully diluted basis. The company reported $1.9 billion in revenue for 2024 (a 737% year-over-year increase) and $5 billion for full-year 2025 (CoreWeave investor relations, 2026). NVIDIA owns approximately 6% of CoreWeave, reflecting a strategic alignment: CoreWeave is one of the primary distributors of NVIDIA H100 and H200 capacity to AI developers.

CoreWeave's infrastructure is built around bare-metal GPU clusters with InfiniBand networking, specifically suited to the large-scale distributed training workloads covered in the AI training vs. inference cost guide.

Lambda Labs

Lambda Labs focuses on the AI research and developer segment. It offers H100 and A100 reserved instances at rates competitive with CoreWeave, and its B200 instances start at approximately $4.99-5.29/hr (2026 pricing). Lambda's dashboard is simpler than AWS or Azure, making it a common first choice for teams migrating off consumer-grade infrastructure.

RunPod

RunPod operates two tiers: Secure Cloud (dedicated data center infrastructure) and Community Cloud (spare capacity from individual GPU owners). Secure Cloud B200 instances start at $4.99/hr. Community Cloud H100s can be as low as $2/hr with spot-style pricing. RunPod is popular for inference deployments and smaller training runs because it offers the widest GPU variety of any provider, including RTX 4090s at under $1/hr for cost-sensitive inference tasks.

GPU Pricing Comparison Across Providers (Q1 2026)

This table shows on-demand GPU pricing across the major providers as of Q1 2026. All figures are per GPU per hour, on-demand, with no commitment discounts applied.

| Provider | H100 SXM (80GB) | A100 (80GB) | B200 | Notes |
|---|---|---|---|---|
| AWS | $6.88 | $3.43 | $14.24 | p5/p4de/p6 series |
| Azure | $12.29 | n/a | n/a | ND H100 v5 series |
| Google Cloud | n/a | $5.78 | n/a | A2 series; H100 available |
| CoreWeave | $2.50-3.00 | $1.80-2.20 | $5.00-6.00 | Contract-based pricing |
| Lambda Labs | $2.49 | $1.99 | $4.99-5.29 | Reserved and on-demand |
| RunPod (Secure) | $2.49 | $1.90 | $4.99 | Secure Cloud tier |
| Spheron | $2.01 | $1.07 | $6.03 | Spot pricing available |

Sources: Spheron Network pricing analysis Q1 2026; Lambda Labs and RunPod public pricing pages; AWS p5 and p4de instance pricing.

Three important context points for this table:

On-demand versus reserved: The hyperscaler prices shown are on-demand, the most expensive tier. AWS Reserved Instances with a 1-year commitment typically reduce GPU costs by 30-40%, bringing the $6.88/hr H100 rate down to roughly $4.10-4.80/hr. That narrows the gap with specialized providers considerably, but does not close it.

Spot pricing: AWS spot instances and RunPod community cloud prices can be 40-70% below on-demand rates. Spot instances are preemptible. AWS can reclaim spot capacity with a 2-minute warning. This is acceptable for checkpoint-aware training jobs and not acceptable for production inference serving.

Networking costs: Hyperscalers charge data egress fees of $0.08-0.09/GB for data leaving their clouds. Specialized providers often charge lower or zero egress fees. For training jobs that pull large datasets from external storage, this difference can add materially to the total bill.
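The discount ranges above translate into effective hourly rates with one multiplication. The following is a minimal sketch, assuming the discount applies uniformly to the on-demand rate; `discounted_rate` is an illustrative helper name, and the percentages are the ranges quoted in this section rather than published provider quotes.

```python
def discounted_rate(on_demand: float, discount: float) -> float:
    """Hourly rate after a fractional discount (0.30 means 30% off)."""
    if not 0.0 <= discount < 1.0:
        raise ValueError("discount must be in the range [0, 1)")
    return on_demand * (1.0 - discount)


AWS_H100_ON_DEMAND = 6.88  # USD per GPU-hour, from the pricing table

# Reserved: 30-40% off. Spot: 40-70% off. Both ranges come from the text.
reserved = [round(discounted_rate(AWS_H100_ON_DEMAND, d), 2) for d in (0.30, 0.40)]
spot = [round(discounted_rate(AWS_H100_ON_DEMAND, d), 2) for d in (0.40, 0.70)]

print("reserved:", reserved)  # [4.82, 4.13]
print("spot:", spot)          # [4.13, 2.06]
```

Under these assumptions, only the deepest spot discount brings the AWS rate into the $2-3/hr band that specialized providers charge on-demand.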

"Hyperscalers charge 3 to 6 times more for equivalent GPU compute compared to specialized AI cloud providers." (Spheron Network pricing analysis, Q1 2026)

What You Actually Pay at Scale

The hourly price difference looks manageable for a single GPU. At cluster scale, it compounds rapidly.

The Number Most Guides Don't Show

Consider a team running 100 H100 GPUs continuously for AI training: a modestly sized production setup, not a frontier model run.

At AWS on-demand ($6.88/hr): 100 GPUs x $6.88 x 8,760 hours/year = **$6,026,880/year**

At a specialized provider ($2.50/hr average): 100 GPUs x $2.50 x 8,760 hours/year = **$2,190,000/year**

The annual price gap is $3.84 million for the exact same GPU hardware. That is enough to hire several ML engineers, fund a separate inference cluster, or cover a year of experimentation budget.

At Azure's H100 rate ($12.29/hr), the same 100-GPU cluster costs $10,766,040/year, nearly 5x what a specialized provider charges. This is not a hypothetical. It is why companies like Mistral AI and dozens of AI labs run their training infrastructure on CoreWeave or Lambda Labs rather than AWS, even when their customer-facing products are hosted on AWS or Azure for compliance and ecosystem reasons.
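The cluster arithmetic above can be reproduced in a few lines. This is a minimal sketch: the rates are the on-demand figures quoted in this section, while `annual_cost` and its `utilization` parameter are illustrative names added here so you can model clusters that do not run 24/7.

```python
HOURS_PER_YEAR = 24 * 365  # 8,760 hours; leap years ignored


def annual_cost(gpus: int, rate_per_gpu_hour: float, utilization: float = 1.0) -> float:
    """Yearly spend for a cluster billed per GPU-hour.

    utilization is the fraction of the year the cluster is running (1.0 = 24/7).
    """
    return gpus * rate_per_gpu_hour * HOURS_PER_YEAR * utilization


# On-demand H100 rates (USD per GPU-hour) quoted in this article.
rates = {"AWS": 6.88, "Azure": 12.29, "Specialized (average)": 2.50}

for provider, rate in rates.items():
    print(f"{provider}: ${annual_cost(100, rate):,.0f}/year")

gap = annual_cost(100, rates["AWS"]) - annual_cost(100, rates["Specialized (average)"])
print(f"AWS vs. specialized gap: ${gap:,.0f}/year")
```

Lowering `utilization` (for example to 0.5 for a cluster that runs half the time) scales every figure proportionally, so the ratio between providers stays the same even when the absolute gap shrinks.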

Reserved vs. On-Demand: When Commitments Make Sense

AWS, Azure, and Google Cloud all offer significant discounts for 1- or 3-year reserved instances.

| Commitment | AWS H100 (estimated) | Specialized Provider | Premium |
|---|---|---|---|
| On-demand | $6.88/hr | $2.50/hr | 2.75x |
| 1-year reserved | ~$4.20/hr | ~$2.00/hr | 2.1x |
| 3-year reserved | ~$3.00/hr | ~$1.60/hr | 1.9x |

Even at maximum commitment, hyperscalers remain roughly 2x more expensive for raw GPU compute. The premium buys something real: global infrastructure, compliance certifications, enterprise support, and deep service integration. For teams that need those things, the premium is rational. For teams that only need GPUs, it is not.
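The premium column in the commitment table is simply the ratio of the two hourly rates. A short sketch, using the estimated rates from that table (the `premium` helper is an illustrative name), shows how to recompute it for your own quotes:

```python
def premium(hyperscaler_rate: float, specialized_rate: float) -> float:
    """How many times more the hyperscaler charges per GPU-hour."""
    return hyperscaler_rate / specialized_rate


# (AWS H100 estimate, specialized provider estimate) in USD per GPU-hour,
# taken from the commitment table in this section.
tiers = {
    "on-demand": (6.88, 2.50),
    "1-year reserved": (4.20, 2.00),
    "3-year reserved": (3.00, 1.60),
}

for tier, (aws_rate, specialized_rate) in tiers.items():
    print(f"{tier}: {premium(aws_rate, specialized_rate):.2f}x")
```

Substituting a negotiated contract rate for either side of a pair is the quickest way to check whether a reserved-instance offer actually changes the comparison.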

The NVIDIA H100 specs and pricing guide covers the hardware cost structure in detail, including why H100 pricing is what it is at the chip level.

Hyperscalers vs. Specialized Providers: The Real Trade-offs

Choosing between a hyperscaler and a specialized GPU cloud is not purely a price decision. It involves real architectural trade-offs.

Where Hyperscalers Win

Compliance and certifications: AWS, Azure, and Google Cloud hold SOC 2, ISO 27001, and FedRAMP attestations and support HIPAA-compliant deployments. For healthcare AI, financial services, and government contracts, these are often non-negotiable. Most specialized providers hold SOC 2 but not the full stack.

Service integration: If your training pipeline pulls data from S3, or your inference serving needs to integrate with Azure Active Directory, staying within a hyperscaler's ecosystem reduces latency and simplifies architecture. Moving data across cloud providers adds cost and complexity.

Managed services: AWS SageMaker, Azure Machine Learning, and Google Vertex AI provide end-to-end ML platforms with experiment tracking, model registries, and deployment pipelines. Building equivalent pipelines on a specialized GPU cloud requires more engineering work.

Global footprint: AWS operates 33 geographic regions; Azure operates 60+. Specialized providers typically operate from 3-10 data center locations. For latency-sensitive inference serving to global users, geographic distribution matters.

Where Specialized Providers Win

Raw GPU performance per dollar: Bare-metal access, InfiniBand networking between nodes, and NVMe storage are standard on CoreWeave and Lambda Labs. AWS virtualizes its GPU instances, which adds overhead and reduces effective GPU utilization for distributed training workloads.

GPU availability: During the H100 shortage of 2023-2024, CoreWeave and Lambda Labs had better availability than AWS and Azure because they secured direct NVIDIA allocations early. Specialized providers typically have longer-standing GPU procurement relationships.

Simpler pricing: AWS GPU instance pricing involves multiple dimensions: instance type, region, tenancy, storage type, and data transfer. Specialized providers charge by GPU-hour with minimal add-on fees.

Faster provisioning: Specialized providers provision GPU instances in minutes with no account approval delays. AWS GPU instances above certain quota thresholds require service limit increase requests that can take days.

| Factor | Hyperscalers | Specialized Providers |
|---|---|---|
| H100 on-demand price | $6-12/hr | $2-3/hr |
| Compliance certifications | Full stack (HIPAA, FedRAMP) | SOC 2 at minimum |
| Bare-metal access | Virtualized | Bare-metal available |
| InfiniBand networking | Select instance types | Standard on clusters |
| Geographic regions | 30-60+ | 3-10 |
| Managed ML services | Yes | No or limited |
| Setup complexity | High | Lower |

CoreWeave and Why the GPU Cloud Market Is Shifting

CoreWeave's trajectory explains more about the GPU cloud market than any other data point.

The company was founded in 2017 as a cryptocurrency mining operation and pivoted to GPU cloud computing in 2019, betting that AI compute demand would grow faster than general cloud services. That bet proved correct. CoreWeave went public on March 28, 2025 at $40 per share, which valued the company at approximately $23 billion on a fully diluted basis.

The revenue growth is striking. CoreWeave reported $1.9 billion in revenue for full-year 2024, a 737% increase year-over-year. By the end of 2025, revenue had grown to $5 billion annually. CEO Michael Intrator described this as "the fastest any cloud has reached $5 billion in annual revenue" (CoreWeave investor relations, 2026). The company also disclosed $15.1 billion in remaining performance obligations at the end of 2024, representing contracted future revenue with an average contract duration of four years.

"CoreWeave achieved $5 billion in annual revenue for 2025, the fastest any cloud has reached that milestone." (Michael Intrator, CoreWeave CEO, CoreWeave investor relations, 2026)

NVIDIA's 6% equity stake in CoreWeave is worth examining. NVIDIA is not simply a supplier to CoreWeave. It is a strategic partner. CoreWeave was among the first cloud providers to deploy H100s at scale and has continued to receive early allocations of H200s and GB200s (Blackwell generation). This relationship means CoreWeave consistently has hardware availability that hyperscalers, which compete through NVIDIA's standard allocation process, sometimes cannot match.

The broader implication: AI training workloads are migrating from general-purpose hyperscaler infrastructure toward purpose-built GPU clouds. Hyperscalers are responding with investments in GPU-optimized instances, but specialized providers built GPU-first architecture from day one. That structural head start shows in both pricing and distributed training performance benchmarks.

How to Choose the Right Cloud GPU Provider for Your Workload

The right provider depends on what you are building, how long you are running, and what compliance requirements you operate under.

For AI training at scale (multi-GPU, multi-node)

Use CoreWeave or Lambda Labs for training runs above 8 GPUs. Both offer bare-metal H100 or H200 clusters with InfiniBand networking, which is critical for distributed training. Without InfiniBand or an equivalent high-bandwidth interconnect, multi-node training throughput drops dramatically because GPUs spend more time waiting on gradient exchange than computing. The AI training vs. inference cost guide covers why the interconnect matters as much as the GPU itself.

For training on hyperscalers, use AWS p5 with EFA (Elastic Fabric Adapter) or Azure ND H100 v5, which are the specific instance types that include proper high-bandwidth interconnects for multi-node training. Standard GPU instances do not.

For inference and serving

Inference does not require InfiniBand. Single-GPU inference is the primary use case for RunPod community cloud, which offers H100s at around $2/hr and RTX 4090s at under $1/hr. For production inference with uptime requirements, use RunPod Secure Cloud or Lambda Labs.

For bursty or short-lived inference workloads, Google Cloud has a cost advantage through per-second billing, which reduces the idle GPU costs that accumulate with hourly billing on other platforms.
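The effect of billing granularity is easiest to see on a short job. The sketch below assumes runtime is rounded up to the next billing increment and ignores minimum charges, which some providers apply; `billed_cost` is an illustrative helper, and the rate is the Google Cloud A100 figure quoted earlier in this article.

```python
import math


def billed_cost(runtime_seconds: float, rate_per_hour: float, increment_seconds: int) -> float:
    """Cost when runtime is rounded up to the next billing increment."""
    increments = math.ceil(runtime_seconds / increment_seconds)
    billed_seconds = increments * increment_seconds
    return billed_seconds / 3600 * rate_per_hour


RATE = 5.78          # USD per GPU-hour (A100 rate quoted in this article)
JOB_SECONDS = 10 * 60  # a hypothetical 10-minute inference batch

hourly = billed_cost(JOB_SECONDS, RATE, increment_seconds=3600)
per_second = billed_cost(JOB_SECONDS, RATE, increment_seconds=1)

print(f"hourly billing:     ${hourly:.2f}")
print(f"per-second billing: ${per_second:.2f}")
```

For a GPU that stays busy all hour the two models converge, so granularity matters most for intermittent traffic and short batch jobs.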

For experiments and prototyping

Vast.ai and RunPod community cloud are the cheapest options for non-critical workloads. Expect occasional interruptions, but at $0.50-1.00/hr for an A100, the economics of throwaway experiments are compelling.

For regulated industries

Use AWS, Azure, or Google Cloud. Full compliance certification stacks are not optional for healthcare, finance, and government deployments. The premium is largely a compliance cost.
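The guidance in this section can be condensed into a small lookup. This is a sketch of the article's rules of thumb, not a procurement tool: the `Workload` fields and the `recommend` function are hypothetical names, and the choice for training runs of 8 GPUs or fewer is an assumption based on the earlier note that RunPod is popular for smaller training runs.

```python
from dataclasses import dataclass


@dataclass
class Workload:
    kind: str                  # "training", "inference", or "experiment"
    gpus: int = 1
    regulated: bool = False    # healthcare, finance, government
    needs_uptime: bool = False # production serving with uptime requirements


def recommend(w: Workload) -> str:
    """Map a workload description to the provider type suggested in this guide."""
    if w.regulated:
        return "hyperscaler (AWS, Azure, Google Cloud)"
    if w.kind == "experiment":
        return "community or spot market (Vast.ai, RunPod community cloud)"
    if w.kind == "training":
        if w.gpus > 8:
            return "specialized InfiniBand cluster (CoreWeave, Lambda Labs)"
        # Assumption: smaller runs fit on a single node at a specialized provider.
        return "specialized single-node instance (RunPod, Lambda Labs)"
    if w.kind == "inference":
        if w.needs_uptime:
            return "RunPod Secure Cloud or Lambda Labs"
        return "RunPod community cloud"
    raise ValueError(f"unknown workload kind: {w.kind!r}")


print(recommend(Workload(kind="training", gpus=64)))
print(recommend(Workload(kind="inference", needs_uptime=True)))
print(recommend(Workload(kind="training", gpus=64, regulated=True)))
```

Compliance is checked first because, as noted above, it overrides price for regulated deployments.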

Questions to ask before committing

1. What GPU models do you need, and are they available at this provider right now?
2. Does your workload require multi-node InfiniBand, or is single-node GPU sufficient?
3. What are the data egress costs if you pull training data from external storage?
4. Does your organization need SOC 2, HIPAA, or FedRAMP certification?
5. Is on-demand access acceptable, or do you need guaranteed reserved capacity?

GPU hourly rate is one variable. Storage I/O speed, network bandwidth between nodes, and data egress pricing all affect the total cost of a real workload. For a 100-GPU training run that moves 10 TB of training data out of AWS, egress alone adds $800-900 to the bill at $0.08-0.09/GB. That cost does not appear on most specialized provider invoices.
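A rough way to fold egress into a run estimate is sketched below. It assumes decimal units (1 TB = 1,000 GB) and a flat per-GB rate; real egress pricing is usually tiered. The 72-hour run length is a hypothetical input, and `egress_cost` and `run_cost` are illustrative names.

```python
def egress_cost(data_tb: float, rate_per_gb: float, gb_per_tb: int = 1000) -> float:
    """Egress charge for moving data_tb terabytes at a flat per-GB rate."""
    return data_tb * gb_per_tb * rate_per_gb


def run_cost(gpus: int, hours: float, gpu_rate: float,
             data_tb: float = 0.0, egress_rate: float = 0.0) -> float:
    """GPU-hours plus egress for a single training run."""
    return gpus * hours * gpu_rate + egress_cost(data_tb, egress_rate)


# Hypothetical 72-hour run on 100 H100s that moves 10 TB of data.
aws = run_cost(100, 72, 6.88, data_tb=10, egress_rate=0.09)
specialized = run_cost(100, 72, 2.50)  # assumes zero egress fees

print(f"10 TB egress at $0.09/GB: ${egress_cost(10, 0.09):,.0f}")
print(f"AWS run total:            ${aws:,.0f}")
print(f"Specialized run total:    ${specialized:,.0f}")
```

On a run this size the GPU-hours dominate, so egress matters most for pipelines that repeatedly move large datasets between clouds.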

Frequently Asked Questions

What is the cheapest cloud GPU provider for H100s in 2026?

Among the providers compared here, Spheron lists the lowest H100 rate at approximately $2.01/hr as of Q1 2026. Lambda Labs and RunPod Secure Cloud are at about $2.49/hr. CoreWeave prices vary by contract but are typically $2.50-3.00/hr for H100 access. These compare to AWS at $6.88/hr and Azure at $12.29/hr for the same GPU on-demand.

Why is Azure more expensive than AWS for GPU compute?

Azure's ND H100 v5 instances are priced at approximately $12.29/hr per GPU on-demand as of Q1 2026, compared to AWS's $6.88/hr. The gap reflects Azure's cost structure, instance type design, and its enterprise market positioning. Both offer reserved instance discounts that narrow the gap for committed workloads. Azure's premium is partly offset by its deep Microsoft ecosystem integration and the OpenAI partnership that makes it the infrastructure backbone for Azure OpenAI Service deployments.

What is CoreWeave and why is it significant?

CoreWeave is a specialized GPU cloud provider focused entirely on AI infrastructure. It went public on March 28, 2025 at $40 per share, which valued the company at approximately $23 billion on a fully diluted basis. The company reported $1.9 billion in revenue for 2024 (737% year-over-year growth) and $5 billion for full-year 2025. NVIDIA owns 6% of CoreWeave. CoreWeave is significant because it represents the shift of serious AI training workloads away from general-purpose hyperscalers toward purpose-built GPU infrastructure, typically at one-third to one-half the cost per GPU-hour.

Can I use AWS spot instances to reduce GPU cloud costs?

Yes. AWS EC2 spot instances for GPU workloads can be 40-70% cheaper than on-demand pricing. The trade-off is interruption: AWS can reclaim spot capacity with a 2-minute warning. Spot instances work well for checkpoint-aware training jobs that can resume from a saved state. They are not suitable for real-time inference serving, interactive workloads, or training runs without checkpointing logic. Azure Spot VMs and Google Cloud preemptible instances follow similar models.
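What "checkpoint-aware" means in practice is sketched below. This is a simplified illustration, not a provider API: it assumes the platform or a wrapper script delivers `SIGTERM` to the process when the interruption warning arrives, and it stores only a step counter, where a real training job would also save model and optimizer state. `CheckpointedJob` and `install_sigterm_handler` are hypothetical names.

```python
import json
import os
import signal
import tempfile


class CheckpointedJob:
    """Runs total_steps units of work and persists progress so a restart resumes."""

    def __init__(self, checkpoint_path: str, total_steps: int):
        self.checkpoint_path = checkpoint_path
        self.total_steps = total_steps
        self.stop_requested = False

    def request_stop(self, signum=None, frame=None) -> None:
        # Signature matches what signal.signal() expects of a handler.
        self.stop_requested = True

    def load_step(self) -> int:
        if not os.path.exists(self.checkpoint_path):
            return 0
        with open(self.checkpoint_path) as f:
            return json.load(f)["step"]

    def save_step(self, step: int) -> None:
        # Write to a temp file, then rename, so a kill mid-write cannot
        # leave a corrupt checkpoint behind.
        directory = os.path.dirname(os.path.abspath(self.checkpoint_path))
        fd, tmp_path = tempfile.mkstemp(dir=directory)
        with os.fdopen(fd, "w") as f:
            json.dump({"step": step}, f)
        os.replace(tmp_path, self.checkpoint_path)

    def run(self, work, checkpoint_every: int = 100) -> int:
        step = self.load_step()  # resume from the last saved step, if any
        while step < self.total_steps and not self.stop_requested:
            work(step)
            step += 1
            if step % checkpoint_every == 0:
                self.save_step(step)
        self.save_step(step)  # final save, also taken when interrupted
        return step


def install_sigterm_handler(job: CheckpointedJob) -> None:
    """Ask the job to stop cleanly when the process receives SIGTERM."""
    signal.signal(signal.SIGTERM, job.request_stop)
```

A typical use is to construct the job, call `install_sigterm_handler(job)`, and then call `job.run(train_one_step)`. If the instance is reclaimed, relaunching the same script on a new instance picks up from the saved step, provided the checkpoint file lives on storage that outlives the instance.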

What is the difference between a hyperscaler and a neocloud GPU provider?

A hyperscaler (AWS, Azure, Google Cloud) offers general-purpose cloud computing with GPUs as one product among hundreds. GPU instances are virtualized and come with the full cloud stack: managed services, compliance certifications, global regions, and enterprise support. A neocloud or specialized GPU cloud (CoreWeave, Lambda Labs, RunPod) exists solely to provide GPU compute, typically on bare-metal machines with InfiniBand networking. Neoclouds are 2-6x cheaper for raw GPU access but offer fewer managed services and fewer geographic regions.

Do cloud GPU providers offer NVIDIA H200 or B200 GPUs?

Yes. As of Q1 2026, AWS offers B200 instances (p6 series) at approximately $14.24/hr per GPU on-demand. Lambda Labs offers B200 instances at $4.99-5.29/hr. RunPod Secure Cloud offers B200 at $4.99/hr. CoreWeave offers H200 and GB200 (Blackwell generation) instances, with availability depending on reservation and contract terms. H200 and B200 deliver roughly 2-3x the AI inference throughput of H100.

What are the hidden costs of cloud GPU providers?

The main hidden costs are: data egress fees (AWS and Azure charge $0.08-0.09/GB for data leaving their clouds; a 10TB training dataset costs $800-900 in egress before a single GPU-hour is billed); fast storage add-ons (AWS EBS and Azure Premium SSD add $0.10-0.20/GB-month); networking between nodes (not all instance types include InfiniBand, which is required for efficient multi-GPU distributed training); and idle GPU billing (hourly billing means idle GPUs still cost, while Google Cloud per-second billing helps for short workloads).

Is RunPod community cloud reliable for AI workloads?

RunPod community cloud rents spare GPU capacity from individual hardware owners. Pricing is competitive ($0.50-2/hr for H100s in 2026), but there are no enterprise SLAs and availability is variable. It works well for batch jobs that tolerate occasional interruptions and experimental workloads where losing a run is acceptable. RunPod Secure Cloud uses dedicated data center infrastructure with defined uptime commitments. For production inference or time-sensitive training runs, Secure Cloud or a provider like Lambda Labs is a safer choice.

Related Articles

AI Training vs Inference: What's the Difference and Why the Cost Gap Is Growing

NVIDIA H100 GPU: Full Specs, Price, and Cloud Rates for 2026

NVIDIA H100 vs A100: Full Comparison and When to Upgrade

What Is an AI Accelerator Card? Types, Specs, and Costs for 2026