AI Training vs Inference: What's the Difference and Why the Cost Gap Is Growing


Key Takeaways

- AI training builds a model by adjusting billions of parameters against a dataset. It runs once per model version. AI inference applies that fixed model to new inputs in real time. They require different hardware, different cluster sizes, and carry fundamentally different cost structures.

- GPT-4 training cost approximately $78M in compute (Epoch AI, 2025). ChatGPT's inference costs in 2024 alone exceeded that total. Inference now accounts for 60-80% of AI compute spending in production systems (Kanerika, 2025).

- The AI inference market reached $106B in 2025 and is projected to hit $255B by 2030. Inference-time compute scaling, used in OpenAI o1 and DeepSeek R1, is shifting even more compute from training to inference.

AI training and AI inference are two distinct phases of the machine learning lifecycle, and they differ on almost every dimension that matters for cost and infrastructure planning. Training builds a model. Inference runs it.

Training is a one-time, compute-intensive process where a model learns from a large dataset by adjusting billions of internal parameters. GPT-4's training run consumed approximately $78 million in compute alone, running across an estimated 25,000 NVIDIA A100 GPUs for several months (Epoch AI, Stanford AI Index 2025). That was a single event. Inference is the ongoing process of answering queries using the finished model, and it never stops. In 2024 alone, ChatGPT's cumulative inference costs exceeded that entire training cost.

This article explains how each phase works, why they require different hardware, what they cost in real terms in 2025 and 2026, and what the shift toward inference-time compute scaling means for the future of AI infrastructure spend.

In This Article

- Training and Inference: The Core Difference

- How AI Training Works: Compute, Hardware, and Scale

- How AI Inference Works: Latency, Volume, and Optimisation

- Training vs Inference Costs: The Real Numbers

- Hardware for Training vs Inference: Different Tools for Different Jobs

- Inference-Time Compute: The New Axis of AI Performance

- What This Means for Developers and Infrastructure Buyers

Training and Inference: The Core Difference

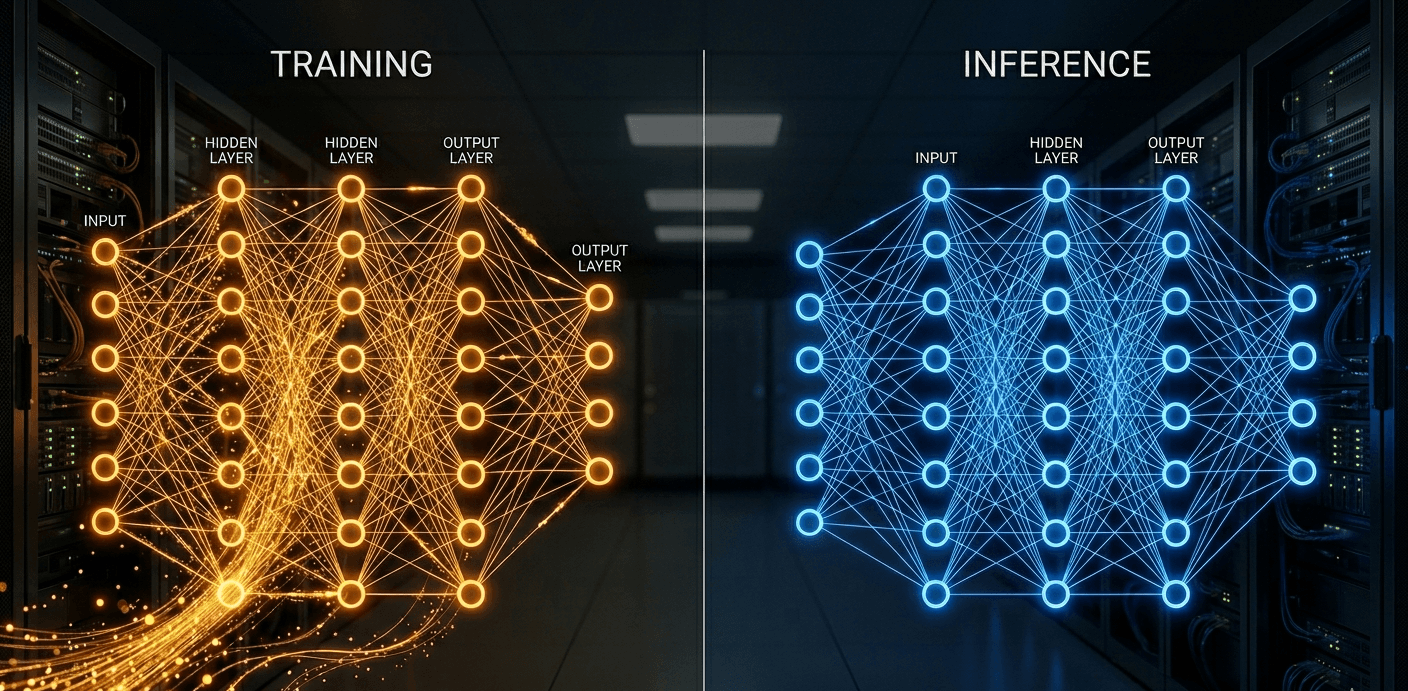

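Training and inference use the same neural network in different ways: training runs data forward through the network and errors backward to update its weights, while inference runs data forward only.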

During training, the model processes a large dataset batch by batch. For each batch, an algorithm called backpropagation works out how much each internal weight contributed to the error, and an optimiser (typically a variant of gradient descent) then adjusts the weights. The goal is to minimise the difference between the model's predictions and the correct answers. This process repeats over a very large number of batches, updating billions of parameters at every step, until the model performs acceptably on a held-out validation set. Training happens offline, in batches, with no user waiting for a response. Speed matters, but it is measured in hours or days, not milliseconds.

During inference, the model's weights are frozen. No learning occurs. The model runs only the forward pass through the network: input comes in, is multiplied by the weights, activations propagate, and an output is produced. (An LLM repeats this pass once for every token it generates.) A ChatGPT response, a spam filter decision, and a fraud detection flag are all inference. Latency is measured in milliseconds. A user is waiting.
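To make the contrast concrete, here is a minimal Python sketch, assuming a toy two-weight linear model rather than a neural network: `train` repeatedly runs the forward pass, measures the error, computes gradients and updates the weights, while `infer` reuses the frozen weights for a single forward pass. The gradients are derived by hand here; in a many-layer network, backpropagation computes them automatically. All names are illustrative.

```python
from typing import List, Tuple

# Toy model: y = w * x + b. Two weights stand in for a network's billions.


def forward(w: float, b: float, x: float) -> float:
    """Forward pass: the only computation inference needs."""
    return w * x + b


def train(data: List[Tuple[float, float]], lr: float = 0.05,
          epochs: int = 500) -> Tuple[float, float]:
    """Training: repeated forward pass, error, gradients, and weight updates."""
    w, b = 0.0, 0.0
    n = len(data)
    for _ in range(epochs):
        grad_w = grad_b = 0.0
        for x, y in data:
            error = forward(w, b, x) - y      # prediction minus ground truth
            grad_w += 2 * error * x / n       # gradient of mean squared error w.r.t. w
            grad_b += 2 * error / n           # gradient w.r.t. b
        w -= lr * grad_w                      # gradient-descent update: weights change
        b -= lr * grad_b
    return w, b


def infer(weights: Tuple[float, float], x: float) -> float:
    """Inference: weights are frozen, so this is a single forward pass."""
    w, b = weights
    return forward(w, b, x)


if __name__ == "__main__":
    data = [(x, 3.0 * x + 1.0) for x in (0.0, 1.0, 2.0, 3.0)]  # true w=3, b=1
    weights = train(data)                   # done once, offline
    print(round(infer(weights, 10.0), 2))   # prediction for a new input
```

Training revisits the same four examples 500 times and changes the weights on every pass; inference on a new input is one multiply and one add with the learned weights (the prediction for x = 10 comes out very close to 31).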

The Fundamental Difference in One Table

| Dimension | Training | Inference |
|---|---|---|
| What happens | Model learns from data, weights update | Frozen model processes new inputs |
| Direction through network | Forward pass + backward pass (backprop) | Forward pass only |
| Frequency | Once per model version (weeks to months) | Continuously in production (millions/day) |
| Latency requirement | None (batch process) | Strict: users wait for responses |
| Compute duration | Days to months per run | Milliseconds to seconds per request |
| Primary cost driver | GPU-hours × cluster size | Volume × cost per query |
| When it fails | Model doesn't learn; cluster time is wasted | Users get slow or wrong responses |

The common misconception is that inference is inherently cheaper than training. Per request, it is. Cumulatively, it is not. A widely used model trained once must answer millions of queries every day for years, so its cumulative inference cost almost always exceeds its training cost, often within months of deployment.

How AI Training Works: Compute, Hardware, and Scale

Training a modern large language model is one of the most compute-intensive activities in commercial technology. The process requires thousands of GPUs or TPUs working in parallel, connected by high-bandwidth networks, running for weeks or months.

The core operation is backpropagation. For each batch of training data, the model makes predictions, compares them to ground truth labels, computes the error, and propagates that error backward through the network to compute a gradient for every parameter, which the optimiser then uses to update it. For a model with 70 billion parameters, this means updating 70 billion numbers per batch. GPT-4 is estimated to have 1.76 trillion parameters (OpenAI has not published the figure), which requires a proportionally larger cluster.
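A back-of-the-envelope memory estimate shows why training needs so many more GPUs than serving the same model. This Python sketch assumes mixed-precision training with the Adam optimiser, a common setup that stores about 16 bytes of state per parameter, versus 2 bytes per parameter for FP16 inference weights; it ignores activations, which add substantially more during training. The function names are illustrative.

```python
# Rough memory needed just to hold model state, in gigabytes (1 GB = 1e9 bytes).
# Assumption: mixed-precision training with Adam keeps FP16 weights (2 B)
# + FP16 gradients (2 B) + FP32 master weights (4 B)
# + two FP32 Adam moment estimates (4 B + 4 B) = 16 bytes per parameter.
# Activations, KV caches and framework overhead are deliberately ignored.

TRAINING_BYTES_PER_PARAM = 2 + 2 + 4 + 4 + 4   # = 16
INFERENCE_BYTES_PER_PARAM = 2                  # frozen FP16 weights only


def state_memory_gb(num_params: float, bytes_per_param: int) -> float:
    """Bytes of state for the given parameter count, expressed in GB."""
    return num_params * bytes_per_param / 1e9


def min_gpus(memory_gb: float, gpu_memory_gb: float = 80.0) -> int:
    """Smallest number of GPUs whose combined memory holds the state."""
    return int(-(-memory_gb // gpu_memory_gb))   # ceiling division


if __name__ == "__main__":
    params = 70e9  # the 70-billion-parameter example from the text
    train_gb = state_memory_gb(params, TRAINING_BYTES_PER_PARAM)
    infer_gb = state_memory_gb(params, INFERENCE_BYTES_PER_PARAM)
    print(f"training state: {train_gb:,.0f} GB -> at least {min_gpus(train_gb)} x 80 GB GPUs")
    print(f"inference weights: {infer_gb:,.0f} GB -> at least {min_gpus(infer_gb)} x 80 GB GPUs")
```

For the 70B example this gives about 1,120 GB of training state (at least 14 GPUs with 80GB each, before counting activations) against 140 GB of inference weights (two GPUs). Real training runs use far more GPUs than this minimum, because the goal is finishing in weeks, not merely fitting in memory.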

Real Training Runs: Compute at Scale

- GPT-4: approximately 25,000 NVIDIA A100 GPUs running for several months; total compute cost approximately $78M (Epoch AI, Stanford AI Index 2025)

- Llama 3 (405B parameters, Meta, 2024): 16,000 NVIDIA H100 GPUs

- DeepSeek V3: approximately $5.6M in compute (Stanford AI Index, 2025), demonstrating that efficiency improvements can dramatically reduce training cost

- Gemini Ultra (Google): trained on Google's custom TPU v4 accelerators rather than NVIDIA GPUs

The hardware requirement for training is high-memory, high-bandwidth GPUs designed for parallel matrix multiplication. NVIDIA's A100 (80GB HBM2e) and H100 (80GB HBM3) are the dominant training GPUs because high-bandwidth memory (HBM) allows the GPU to feed parameters and gradients fast enough to keep its tensor cores busy. The interconnect between GPUs matters as much as the GPUs themselves: gradients must be synchronised across thousands of GPUs at every step, so large training clusters use InfiniBand at 400 Gbps or higher, or specialised high-bandwidth Ethernet fabrics (RDMA over Converged Ethernet, RoCE), rather than standard data center networking.
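To see why interconnect bandwidth matters, the following Python sketch estimates how long one gradient synchronisation takes with a ring all-reduce, a standard algorithm for averaging gradients across GPUs. It assumes plain data parallelism (every GPU holds the full 70B-parameter gradient in 2-byte BF16), a hypothetical 1,024-GPU cluster, and links running at their full rated speed. Real frameworks shard the model and overlap communication with computation, so treat the result as an illustration of scale, not a prediction.

```python
def ring_allreduce_seconds(num_params: float, num_gpus: int,
                           link_gbps: float = 400.0, bytes_per_grad: int = 2) -> float:
    """Idealised time for one ring all-reduce of a full gradient.

    In a ring all-reduce, each GPU sends (and receives) 2 * (N - 1) / N times
    the gradient size. Assumes full link speed, zero latency, no compression,
    and no overlap with computation.
    """
    grad_bytes = num_params * bytes_per_grad
    bytes_per_gpu = 2 * (num_gpus - 1) / num_gpus * grad_bytes
    link_bytes_per_s = link_gbps * 1e9 / 8     # gigabits/s -> bytes/s
    return bytes_per_gpu / link_bytes_per_s


if __name__ == "__main__":
    for gbps in (100, 400):
        t = ring_allreduce_seconds(70e9, num_gpus=1024, link_gbps=gbps)
        print(f"{gbps} Gbps: ~{t:.1f} s per gradient sync")
```

Under these assumptions a single sync takes about 5.6 seconds at 400 Gbps and about 22 seconds at 100 Gbps, and it recurs at every training step, which is why training clusters pay for the fastest fabric available.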

Training clusters run inside hyperscale data centers, not in standard colocation facilities. The power density required for thousands of H100 GPUs drawing up to 700W each, the cooling infrastructure, and the network fabric are only available in purpose-built AI training facilities.

"The compute needed to train frontier AI models has doubled approximately every 6 months since 2010, significantly outpacing Moore's Law." (Stanford AI Index, 2025)
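To put that doubling rate in perspective, assume Moore's Law means a doubling every 24 months (a common formulation). Compounding both rates gives:

```latex
\begin{aligned}
\text{AI training compute, doubling every 6 months:}\quad & 2^{12/6} = 4\times \text{ per year}, & 4^{10} &\approx 1.05 \times 10^{6}\times \text{ per decade}\\
\text{Moore's Law, doubling every 24 months:}\quad & 2^{12/24} \approx 1.41\times \text{ per year}, & 2^{120/24} &= 32\times \text{ per decade}
\end{aligned}
```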

How AI Inference Works: Latency, Volume, and Optimisation

Inference is the production phase of AI. Once a model is trained, it is deployed to serve queries from real users, and the infrastructure it runs on is optimised for entirely different goals: low latency, high throughput, and cost per query.

For a simple classifier, a single inference request is one forward pass through the model. An LLM like GPT-4o does more work: it processes the input tokens in one pass, multiplying them against billions of weights and computing attention across the context window, then samples output tokens one at a time until the response is complete. A 200-word response from GPT-4o involves generating roughly 270 tokens, each requiring a separate forward pass or speculative decoding step.
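The token-by-token loop described above can be sketched in a few lines of Python. `toy_next_token` is a hypothetical stand-in for a real model's forward pass (it just returns the previous token id plus one); the point is the control flow, where each generated token costs one forward pass. The words-to-tokens conversion uses the rough 0.75-words-per-token rule of thumb.

```python
from typing import Callable, List, Tuple


def generate(next_token: Callable[[List[int]], int], prompt: List[int],
             max_new_tokens: int) -> Tuple[List[int], int]:
    """Greedy autoregressive decoding: one forward pass per generated token.

    The first pass also processes the whole prompt (the "prefill");
    every later pass extends the sequence by exactly one token.
    """
    tokens = list(prompt)
    passes = 0
    for _ in range(max_new_tokens):
        tokens.append(next_token(tokens))   # one forward pass -> one new token
        passes += 1
    return tokens[len(prompt):], passes


def toy_next_token(tokens: List[int]) -> int:
    """Hypothetical stand-in for an LLM forward pass."""
    return tokens[-1] + 1


if __name__ == "__main__":
    prompt = list(range(500))             # a 500-token prompt
    reply_tokens = round(200 / 0.75)      # ~200 words at ~0.75 words per token
    output, passes = generate(toy_next_token, prompt, reply_tokens)
    print(len(output), passes)
```

Here a 200-word reply becomes 267 tokens and 267 forward passes. Production servers cache attention keys and values (the KV cache) so each later pass only processes the newest token, but they cannot skip the per-token loop itself; speculative decoding targets exactly this sequential cost.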

Why Inference Costs Add Up Faster Than Expected

The per-query cost is small. OpenAI priced GPT-4o at $2.50 per million input tokens and $10.00 per million output tokens as of 2025. At those rates, a single 500-token query costs approximately $0.00125 in input cost.

Multiplied across 100 million daily active users making five queries each at 500 tokens average, that becomes 250 billion input tokens per day, costing $625,000 per day in input alone at list API prices, or approximately $228 million per year. GPT-4's entire training run cost $78M in compute. The input-token bill alone for a single year of serving users at that scale is nearly three times larger.
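Per-million pricing makes this arithmetic easy to get wrong by orders of magnitude, so here is a minimal Python sketch that reproduces it and adds output tokens. Assumptions: list API prices ($2.50 and $10.00 per million input and output tokens, as quoted above) stand in for actual serving cost, which OpenAI does not publish, and each reply is about 270 output tokens, matching the 200-word example earlier. `Workload`, `daily_cost_usd` and `days_to_exceed` are illustrative names.

```python
from dataclasses import dataclass


@dataclass
class Workload:
    daily_users: float
    queries_per_user: float
    input_tokens: float    # per query
    output_tokens: float   # per query


def daily_cost_usd(w: Workload, in_price_per_m: float, out_price_per_m: float) -> float:
    """Daily API spend: queries x tokens x (price per million tokens / 1e6)."""
    queries = w.daily_users * w.queries_per_user
    return queries * (w.input_tokens * in_price_per_m
                      + w.output_tokens * out_price_per_m) / 1e6


def days_to_exceed(one_off_cost: float, daily_cost: float) -> float:
    """Days until cumulative daily spend matches a one-off cost."""
    return one_off_cost / daily_cost


if __name__ == "__main__":
    GPT4_TRAINING_USD = 78e6
    scenarios = {
        "input only": Workload(100e6, 5, 500, 0),
        "input + output": Workload(100e6, 5, 500, 270),  # assumed ~200-word replies
    }
    for label, w in scenarios.items():
        d = daily_cost_usd(w, 2.50, 10.00)
        print(f"{label}: ${d:,.0f}/day, ${d * 365 / 1e6:,.0f}M/year, "
              f"exceeds $78M after {days_to_exceed(GPT4_TRAINING_USD, d):.0f} days")
```

With these assumptions the input-only case gives $625,000 per day (about $228M a year, passing $78M after roughly 125 days), and adding 270 output tokens per query raises it to $1,975,000 per day, passing $78M in about 40 days.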

Key Inference Optimisation Techniques

- Quantisation: reducing weight precision from FP16 (16-bit) to INT8 (8-bit) or INT4 (4-bit). This halves or quarters the memory needed for weights, so larger models fit on smaller GPUs, at some accuracy cost; INT8 typically shows minimal accuracy degradation on most tasks.

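- Batching: grouping multiple inference requests and processing them simultaneously. Because the model's weights are read from memory once per step for the whole batch, a GPU handling 32 requests in parallel can cost up to roughly 32x less per request than handling them one at a time; in practice the saving is smaller, because large batches become compute-bound and add latency.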

- Model distillation: training a smaller student model to mimic a larger teacher model. The student then serves queries at a fraction of the teacher's cost. Compact production models such as GPT-4o-mini fill this cheaper tier, although OpenAI has not published how GPT-4o-mini was trained.

- Speculative decoding: using a smaller, faster model to draft several tokens ahead, which the large model then verifies in a single forward pass, reducing the number of sequential large-model passes needed.
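The quantisation technique in the list above can be illustrated with symmetric per-tensor INT8 quantisation, one of the simplest schemes: store one floating-point scale per tensor and round each weight to an 8-bit integer. This is a minimal Python sketch for illustration; production libraries typically use finer-grained scales (per channel or per group) and optimised kernels.

```python
from typing import List, Tuple


def quantize_int8(weights: List[float]) -> Tuple[List[int], float]:
    """Symmetric per-tensor INT8: map [-max|w|, +max|w|] onto [-127, 127]."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale


def dequantize_int8(q: List[int], scale: float) -> List[float]:
    """Recover approximate weights: integer x scale."""
    return [v * scale for v in q]


if __name__ == "__main__":
    weights = [0.42, -1.27, 0.003, 0.88, -0.05]   # illustrative FP values
    q, scale = quantize_int8(weights)
    restored = dequantize_int8(q, scale)
    max_err = max(abs(a - b) for a, b in zip(weights, restored))
    print(q)                                      # 1 byte each instead of 2 (FP16)
    print(f"max error {max_err:.4f} <= scale/2 = {scale / 2:.4f}")
```

The rounding error per weight is at most half the scale, which is why INT8 usually costs little accuracy; INT4 has only 16 possible values, so its error per weight is much larger for the same range.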

API prices fell 99.5% from GPT-4's original launch pricing to GPT-4o-mini (Stanford AI Index, 2025), a roughly 200-fold reduction. That collapse was driven largely by the optimisation techniques described above and by smaller models, not by hardware becoming 200x cheaper.
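As a check on the 99.5% figure, assume the comparison uses input-token list prices: $30 per million tokens for GPT-4 at launch and $0.15 per million for GPT-4o-mini. Then:

```latex
\frac{\$30 - \$0.15}{\$30} = 0.995 = 99.5\%,
\qquad
\frac{\$30}{\$0.15} = 200
```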

Training vs Inference Costs: The Real Numbers

Training costs are large, visible, and one-time. Inference costs are small per query but continuous, and they compound into the dominant share of AI infrastructure spend within months of a model's deployment.

Training Cost Benchmarks

| Model | Parameters | Training Compute Cost | Hardware | Source |
|---|---|---|---|---|
| GPT-4 | ~1.76T (estimated) | ~$78M | ~25,000 A100s | Epoch AI, Stanford AI Index 2025 |
| DeepSeek V3 | 671B | ~$5.6M (final training run) | H800 clusters | Stanford AI Index, 2025 |
| Llama 3 405B | 405B | Not disclosed | 16,000 H100s | Meta, 2024 |
| Gemini Ultra | Not disclosed | Not disclosed | TPU v4 pods | Google, 2024 |

The $78M versus $5.6M comparison between GPT-4 and DeepSeek V3 is significant. It demonstrates that architectural efficiency improvements, not just more compute, determine training cost. DeepSeek achieved performance comparable to GPT-4-class models at roughly 7% of the training compute cost, although DeepSeek's figure covers only the final training run and excludes prior research and experimentation.
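The $5.6M figure is itself simple arithmetic: GPU-hours multiplied by an hourly price. This Python sketch uses the numbers DeepSeek's V3 technical report gives (about 2.788 million H800 GPU-hours, priced at an assumed $2 per GPU-hour rental rate, on a 2,048-GPU cluster); the wall-clock figure assumes all those hours ran back to back on that cluster, which is an approximation.

```python
def gpu_hours_cost(gpu_hours: float, usd_per_gpu_hour: float) -> float:
    """Compute cost as GPU-hours x an assumed hourly rental price."""
    return gpu_hours * usd_per_gpu_hour


def wall_clock_days(gpu_hours: float, num_gpus: int) -> float:
    """Days of running time if all GPU-hours were spread evenly over num_gpus."""
    return gpu_hours / num_gpus / 24


if __name__ == "__main__":
    ds_hours = 2.788e6                         # H800 GPU-hours reported for DeepSeek V3
    ds_cost = gpu_hours_cost(ds_hours, 2.0)    # assumed $2 per GPU-hour
    gpt4_cost = 78e6                           # Epoch AI estimate cited above
    print(f"DeepSeek V3: ${ds_cost / 1e6:.2f}M, "
          f"{ds_cost / gpt4_cost:.1%} of GPT-4's compute cost")
    print(f"~{wall_clock_days(ds_hours, 2048):.0f} days on a 2,048-GPU cluster")
```

This prints about $5.58M (7.1% of $78M) and roughly 57 days of cluster time. The same formula applied to GPT-4 would need its GPU-hour count, which OpenAI has not disclosed.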

The Number Most Guides Don't Show

GPT-4o is priced at $2.50 per million input tokens. At 100 million daily active users making five queries of 500 tokens each, the daily input token volume is 250 billion tokens. At $2.50 per million, that is $625,000 per day in input costs alone, or $228 million per year. Add output tokens at $10.00 per million, assuming for illustration a 270-token (roughly 200-word) response per query, and the annual bill at that usage level rises to roughly $720 million. GPT-4's training cost $78M. On input tokens alone, cumulative inference spend passes that figure after about 125 days; with output tokens included, it does so in about 40 days.

This is why inference now accounts for 60 to 80% of total AI compute spending in production systems (Kanerika, 2025), and why the AI inference market is projected to reach $255 billion by 2030 from $106 billion in 2025 (Markets and Markets, 2025).
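The two market figures imply a compound annual growth rate (CAGR), the constant yearly rate that turns the 2025 value into the 2030 value. A minimal Python check, using only the numbers cited above:

```python
def cagr(start_value: float, end_value: float, years: float) -> float:
    """Compound annual growth rate: the constant yearly rate linking two values."""
    return (end_value / start_value) ** (1 / years) - 1


if __name__ == "__main__":
    rate = cagr(106e9, 255e9, years=2030 - 2025)
    print(f"Implied growth: {rate:.1%} per year")
```

It prints an implied growth rate of about 19.2% per year, consistent with the annual growth figure Markets and Markets gives for the same period.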

Inference Pricing Trends

According to Stanford's 2025 AI Index, API token prices fell 99.5% from GPT-4's original launch price to GPT-4o-mini. That dramatic reduction reflects optimisation at scale, not margin sacrifice. As models get more efficient and hardware improves, the per-token cost falls while total volume grows. The inference market expands even as the price per unit drops.

Hardware for Training vs Inference: Different Tools for Different Jobs

Training and inference do not have to run on the same GPU. The requirements are different enough that NVIDIA offers products aimed at each workload, though there is substantial overlap in practice.

Training requires maximum memory bandwidth and capacity to handle large parameter matrices and gradient tensors simultaneously. The NVIDIA H100 SXM (80GB HBM3) is the current standard for frontier model training, with 3.35 TB/s memory bandwidth enabling fast weight reads during backpropagation. Its NVLink interconnect allows tight coupling in multi-GPU nodes. The predecessor A100 (80GB HBM2e, 2 TB/s) remains widely deployed for training workloads that do not require the latest generation.

Inference has different priorities: throughput (requests per second), latency (time per request), and cost per query. For LLMs, generating each token requires reading the model's weights from memory, so memory bandwidth sets a floor on per-token latency at small batch sizes; larger batches spread that cost across many requests. The NVIDIA L40S uses GDDR6 memory rather than HBM, which is lower bandwidth but far cheaper per gigabyte, making it cost-effective for inference on models that fit comfortably within its 48GB. For serving large models, NVIDIA's H200 raises memory to 141GB of HBM3e, allowing larger models to run on a single GPU without the overhead of splitting them across several GPUs (model parallelism).
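A simple bound makes the bandwidth point concrete. If generating each token requires reading every weight from memory once, per-token latency at batch size 1 can be no lower than model size divided by memory bandwidth. This Python sketch assumes a hypothetical 8B-parameter model stored in FP16 and uses the bandwidth figures quoted above; it ignores compute time, KV-cache reads and software overhead, so real latency is higher.

```python
def min_ms_per_token(params: float, bytes_per_param: float, bandwidth_tb_s: float) -> float:
    """Lower bound on per-token decode time at batch size 1, in milliseconds."""
    bytes_read = params * bytes_per_param          # every weight read once per token
    return bytes_read / (bandwidth_tb_s * 1e12) * 1e3


if __name__ == "__main__":
    for gpu, bandwidth in (("A100 80GB", 2.0), ("H100 SXM", 3.35)):
        ms = min_ms_per_token(8e9, 2, bandwidth)   # hypothetical 8B model in FP16
        print(f"{gpu}: >= {ms:.1f} ms/token, <= {1000 / ms:.0f} tokens/s")
```

That gives at least 8.0 ms per token (at most 125 tokens per second) on the A100 and about 4.8 ms (about 209 tokens per second) on the H100. Batching helps because one pass over the weights serves every request in the batch.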

GPU Comparison: Training vs Inference Positioning

| GPU | Memory | Primary Role | Notes |
|---|---|---|---|
| NVIDIA A100 SXM | 80GB HBM2e | Training | Previous generation; still widely deployed in training clusters |
| NVIDIA H100 SXM | 80GB HBM3 | Training | Current standard for frontier model training |
| NVIDIA H200 SXM | 141GB HBM3e | Training and large-model inference | Larger memory fits bigger models without sharding |
| NVIDIA L40S | 48GB GDDR6 | Inference and graphics | Lower cost per GB than HBM; high throughput for batched inference |
| NVIDIA B200 | 192GB HBM3e | Training and inference | Blackwell architecture; next generation for both workloads |

For a detailed comparison of the H100 and A100 specifications, see our NVIDIA H100 vs A100 breakdown. For the full hardware landscape, our AI accelerator explained guide covers how GPUs, TPUs, and custom accelerators fit into the wider picture.

In practice, large-scale inference fleets at OpenAI, Anthropic, and Google largely run on the same high-end accelerators used for training (NVIDIA H100 and H200 GPUs, and in Google's case its own TPUs), partly because a unified fleet can be shifted between training and inference, which can outweigh the per-unit savings of cheaper inference cards such as the L40S. Smaller inference deployments run comfortably on 24GB GPUs such as the NVIDIA RTX 4090 or A10G: a 7B parameter model fits at 16-bit precision, while a 13B model needs 8-bit or 4-bit quantisation.
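Whether a model fits a 24GB card such as the RTX 4090 or A10G comes down to parameters multiplied by bytes per parameter. This Python sketch assumes 20% extra headroom for the KV cache, activations and runtime, which is a rough guess; actual needs depend on context length and the serving software.

```python
def weight_gb(params: float, bits: int) -> float:
    """Memory for the weights alone: parameters x bits / 8 bits per byte."""
    return params * bits / 8 / 1e9


def fits(params: float, bits: int, gpu_gb: float, headroom: float = 1.2) -> bool:
    """True if weights plus an assumed 20% headroom fit in gpu_gb."""
    return weight_gb(params, bits) * headroom <= gpu_gb


if __name__ == "__main__":
    for params in (7e9, 13e9):
        for bits in (16, 8, 4):
            print(f"{params / 1e9:.0f}B @ {bits}-bit: {weight_gb(params, bits):5.1f} GB, "
                  f"fits 24 GB: {fits(params, bits, 24.0)}")
```

Under these assumptions a 7B model fits at 16-bit (about 14 GB of weights), while a 13B model (about 26 GB at 16-bit) only fits once quantised to 8-bit or 4-bit.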

Inference-Time Compute: The New Axis of AI Performance

A third category of AI compute is emerging alongside training and standard inference: inference-time compute scaling, also called test-time compute. It changes the cost equation for both phases.

Standard inference generates a single response per query. Inference-time compute scaling spends more compute on each query: the model may generate a long chain of intermediate reasoning before answering, or generate several candidate responses, score them with a reward model or verifier, and return the best one. OpenAI's o1 and o3 models, and DeepSeek R1, use variants of this approach (notably long chains of reasoning) to achieve better performance on reasoning tasks without training a larger base model.
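The best-of-N variant described above can be sketched in Python as follows. It is a minimal illustration, not how o1, o3 or R1 work internally: `noisy_model` and `verifier` are hypothetical stand-ins for a sampled LLM and a reward model, and the long chain-of-reasoning form of test-time compute is not shown.

```python
import random
from typing import Callable, List


def best_of_n(generate: Callable[[], str], score: Callable[[str], float], n: int) -> str:
    """Sample n candidates and return the one the verifier scores highest.

    Compute grows linearly with n: n generations plus n verifier calls.
    """
    candidates: List[str] = [generate() for _ in range(n)]
    return max(candidates, key=score)


if __name__ == "__main__":
    rng = random.Random(0)
    target = 17 * 24

    def noisy_model() -> str:
        # Hypothetical sampled LLM: right only some of the time.
        return str(target + rng.choice([-10, -1, 0, 1, 10]))

    def verifier(answer: str) -> float:
        # Hypothetical verifier: an exact check works for arithmetic;
        # real reward models are learned and imperfect.
        return 1.0 if int(answer) == target else 0.0

    for n in (1, 8):
        print(n, best_of_n(noisy_model, verifier, n))
```

The cost scales linearly with `n`: eight candidates mean roughly eight times the generation compute per query, which is the trade-off discussed next.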

The trade-off is direct: more inference compute per query, in exchange for less training compute. Instead of training a model 10x longer to improve its mathematical reasoning, you run 10x more inference compute per maths query. For tasks where quality matters more than throughput (legal analysis, complex coding, scientific reasoning), this is an acceptable exchange. For high-volume, low-complexity tasks (autocomplete, translation), it is not.

The practical implication for infrastructure buyers is that inference-time compute scaling shifts cost from a one-time capital expenditure (training) to a recurring operational cost (inference). A model using inference-time compute might cost, for illustration, $0.05 per query rather than $0.001, but it can produce outputs that previously required a much more expensive training run to achieve.

This shift is one reason the AI inference market is projected to grow at 19.2% annually through 2030 (Markets and Markets, 2025). Inference is not just the deployment tail of a training investment. It is increasingly the primary site of AI capability improvement.

What This Means for Developers and Infrastructure Buyers

The training versus inference distinction has direct consequences for how companies plan AI infrastructure spend.

If you are building with pre-trained models via API (OpenAI, Anthropic, Google), your cost is 100% inference. You pay per token, per request, or per GPU-hour of inference compute. Training costs are invisible to you. Your optimisation levers are model selection (GPT-4o vs GPT-4o-mini), prompt length reduction, caching repeated queries, and batching requests where latency allows.
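Caching repeated queries is the simplest of these levers to implement. The Python sketch below is an exact-match cache keyed on the model name and prompt; `fake_api` is a hypothetical placeholder for a real provider client, and the cache is an unbounded in-memory dict, whereas a production version would add size limits and expiry. It only makes sense where returning a previous answer to an identical prompt is acceptable.

```python
from typing import Callable, Dict, Tuple


class ResponseCache:
    """Exact-match cache: an identical (model, prompt) pair is paid for only once."""

    def __init__(self, call_model: Callable[[str, str], str]) -> None:
        self._call_model = call_model
        self._store: Dict[Tuple[str, str], str] = {}
        self.hits = 0
        self.misses = 0

    def complete(self, model: str, prompt: str) -> str:
        key = (model, prompt)
        if key in self._store:
            self.hits += 1
            return self._store[key]
        self.misses += 1
        answer = self._call_model(model, prompt)   # the only billed call
        self._store[key] = answer
        return answer


if __name__ == "__main__":
    def fake_api(model: str, prompt: str) -> str:
        # Hypothetical stand-in for a paid API request.
        return f"[{model}] answer to: {prompt}"

    cache = ResponseCache(fake_api)
    for prompt in ("What is inference?", "What is training?", "What is inference?"):
        cache.complete("gpt-4o-mini", prompt)
    print(cache.hits, cache.misses)
```

Three requests with one repeat produce two billed calls and one cache hit.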

If you are fine-tuning an existing model on proprietary data, you have a modest training cost (fine-tuning a 7B model costs a fraction of training from scratch) and then ongoing inference cost. Once the model serves meaningful traffic, the inference cost can dominate within weeks.

If you are training frontier models from scratch, you are one of a small number of organisations globally (OpenAI, Anthropic, Google, Meta, Mistral, a few others). Your training budget is likely $10M to $100M+ per run. Your inference infrastructure is a separate, ongoing investment that will exceed training cost within the first year.

For organisations evaluating cloud GPU providers for either workload, see our guide to cloud GPU providers compared. For the hyperscale facilities where training clusters run, see our hyperscale data center explained article.

The simplest framing for budget planning: treat training as a one-time capital event and inference as a variable operational cost that scales with usage. As your product grows, inference cost grows with it. Training cost, largely, does not.

Frequently Asked Questions

What is the difference between AI training and AI inference?

AI training is the process of building a machine learning model by exposing it to a large dataset and adjusting its internal parameters (weights) through backpropagation to minimise prediction error. It runs once per model version, takes days to months, and requires large GPU clusters.

AI inference is using the finished, trained model to generate predictions or responses on new inputs. The model's weights are frozen during inference. No learning occurs. Inference runs continuously in production, handling millions of queries per day, with strict latency requirements measured in milliseconds.

How much does it cost to train an AI model like GPT-4?

GPT-4 cost approximately $78 million in compute alone, running across an estimated 25,000 NVIDIA A100 GPUs for several months (Epoch AI, Stanford AI Index 2025). OpenAI CEO Sam Altman has said the overall cost of training GPT-4 was more than $100 million.

However, efficiency improvements have dramatically reduced training costs for newer models. DeepSeek V3, a model of comparable capability, cost approximately $5.6 million in compute for its final training run (Stanford AI Index, 2025), demonstrating that architectural innovation can achieve similar results at roughly 7% of the compute spend.

Smaller models cost proportionally less. Fine-tuning an existing 7B parameter open-source model on proprietary data can cost under $1,000 in GPU time.

Does inference or training cost more in total?

Inference costs more in total for any model that reaches significant scale. Training is a one-time cost per model version. Inference runs continuously for months or years at scale.

GPT-4 training cost approximately $78M in compute. ChatGPT's cumulative inference costs exceeded that total within 2024 alone. Across production AI systems, inference now accounts for 60-80% of compute spending (Kanerika, 2025).

At GPT-4o's pricing of $2.50 per million input tokens, a deployment serving 100 million daily users making five 500-token queries each generates a daily inference bill of approximately $625,000 in input costs. At that rate, cumulative input spend alone exceeds GPT-4's $78M training cost in about four months (roughly 125 days).

What hardware is used for AI training vs inference?

Training uses high-memory, high-bandwidth GPUs designed for parallel matrix operations and gradient synchronisation across large clusters. NVIDIA H100 SXM (80GB HBM3) and A100 SXM (80GB HBM2e) are the standard training GPUs. Multiple GPUs must be tightly connected, via NVLink within a server and InfiniBand or similar fabrics at 400+ Gbps between servers.

Inference uses a broader range of hardware depending on model size and throughput requirements. Large-scale production inference (serving millions of users) typically uses H100 or H200 GPUs for LLMs. Smaller models run on L40S, A10G, or consumer GPUs. Edge inference (on-device AI) uses purpose-built chips like Apple's Neural Engine, Qualcomm's NPU, or NVIDIA Jetson.

What is inference-time compute scaling?

Inference-time compute scaling (also called test-time compute) spends more compute per query to get a better answer: the model reasons through a long chain of intermediate steps, or generates multiple candidate responses, evaluates them using a reward model or verifier, and returns the best one. Instead of producing one quick response per query, it does substantially more work.

OpenAI's o1 and o3 models use this approach to improve performance on complex reasoning tasks without requiring a larger base model. DeepSeek R1 uses a similar chain-of-thought reasoning approach. The trade-off is direct: higher cost per query in exchange for better answer quality on difficult problems.

This technique shifts compute investment from training (building a better model) to inference (thinking harder per query), which is one reason the inference market is growing faster than the training market.

What is a token in AI inference, and how does it relate to cost?

A token is the basic unit of text that an LLM processes. Roughly speaking, one token equals about four characters or three-quarters of a word in English. "Hello world" is two tokens. A 500-word document is approximately 670 tokens.

AI API pricing is charged per token, separately for input (prompt) and output (response). OpenAI prices GPT-4o at $2.50 per million input tokens and $10.00 per million output tokens (2025). Output tokens are more expensive because generating each requires a separate forward pass, while input tokens are processed in parallel.

Understanding token pricing is essential for estimating inference cost: multiply your average prompt length in tokens by the input rate, and your average response length by the output rate.
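The formula above, as a minimal Python sketch. `estimate_tokens` uses the rough four-characters-per-token rule, an approximation; exact counts require the model's own tokenizer. The default prices are the GPT-4o list prices quoted above.

```python
import math


def estimate_tokens(text: str) -> int:
    """Rough English heuristic: ~4 characters per token (tokenizer-dependent)."""
    return max(1, math.ceil(len(text) / 4))


def query_cost_usd(input_tokens: int, output_tokens: int,
                   in_price_per_m: float = 2.50, out_price_per_m: float = 10.00) -> float:
    """Per-query API cost: tokens x price per million tokens, input and output separately."""
    return (input_tokens * in_price_per_m + output_tokens * out_price_per_m) / 1e6


if __name__ == "__main__":
    prompt = "x" * 2000                            # placeholder 2,000-character prompt
    n_in = estimate_tokens(prompt)                 # ~500 tokens
    print(f"{n_in} input tokens, ${query_cost_usd(n_in, 270):.5f} per query")
```

A 500-token prompt with a 270-token reply comes to about $0.00395 at these rates.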

Can inference be run on a consumer GPU or laptop?

Yes, with the right model size and quantisation. A model in the 7-8B parameter range, such as Llama 3 8B, quantised to INT4 precision, fits in approximately 4-5GB of GPU memory and runs at acceptable speeds on a modern consumer GPU (NVIDIA RTX 3080 or 4060) or even on Apple Silicon Macs with unified memory.

Quantisation reduces model precision from 16-bit floats to 8-bit or 4-bit integers, cutting memory requirements by 50-75% with modest quality trade-offs on most tasks. Tools like Ollama and llama.cpp make running quantised models locally accessible with little configuration.

Frontier models like GPT-4 (an estimated 1.76T parameters) cannot practically run on consumer hardware. They require data center GPU clusters that pool hundreds of gigabytes to terabytes of high-bandwidth memory across many GPUs.

What percentage of AI compute spending goes to inference vs training?

As of 2024 to 2025, inference accounts for 60 to 80% of AI compute spending in production systems, with the balance going to training and fine-tuning (Kanerika, 2025). Forecasts for 2026 project inference rising to 70-90% of total compute spend as agentic AI workflows, which run multiple inference steps per task, become more prevalent.

This ratio has shifted significantly from the early deep learning era (2015-2020), when training was the primary cost centre for AI teams. The transition to inference dominance reflects the maturation of AI from research to deployed products serving hundreds of millions of users.
