Hyperscale Data Center: What It Is, How It Works, and What It Costs

Key Takeaways

1. A hyperscale data center requires at least 5,000 servers, 10,000 sq ft of floor space, and 40 MW of power. Approximately 800 exist worldwide, with the US holding the largest share.

2. Standard hyperscale construction costs $10.7M per MW in 2025, rising to $11.3M in 2026. AI-optimized builds exceed $20M per MW, putting a 100 MW AI facility at $2B or more before servers are installed.

3. Every major frontier AI model, including GPT, Gemini, and Llama, was trained on hyperscale infrastructure. Microsoft alone committed $80B to AI-enabled hyperscale builds in fiscal year 2025.

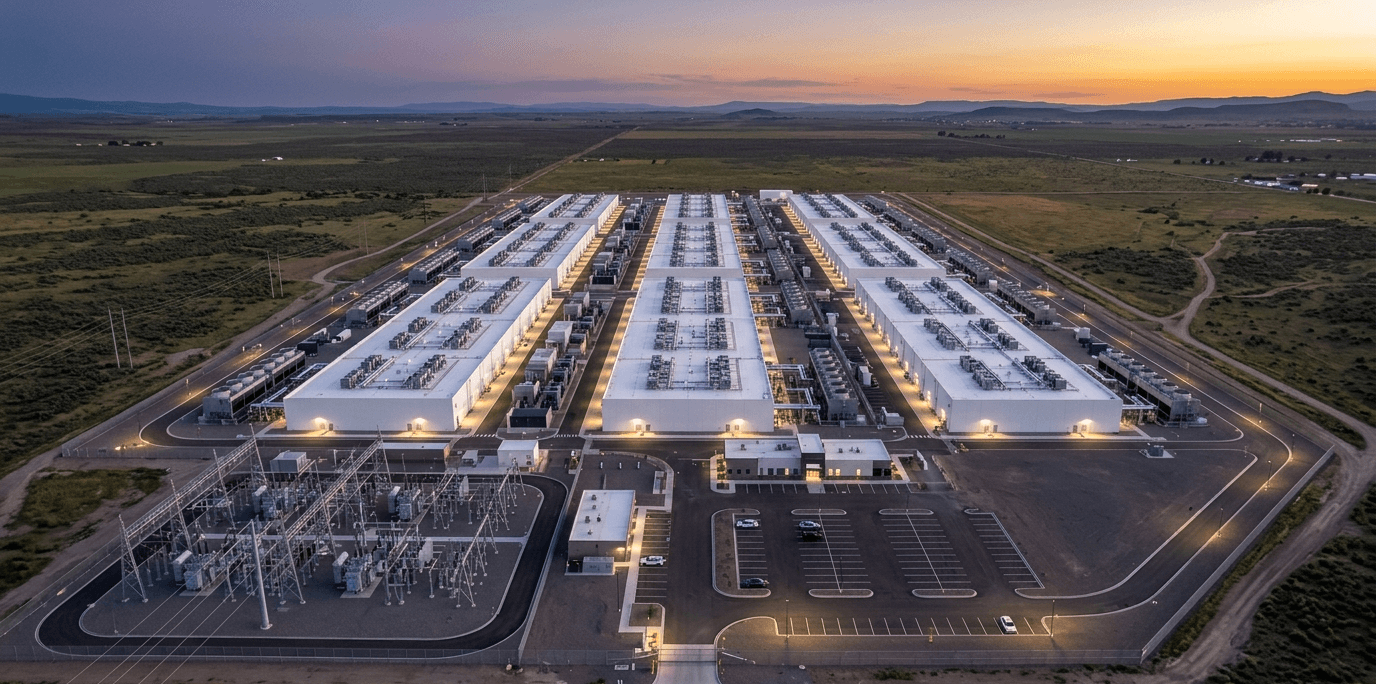

A hyperscale data center is a facility with at least 5,000 servers, 10,000 square feet of floor space, and 40 megawatts of power capacity, built to scale compute resources on demand rather than running at fixed capacity. Standard data centers are sized for a known workload. Hyperscale facilities are designed to grow continuously, adding tens of thousands of servers without rebuilding the facility around them.

The scale involved is difficult to picture. Microsoft holds more than 5 gigawatts of data center capacity globally as of early 2025, with plans to add 1.5 GW more. At the benchmark construction cost of $10.7M per megawatt (Turner & Townsend, 2025), that expansion alone represents a build commitment of roughly $16 billion for new capacity, before a single server is installed.

This article explains what makes a data center hyperscale, how these facilities are built and operated, which companies run the largest ones, and what it actually costs to build one in 2025 and 2026. It also covers why AI model training, specifically, requires hyperscale infrastructure and how these campuses are evolving toward gigawatt-scale builds that did not exist five years ago.

In This Article

1. What Is a Hyperscale Data Center?

2. How a Hyperscale Facility Is Built and Operated

3. The Major Hyperscale Operators and Their Scale

4. Construction Costs in 2025 and 2026

5. Why AI Training Requires Hyperscale Infrastructure

6. Hyperscale vs. Colocation vs. Enterprise: Key Differences

7. AI Campuses and the Shift to Gigawatt-Scale Builds

What Is a Hyperscale Data Center?

A hyperscale data center meets three thresholds: at least 5,000 servers, at least 10,000 square feet of white floor space, and at least 40 MW of power capacity. Meeting all three is what separates hyperscale from large enterprise or colocation facilities.

The term was coined to describe the infrastructure built by companies like Google and Amazon to serve hundreds of millions of users simultaneously. A standard enterprise data center is designed for one organisation's known workload, sized to peak demand with a buffer. A hyperscale facility is designed around the assumption that demand will keep growing, and the building must accommodate that growth without major reconstruction.

Scalability is the defining characteristic, not just size. A hyperscale facility uses modular designs, often called pods or blocks, where each unit adds a fixed increment of compute, power, and cooling capacity. When a new pod is needed, it is added to the campus without disrupting existing operations. This approach makes hyperscale fundamentally different from a conventional data center, where adding 20% more capacity typically requires significant infrastructure work.

The largest hyperscale campuses today hold millions of servers across multiple buildings on a single site, with dedicated substations, cooling towers, and in some cases on-site power generation. The threshold numbers (5,000 servers, 40 MW) represent the entry point for the category, not the norm.

The Three Minimum Thresholds

| Threshold | Minimum | What It Implies |
|---|---|---|
| Server count | 5,000 | Enough to serve millions of concurrent users |
| Floor space | 10,000 sq ft | Multiple server halls with room to expand |
| Power capacity | 40 MW | Roughly equivalent to powering 30,000-40,000 homes |

Approximately 800 hyperscale data centers operate worldwide as of 2025, with the United States holding the largest national concentration. The US had 5,381 total data centers as of March 2024, encompassing hyperscale, colocation, and enterprise facilities combined.
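The three minimum thresholds can be expressed as a simple check. This is a minimal sketch: the `Facility` record and its field names are hypothetical, and only the threshold values come from this article.

```python
from dataclasses import dataclass

# Minimum thresholds quoted in this article.
MIN_SERVERS = 5_000
MIN_FLOOR_SQ_FT = 10_000
MIN_POWER_MW = 40.0


@dataclass(frozen=True)
class Facility:
    """Hypothetical record for one site; field names are illustrative."""
    servers: int
    floor_sq_ft: float
    power_mw: float


def is_hyperscale(site: Facility) -> bool:
    """Return True only when all three minimums are met."""
    return (
        site.servers >= MIN_SERVERS
        and site.floor_sq_ft >= MIN_FLOOR_SQ_FT
        and site.power_mw >= MIN_POWER_MW
    )


if __name__ == "__main__":
    entry_level = Facility(servers=5_000, floor_sq_ft=10_000, power_mw=40.0)
    underpowered = Facility(servers=20_000, floor_sq_ft=50_000, power_mw=25.0)
    print(is_hyperscale(entry_level))
    print(is_hyperscale(underpowered))
```

A site that clears two thresholds but misses the third, such as the second example with only 25 MW, does not qualify.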

How a Hyperscale Facility Is Built and Operated

Hyperscale data centers are built around four interconnected systems: power distribution, cooling, server infrastructure, and networking. Each system is designed for redundancy and rapid expansion.

Power Infrastructure

Power arrives from the utility grid at high voltage (typically 115 kV to 230 kV), is stepped down through on-site substations, and is distributed to individual server racks. Hyperscale operators maintain N+1 or 2N redundancy on power systems: N+1 adds one spare component beyond the N needed to carry the load, while 2N duplicates the entire power path, so that if one path fails, another takes the full load without interruption. Backup diesel generators provide protection against grid outages, with fuel stored on site for 24-72 hours of full-load operation.
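The difference between redundancy schemes shows up in how many units must be installed. The sketch below is illustrative only: the function name and the 2.5 MW unit size are assumptions, not figures from any operator.

```python
import math


def units_required(load_mw: float, unit_capacity_mw: float, scheme: str) -> int:
    """Count the units (generators, UPS modules) needed under a redundancy scheme.

    N   = just enough units to carry the load
    N+1 = N plus one spare unit
    2N  = two complete, independent sets of N
    """
    n = math.ceil(load_mw / unit_capacity_mw)
    if scheme == "N":
        return n
    if scheme == "N+1":
        return n + 1
    if scheme == "2N":
        return 2 * n
    raise ValueError(f"unknown redundancy scheme: {scheme!r}")


if __name__ == "__main__":
    # Assumed example: a 40 MW load served by 2.5 MW units.
    for scheme in ("N", "N+1", "2N"):
        print(scheme, units_required(40.0, 2.5, scheme))
```

Under these assumptions, N+1 adds a single unit while 2N doubles the installed equipment, so 2N costs far more for the same load.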

Power Usage Effectiveness (PUE) is the ratio of total facility power to the power consumed by IT equipment, so it shows how much of the incoming power actually reaches the servers. A PUE of 1.0 is theoretical perfection: every watt goes to the servers. Legacy data centers commonly run PUE of 1.5 to 2.0, meaning one third to one half of their power goes to overhead. Hyperscale operators use economies of scale and custom cooling designs to achieve PUE of 1.1 to 1.2 across large fleets. Google reported a trailing 12-month average PUE of 1.10 across its global data center fleet (Google Sustainability Report, 2023), meaning overhead adds only 10% on top of the IT load (about 9% of total facility power), rather than the 33% to 50% of total power common in older facilities.
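Overhead can be quoted relative to the IT load or relative to total facility power, and the two give different percentages. The sketch below works through the arithmetic; the function names are illustrative.

```python
def pue(total_facility_kw: float, it_equipment_kw: float) -> float:
    """PUE = total facility power divided by IT equipment power."""
    return total_facility_kw / it_equipment_kw


def overhead_share_of_total(pue_value: float) -> float:
    """Fraction of total facility power that does not reach IT equipment."""
    return 1.0 - 1.0 / pue_value


def overhead_relative_to_it(pue_value: float) -> float:
    """Overhead power expressed as a fraction of the IT load."""
    return pue_value - 1.0


if __name__ == "__main__":
    for value in (1.10, 1.5, 2.0):
        print(
            f"PUE {value:.2f}: "
            f"{overhead_share_of_total(value):.1%} of total power, "
            f"{overhead_relative_to_it(value):.0%} on top of IT load"
        )
```

At a PUE of 2.0, overhead equals the IT load and therefore consumes half of total facility power. At 1.10, overhead is 10% of the IT load, or roughly 9% of the total.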

Cooling Systems

Cooling is the single largest overhead cost in a data center. Hyperscale operators use several approaches depending on climate and workload density:

- Air cooling with precision computer room air conditioning (CRAC) units: standard for traditional server racks

- Evaporative cooling using outside air in cooler climates (Google Oregon, Microsoft Wyoming): reduces mechanical cooling cost substantially

- Direct liquid cooling (DLC): circulates chilled liquid directly to server components, required for high-density GPU racks drawing 30-60 kW per rack

- Immersion cooling: servers submerged in non-conductive fluid, used for the densest AI workloads

AI GPU racks running NVIDIA H100 or H200 accelerators can draw up to 10-20x the power of standard server racks. That density makes air cooling insufficient for AI-specific pods, which is why liquid cooling infrastructure accounts for a significant share of the cost premium in AI-optimized facilities.

Networking

Hyperscale facilities require ultra-low latency, high-bandwidth networking between racks, pods, and buildings on the same campus. During AI model training, thousands of GPUs must synchronise gradient updates continuously. Even microsecond-level latency differences cause training inefficiency. This requirement drives investment in 400 Gbps and 800 Gbps network fabrics within campuses, with 93% of hyperscale operators targeting 40 Gbps or faster connections for their core fabric (Uptime Institute, 2025).
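The cost of synchronising gradients can be approximated with the standard latency-bandwidth model for a ring all-reduce. This is a rough sketch, not a description of any operator's fabric. The function name, the 16-GPU group, the 10 GB gradient payload, and the 2-microsecond hop latency are all assumptions, and production training stacks use hierarchical collectives and overlap communication with compute.

```python
def ring_allreduce_seconds(
    num_gpus: int,
    payload_bytes: float,
    link_gbps: float,
    hop_latency_s: float,
) -> float:
    """Estimate one ring all-reduce using the latency-bandwidth model.

    A ring all-reduce takes 2 * (N - 1) steps. In each step every GPU
    sends one chunk of size payload / N to its neighbour.
    """
    if num_gpus < 2:
        return 0.0
    steps = 2 * (num_gpus - 1)
    chunk_bytes = payload_bytes / num_gpus
    link_bytes_per_s = link_gbps * 1e9 / 8  # gigabits/s -> bytes/s
    return steps * (hop_latency_s + chunk_bytes / link_bytes_per_s)


if __name__ == "__main__":
    # Assumed workload: 16 GPUs exchanging 10 GB of gradients per step.
    for gbps in (400, 800):
        t = ring_allreduce_seconds(16, 10e9, gbps, hop_latency_s=2e-6)
        print(f"{gbps} Gbps: {t * 1000:.1f} ms per all-reduce")
```

In this model the bandwidth term dominates for large payloads, and the per-hop latency term grows with the number of GPUs in the ring. Both link speed and latency therefore matter at cluster scale.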

The Major Hyperscale Operators and Their Scale

Five companies account for the majority of hyperscale capacity worldwide: Amazon Web Services, Microsoft Azure, Google Cloud, Meta, and Alibaba Cloud. Each operates hundreds of individual facilities across multiple continents.

| Operator | Primary Use | Key 2025 Commitment | Notes |
|---|---|---|---|
| Amazon Web Services | Cloud services, AI training | Part of Amazon's multi-year $150B+ capex commitment | Largest public cloud by revenue |
| Microsoft Azure | Cloud services, AI (OpenAI partnership) | $80B in FY2025 alone | Largest single-year announcement |
| Google Cloud | Cloud services, AI (Gemini, TPUs) | $75B planned capex for 2025 | Includes custom TPU infrastructure |
| Meta | Internal platforms, Llama AI training | Multi-campus builds including Hyperion (Louisiana) | No external cloud revenue; all internal |
| Alibaba Cloud | Cloud services, Asia-Pacific | Ongoing Southeast Asia expansion | Largest hyperscaler outside US and Europe |

Microsoft's commitment stands out for its scale and timeline. On January 3, 2025, Microsoft President Brad Smith announced the company was on track to invest approximately $80 billion in AI-enabled data centers in fiscal year 2025 (ending June 2025), with more than half directed to the United States.

"This infrastructure is the foundation on which AI innovation is being built." (Brad Smith, Microsoft President, January 2025)

Google followed with its own announcement of $75 billion in planned capital expenditure for 2025, a figure that includes both cloud data center expansion and its custom Tensor Processing Unit (TPU) hardware programs.

The US concentration of hyperscale investment follows available land, power infrastructure, and proximity to major internet exchange points. Northern Virginia (known as Data Center Alley) has historically hosted the densest concentration, though power constraints are pushing new builds to the Southeast, Midwest, and Pacific Northwest.

Construction Costs in 2025 and 2026

Building a hyperscale data center has become substantially more expensive over the past three years. The average cost per square foot surpassed $1,000 for the first time in 2025, reaching $1,033 by year-end (ConstructConnect, 2025), compared to $535 in 2023. That near-doubling reflects tight supply chains for electrical gear, high demand for specialised construction crews, and the premium required for AI-ready power and cooling infrastructure.

Benchmark Construction Costs

| Facility Type | Cost per MW (2025) | Cost per MW (2026 forecast) | Notes |
|---|---|---|---|
| Standard hyperscale | $10.7M | $11.3M | Shell-and-core, non-AI workloads |
| AI-optimized hyperscale | $20M+ | ~$22M+ | Liquid cooling, high-voltage distribution, dense racks |
| AI campus (GW scale) | $45-55B per GW ($45-55M per MW) | Consistent with above | Full ecosystem: power, land, connectivity, equipment |

Source: Turner & Townsend Data Centre Construction Cost Index, 2025-2026.

The Number Most Guides Don't Show

At $10.7M to $11.3M per MW, a 100 MW standard hyperscale facility costs between $1.07B and $1.13B for the shell-and-core structure. Add full electrical fit-out, cooling systems, and fire suppression, and total project cost reaches $1.6B to $2.2B before a single server enters the building.

For AI-optimized facilities at $20M+ per MW, that same 100 MW footprint costs $2B or more in construction alone. The difference comes almost entirely from liquid cooling infrastructure and the higher-voltage power distribution required for GPU racks drawing 30-100 kW per rack, versus 5-10 kW for standard compute racks.

Microsoft's $80B commitment for fiscal year 2025 translates, at the AI-optimized benchmark of $20M per MW, to enough physical capacity for approximately 4,000 MW (4 GW) of new AI-ready data center space, assuming the entire sum went to construction. That figure is of the same order as the more than 5 GW of capacity the company already holds.
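The per-megawatt arithmetic in this section can be reproduced in a few lines. This is a minimal sketch using only the benchmark figures quoted above; the function and constant names are illustrative, and the AI-optimized rate is the "$20M+" floor, so real costs may be higher.

```python
# Benchmark rates quoted in this article (USD per MW).
STANDARD_USD_PER_MW = {2025: 10.7e6, 2026: 11.3e6}
AI_OPTIMIZED_USD_PER_MW = 20e6  # floor of the "$20M+" range


def construction_cost_usd(capacity_mw: float, usd_per_mw: float) -> float:
    """Construction cost for a facility of the given capacity."""
    return capacity_mw * usd_per_mw


def capacity_for_budget_mw(budget_usd: float, usd_per_mw: float) -> float:
    """Capacity a budget buys if it is spent entirely on construction."""
    return budget_usd / usd_per_mw


if __name__ == "__main__":
    for year, rate in STANDARD_USD_PER_MW.items():
        cost = construction_cost_usd(100, rate)
        print(f"100 MW standard, {year}: ${cost / 1e9:.2f}B")
    ai_cost = construction_cost_usd(100, AI_OPTIMIZED_USD_PER_MW)
    print(f"100 MW AI-optimized: ${ai_cost / 1e9:.2f}B or more")
    mw = capacity_for_budget_mw(80e9, AI_OPTIMIZED_USD_PER_MW)
    print(f"$80B at $20M per MW: {mw:,.0f} MW")
```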

The industry as a whole reflected this pace: US data center construction starts reached $77.7B in 2025, up 190% from the prior year (ConstructConnect, 2025). In October 2025 alone, US companies broke ground on 23 data center sites totalling nearly $11 billion, compared to $2.9 billion in October 2024.

Why AI Training Requires Hyperscale Infrastructure

Training a frontier AI model requires a scale of compute that only hyperscale infrastructure can provide. This is not about raw server count. It is about having thousands of high-performance GPUs or TPUs connected by a fast enough network that they can work together as a single computational unit.

GPT-4 was trained on an estimated 25,000 NVIDIA A100 GPUs running continuously for months. Gemini Ultra required a custom TPU pod architecture at Google that exists in no other context. Llama 3 (405B parameters) was trained on Meta's own hyperscale infrastructure using 16,000 H100 GPUs. None of these training runs were possible on anything smaller than a purpose-built hyperscale facility, because the inter-GPU communication during training requires network latency measured in microseconds across thousands of devices.

Inference, which is the process of answering a user query using a trained model, can run at smaller scale. Depending on model size and batching, a single H100 GPU can handle hundreds of simultaneous inference requests. But training a new frontier model is a different problem entirely. It requires:

- Thousands of co-located GPUs connected by high-bandwidth, low-latency networking (InfiniBand or RoCE at 400+ Gbps)

- Uninterrupted power delivery for weeks or months, with zero tolerance for outages mid-training

- Cooling systems that handle 30-100 kW per rack across thousands of racks simultaneously

- Software infrastructure to checkpoint, restart, and manage a training run across hardware failures

When you use ChatGPT or Claude, your request travels to one or a small number of GPUs in an inference cluster. That cluster could, in principle, sit in a colocation facility. The model those GPUs are running, however, was created in a hyperscale training facility that no colocation operator currently builds.

This distinction explains why Meta is constructing the Hyperion campus in Louisiana at a scale that analysts estimate at several gigawatts of AI compute capacity. It is not primarily to serve more users. It is to train the next generation of Llama models at a scale that requires an entire power plant's worth of electricity.

For a technical breakdown of the GPUs used inside these facilities, see our analysis of NVIDIA H100 specifications and pricing.

Hyperscale vs. Colocation vs. Enterprise: Key Differences

The three main data center types serve different purposes and different customers. The differences matter when evaluating where to run workloads.

| Factor | Hyperscale | Colocation | Enterprise |
|---|---|---|---|
| Who builds it | The operator (AWS, Google, Meta) | A third-party provider (Equinix, Digital Realty) | The company that uses it |
| Who owns the servers | The operator | The customer (renting space and power) | The company |
| Typical customers | Internal cloud and AI workloads | Enterprises outsourcing IT | One organisation's internal IT |
| Minimum size | 40 MW, 10,000 sq ft | 1-50 MW typical | Varies widely |
| Scalability | Modular pods added on campus | Rent more space and cages | Limited by existing build |
| Power per rack | 30-100 kW (AI GPU racks) | 5-15 kW typical | 3-10 kW typical |
| Cost to customer | Priced as cloud compute ($/GPU-hour) | Priced as space and power ($/kW/month) | Capex and opex of owning hardware |

A company that needs 100 servers has no reason to build a hyperscale facility. A startup running AI inference can use colocation or rent GPU time from a cloud provider. Hyperscale investment is what happens when AWS needs 100,000 additional servers in a new region, or when Meta needs a training cluster that does not yet exist anywhere in the world.

For a detailed comparison of colocation pricing, power options, and tier ratings, see our article on colocation data centers explained.

The practical implication for most organisations: they interact with hyperscale infrastructure every time they use AWS, Azure, Google Cloud, or any SaaS product built on those platforms. They do not build hyperscale themselves. Hyperscale is infrastructure at a cost and scale that only makes sense for companies serving hundreds of millions of users or training the world's most compute-intensive models.

AI Campuses and the Shift to Gigawatt-Scale Builds

The next phase of hyperscale development has a different name: the AI campus. Where a hyperscale data center is measured in megawatts (40 MW to 500 MW), an AI campus is planned in gigawatts (1 GW to 5 GW), with multiple buildings, a dedicated power substation, and in some cases on-site generation from solar, gas, or nuclear sources.

At the campus scale, the economics shift. Fully built-out AI campuses with power, land, connectivity, and equipment cost $45B to $55B per gigawatt (Turner & Townsend, 2025). A 5 GW campus, at the upper end of that range, represents a development value approaching $275B over its build-out period.
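The campus-level range can be computed the same way. This sketch uses only the $45B to $55B per gigawatt range cited above; the function names are illustrative.

```python
# Full-campus range quoted in this article (USD per GW).
CAMPUS_USD_PER_GW = (45e9, 55e9)


def campus_cost_range_usd(capacity_gw: float) -> tuple[float, float]:
    """Low and high development value for a campus of the given size."""
    low, high = CAMPUS_USD_PER_GW
    return capacity_gw * low, capacity_gw * high


def per_mw_equivalent_usd(usd_per_gw: float) -> float:
    """Convert a per-GW figure to per-MW (1 GW = 1,000 MW)."""
    return usd_per_gw / 1000


if __name__ == "__main__":
    for gw in (1, 5):
        low, high = campus_cost_range_usd(gw)
        print(f"{gw} GW campus: ${low / 1e9:.0f}B to ${high / 1e9:.0f}B")
    low_mw, high_mw = (per_mw_equivalent_usd(v) for v in CAMPUS_USD_PER_GW)
    print(f"Per MW: ${low_mw / 1e6:.0f}M to ${high_mw / 1e6:.0f}M")
```

The per-MW equivalent of $45M to $55M sits well above the $20M+ construction benchmark because the campus figure also covers land, power, connectivity, and equipment.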

"The infrastructure investment cycle in AI is unlike anything we have seen before. Hyperscale operators are committing capital at a pace that would have seemed implausible three years ago." (Turner & Townsend, Data Centre Construction Cost Index, 2026)

The global investment trajectory supports this scale. Companies worldwide are expected to invest nearly $7 trillion cumulatively in data center infrastructure through 2030, with more than 40% directed to the United States. More than $4 trillion of that total is allocated to computing hardware rather than real estate and power.

Over 60 data center projects with a combined value exceeding $50 billion were expected to break ground in the first half of 2026, a pace that represents a multi-year acceleration driven almost entirely by demand for AI training and inference capacity. In October 2025 alone, US companies invested nearly $11 billion in 23 new data center construction sites (ConstructConnect, 2025).

The result is that hyperscale is no longer the ceiling for data center scale. It is, increasingly, the floor. The facilities being planned today are categorically larger than what the term described when it was coined a decade ago. For context on the hyperscaler companies making these investments and how their cloud businesses are structured, see our hyperscalers explained guide.

Frequently Asked Questions

What is the minimum size of a hyperscale data center?

The minimum threshold for a hyperscale data center is 5,000 servers, 10,000 square feet of floor space, and 40 megawatts of power capacity. All three criteria must be met. Facilities meeting only one or two thresholds are classified as large enterprise or regional data centers, not hyperscale.

In practice, most hyperscale campuses built since 2022 far exceed these minimums. A 40 MW entry-level hyperscale facility is now considered small. New AI-focused builds typically plan for 100 MW to 500 MW per campus, with the largest projects targeting multiple gigawatts.

How much does it cost to build a hyperscale data center in 2025?

Building a standard hyperscale data center costs approximately $10.7 million per megawatt in 2025, rising to $11.3 million per MW in 2026 (Turner & Townsend). A 100 MW facility costs $1.07B to $1.13B for the shell-and-core structure, and $1.6B to $2.2B fully fitted out with electrical, cooling, and fire suppression systems.

AI-optimized facilities cost $20 million per MW or more, because liquid cooling infrastructure and high-voltage distribution for GPU racks substantially increase cost per unit. A 100 MW AI-ready facility costs $2B or more in construction before servers are purchased.

For full campus builds at gigawatt scale, analyst estimates range from $45B to $55B per gigawatt (Turner & Townsend, 2025), encompassing power, land, connectivity, and equipment.

How many hyperscale data centers are there worldwide?

Approximately 800 hyperscale data centers operate worldwide as of 2025. The United States holds the largest national concentration, with Northern Virginia historically the densest single market globally. Other major hyperscale markets include Ireland, Singapore, Germany, Japan, and Australia.

The count is growing rapidly. US data center construction starts reached $77.7 billion in 2025, up 190% from the prior year, with more than 60 major projects expected to break ground in the first half of 2026 alone (ConstructConnect, 2025).

What is PUE and what is a good PUE for a hyperscale data center?

PUE stands for Power Usage Effectiveness. It measures the ratio of total facility power to the power consumed by IT equipment. A PUE of 1.0 is theoretical perfection: every watt entering the facility reaches the servers. A PUE of 2.0 means half the power is lost to cooling, lighting, and other overhead.

Legacy data centers commonly run PUE of 1.5 to 2.0. Hyperscale operators target 1.1 to 1.2 through economies of scale and custom cooling designs. Google reported a trailing 12-month global fleet average PUE of 1.10 (Google Sustainability Report, 2023), meaning overhead adds only 10% on top of the IT load (about 9% of total facility power), rather than the 33% to 50% of total power common in older facilities.

What is the difference between a hyperscale and a colocation data center?

A hyperscale data center is built and operated by a single company (like AWS or Meta) for its own workloads. The operator owns the building, the power infrastructure, and the servers. Colocation data centers are built by a third party (like Equinix or Digital Realty) and rented to multiple customers, who bring their own servers and pay for space, power, and connectivity.

Hyperscale facilities are not available for rent. Access to hyperscale compute is sold as cloud services (virtual machines, GPU instances) rather than as physical space.

Why do AI models need to be trained on hyperscale infrastructure?

Training a frontier AI model requires thousands of GPUs working together as a single computational unit. GPT-4 was estimated to use approximately 25,000 NVIDIA A100 GPUs running continuously for months. Llama 3 (405B parameters) used 16,000 H100 GPUs at Meta's own facilities.

This scale requires three things that only hyperscale facilities provide: co-located GPU clusters connected by ultra-low-latency networking (InfiniBand or RoCE at 400+ Gbps), uninterrupted power delivery for weeks or months, and cooling systems handling 30-100 kW per rack across thousands of racks. Inference (serving queries with a trained model) can run on smaller infrastructure. Training a new frontier model cannot.

Where are most hyperscale data centers located?

The United States has the highest concentration of hyperscale data centers globally. As of March 2024, the US had 5,381 total data centers across all types, with Northern Virginia (Data Center Alley) historically the densest single market in the world.

Power availability is the primary constraint on location. As Northern Virginia reaches capacity limits, new hyperscale and AI campus builds are moving to the US Southeast, Midwest, and Pacific Northwest. Outside the US, Ireland, Singapore, Germany, and Japan are the largest hyperscale markets by facility count.

What is a hyperscale AI campus and how is it different from a standard hyperscale data center?

A hyperscale AI campus is a multi-building development at gigawatt scale (1 GW to 5 GW of IT load), built specifically for AI training workloads. Standard hyperscale data centers operate at 40 MW to 500 MW. An AI campus is an order of magnitude larger and includes its own dedicated power infrastructure, sometimes with on-site generation.

Full AI campus builds including power, land, connectivity, and equipment cost $45B to $55B per gigawatt (Turner & Townsend, 2025). A 5 GW AI campus at the upper end of that range represents a development value approaching $275B.
