CoreWeave Explained: The AI Cloud Company Behind the GPU Boom

Key Takeaways

1. CoreWeave is an AI cloud company that rents NVIDIA GPU compute to AI labs and enterprises. It started as a crypto mining operation in 2017 and pivoted to GPU cloud services in 2019 after the crypto crash.

2. CoreWeave generated $1.92 billion in revenue in 2024, a 738% increase from $229 million in 2023. It went public on Nasdaq (CRWV) on March 28, 2025 at $40 per share, with a $23 billion fully diluted valuation.

3. CoreWeave runs H100 GPU clusters at 30-60% below AWS and Google Cloud prices. Microsoft alone accounts for roughly two-thirds of CoreWeave's revenue, making customer concentration one of its most cited financial risks.

CoreWeave is an American cloud computing company that rents NVIDIA GPU infrastructure to AI developers, research labs, and enterprises. It does not build AI models. It builds and operates the data centers and GPU clusters that AI companies need to train and run those models. Its main pitch is simple: access to NVIDIA H100 and H200 GPUs at prices that undercut Amazon Web Services, Google Cloud, and Microsoft Azure by 30 to 60%.

The company started in 2017 as Atlantic Crypto, mining Ethereum on NVIDIA GPUs. After the crypto crash of 2019, founders Michael Intrator, Brian Venturo, and Brannin McBee pivoted to renting that GPU inventory to AI researchers. The timing proved fortunate: the pivot came shortly before the AI boom created a global shortage of NVIDIA compute. CoreWeave went from $229 million in annual revenue in 2023 to $1.92 billion in 2024, a 738% increase in one year (CoreWeave S-1 filing, 2025).

This article explains what CoreWeave does, how it prices GPU compute against AWS and Google Cloud, what its data center footprint looks like, who its customers are, how its IPO played out in March 2025, and what the financial risks of its model are.

In This Article

1. What Is CoreWeave?

2. From Ethereum Mining to AI Cloud: CoreWeave's Origin

3. CoreWeave's Data Centers and GPU Fleet

4. CoreWeave GPU Pricing: How It Compares to AWS, Google Cloud, and Lambda Labs

5. CoreWeave IPO: What Happened in March 2025

6. Revenue, Losses, Debt, and Customer Concentration

7. Why CoreWeave Matters for AI Development

8. Three Things People Get Wrong About CoreWeave

What Is CoreWeave?

CoreWeave is a cloud computing company specializing in GPU infrastructure for AI workloads. It operates its own data centers stocked with NVIDIA H100 and H200 GPUs, and it rents access to those GPUs by the hour, the day, or under long-term contracts.

The company is often called an "AI hyperscaler" or a "GPU cloud." The distinction from traditional cloud providers like AWS or Google Cloud is narrow but meaningful: CoreWeave is purpose-built for GPU-heavy workloads. It does not offer the broad portfolio of services those companies do. No databases, no app hosting, no content delivery. Just accelerated compute, primarily aimed at training large language models, fine-tuning AI systems, and running AI inference at scale.

CoreWeave's infrastructure runs on Kubernetes and its own cluster management tools, allowing customers to provision large GPU clusters in minutes rather than waiting weeks for hardware delivery. That speed matters when an AI lab needs to scale from 100 GPUs to 10,000 for a training run and then scale back down.

| Feature | CoreWeave | AWS | Google Cloud | Lambda Labs |
|---|---|---|---|---|
| GPU focus | AI-specific (H100/H200) | Broad portfolio | Broad portfolio | AI-specific |
| H100 on-demand price | $4.25/hr | $12.29/hr | $6.98/hr | $3.32/hr |
| A100 80GB price | $2.21/hr | $4.10/hr | $3.67/hr | $1.48/hr |
| Contract options | Spot, on-demand, reserved | All types | All types | On-demand |
| Primary customers | AI labs, enterprises | All industries | All industries | Researchers, startups |
| Data center regions | US, UK | Global | Global | US |

CoreWeave's position in that table is specific: more expensive than the smallest GPU rental marketplaces like Lambda Labs and RunPod, but significantly cheaper than hyperscalers for the GPU types AI labs actually need. That is the business model.

From Ethereum Mining to AI Cloud: CoreWeave's Origin

The CoreWeave story starts in 2017 with NVIDIA GPUs, but not for AI. Michael Intrator, Brian Venturo, Brannin McBee, and Peter Salanki founded Atlantic Crypto to mine Ethereum. GPUs are efficient for proof-of-work mining, and the four founders assembled a large GPU fleet during the crypto bull market.

The Ethereum crash in 2018 and 2019 left them with depreciated hardware and a choice: liquidate or find another use. They found another use. AI researchers at the time were posting on forums about how hard it was to get GPU time without six-month waits from major cloud providers. Atlantic Crypto rebranded as CoreWeave in 2019 and started renting its existing GPU inventory to machine learning researchers.

The timing was better than they knew. GPT-3 launched in 2020 and showed how much model capability improved with scale. Demand for GPU compute accelerated through 2021 and 2022. By the time ChatGPT launched in November 2022 and triggered a global AI investment boom, CoreWeave had been building GPU infrastructure for three years and had existing supply at a moment when NVIDIA chips were backordered for 12 months or more.

Key milestones:

- 2017: Founded as Atlantic Crypto for Ethereum mining

- 2019: Pivoted to AI cloud services, rebranded as CoreWeave

- 2022: Secured first major enterprise contracts as ChatGPT created GPU demand surge

- 2023: Revenue reached $229 million

- May 2024: Raised $1.1 billion led by Coatue Management at a $19 billion valuation

- October 2024: Cisco invested; Bloomberg reported valuation at $23 billion. Goldman Sachs, JPMorgan Chase, and Morgan Stanley provided a $650 million credit line for data center expansion

- March 28, 2025: IPO on Nasdaq at $40 per share

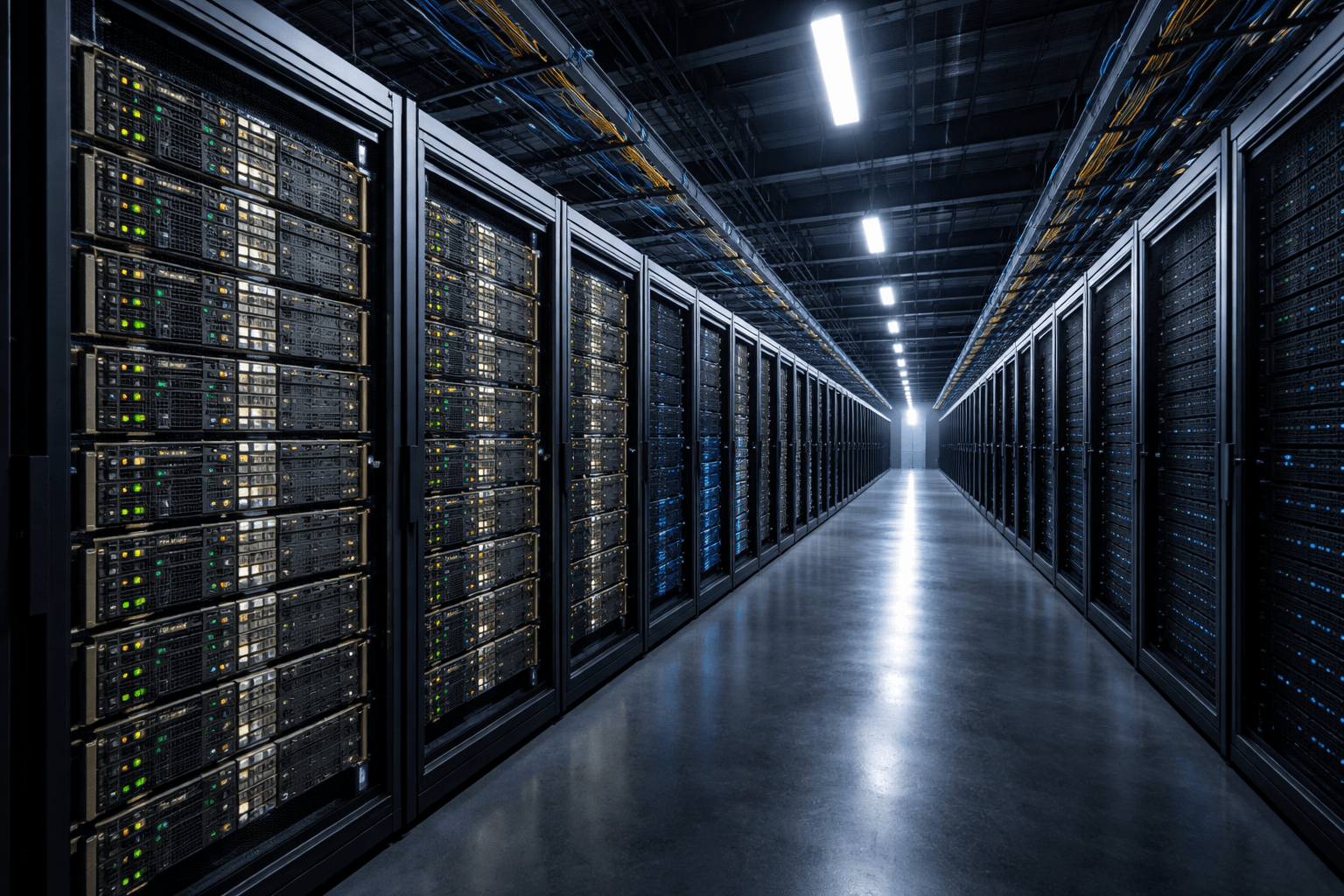

CoreWeave's Data Centers and GPU Fleet

CoreWeave operates data centers in the United States and the United Kingdom. Its two largest known facilities are in New Jersey and Texas.

In 2024, CoreWeave signed a $1.2 billion lease for a 280,000 square foot data center in Kenilworth, New Jersey. That same year, it built a $1.6 billion facility in Plano, Texas in partnership with NVIDIA. When the Plano facility opened, NVIDIA described it as the world's fastest AI supercomputer at the time. The UK expansion added two data centers, part of a broader push into European markets where AI compute demand was outpacing local supply.

CoreWeave's GPU inventory is heavily weighted toward NVIDIA's professional AI accelerators:

- NVIDIA H100 SXM5 and PCIe: The primary GPU for large model training. CoreWeave offers both configurations, with the SXM5 variant delivering higher interconnect bandwidth for multi-GPU training jobs.

- NVIDIA H200: The successor to the H100, with faster HBM3e memory bandwidth. CoreWeave added H200 capacity through 2024 and 2025.

- NVIDIA A100 80GB: An older generation used for inference workloads and fine-tuning tasks where H100 density is not required.

The company's Kubernetes-based orchestration layer allows customers to request clusters of 8, 64, 256, or more GPUs and get them provisioned quickly. Most AI training jobs require hundreds to thousands of GPUs running in parallel. CoreWeave's ability to deliver large clusters on short notice is the core operational advantage it holds over traditional cloud providers that allocate GPU capacity across general-purpose workloads.

According to NVIDIA, which holds a 6% stake in CoreWeave, the company is one of its largest customers for data center GPU products. CoreWeave's infrastructure buildout has been largely funded by debt, with $10 billion in total financing as of its IPO filing.

CoreWeave GPU Pricing: How It Compares to AWS, Google Cloud, and Lambda Labs

CoreWeave's pricing is positioned between the cheapest GPU rental marketplaces and the major cloud providers. The gap between CoreWeave and AWS is large enough to matter for large-scale AI workloads.

On-Demand GPU Pricing Comparison (Q1 2025)

| GPU Model | CoreWeave | Lambda Labs | Google Cloud | AWS |
|---|---|---|---|---|
| NVIDIA H100 80GB | $4.25/hr (PCIe) | $3.32/hr | $6.98/hr | $12.29/hr |
| NVIDIA A100 80GB | $2.21/hr | $1.48/hr | $3.67/hr | $4.10/hr |
| 8x H100 node (full) | $49.24/hr | ~$26.56/hr | ~$55.84/hr | ~$98.32/hr |

Source: CoreWeave pricing page (April 2025), Lambda Labs, Google Cloud, AWS published rates.
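To make the size of the gap concrete, the sketch below computes how far CoreWeave's listed rate sits below (or above) each competitor in the table. The rates are copied from the table; the dictionary layout and function names are illustrative assumptions, not anything CoreWeave publishes.

```python
# On-demand hourly rates in USD, copied from the table above (Q1 2025).
RATES = {
    "H100 80GB": {"CoreWeave": 4.25, "Lambda Labs": 3.32, "Google Cloud": 6.98, "AWS": 12.29},
    "A100 80GB": {"CoreWeave": 2.21, "Lambda Labs": 1.48, "Google Cloud": 3.67, "AWS": 4.10},
    "8x H100 node": {"CoreWeave": 49.24, "Lambda Labs": 26.56, "Google Cloud": 55.84, "AWS": 98.32},
}


def discount_pct(coreweave_rate, other_rate):
    """Percent by which CoreWeave undercuts another provider.

    A negative result means CoreWeave is the more expensive option.
    """
    return (other_rate - coreweave_rate) / other_rate * 100


def discounts_vs_coreweave(rates):
    """Return {gpu: {provider: discount %}} for every non-CoreWeave provider."""
    result = {}
    for gpu, by_provider in rates.items():
        cw = by_provider["CoreWeave"]
        result[gpu] = {
            provider: round(discount_pct(cw, rate), 1)
            for provider, rate in by_provider.items()
            if provider != "CoreWeave"
        }
    return result


if __name__ == "__main__":
    for gpu, row in discounts_vs_coreweave(RATES).items():
        print(gpu, row)
```

On these figures the single-H100 discount is about 65% against AWS and 39% against Google Cloud, while the full-node comparison narrows to about 50% and 12%. The negative values for Lambda Labs confirm that CoreWeave is the pricier option there.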

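For an AI lab running a 512-GPU H100 training job for 30 days (368,640 GPU-hours), the listed rates put AWS at roughly $4.5 million. CoreWeave comes to roughly $2.3 million at the 8-GPU node rate, a gap of about $2.3 million, or roughly $1.6 million at the single-GPU PCIe rate, a gap of about $3.0 million. That is a meaningful cost gap for startups and mid-sized AI companies that are not large enough to negotiate private contracts with AWS at volume discounts.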
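The arithmetic behind a comparison like this is simple enough to sketch. The snippet below multiplies GPU count, duration, and hourly rate using the on-demand prices from the table. The function name and the choice to derive a per-GPU CoreWeave rate from the 8-GPU node price are assumptions made for illustration, and real bills would also reflect reserved discounts, storage, and networking.

```python
HOURS_PER_DAY = 24

# Listed on-demand rates in USD per GPU-hour (from the pricing table).
AWS_H100 = 12.29
COREWEAVE_H100_PCIE = 4.25
COREWEAVE_H100_NODE_PER_GPU = 49.24 / 8  # 8x H100 node price spread over 8 GPUs


def run_cost(num_gpus, days, rate_per_gpu_hour):
    """Total on-demand cost in USD for a run that keeps every GPU busy 24/7."""
    return num_gpus * days * HOURS_PER_DAY * rate_per_gpu_hour


if __name__ == "__main__":
    gpus, days = 512, 30
    aws = run_cost(gpus, days, AWS_H100)
    cw_node = run_cost(gpus, days, COREWEAVE_H100_NODE_PER_GPU)
    cw_pcie = run_cost(gpus, days, COREWEAVE_H100_PCIE)
    print(f"AWS:                   ${aws:,.0f}")
    print(f"CoreWeave (node rate): ${cw_node:,.0f}  gap ${aws - cw_node:,.0f}")
    print(f"CoreWeave (PCIe rate): ${cw_pcie:,.0f}  gap ${aws - cw_pcie:,.0f}")
```

Which CoreWeave rate applies changes the answer noticeably: the node-derived rate gives a gap of roughly $2.3 million for this run, the single-GPU PCIe rate roughly $3.0 million.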

The Number Most Guides Don't Show

CoreWeave's pricing advantage disappears at smaller scales. A single H100 on RunPod costs $2.34/hr. On Lambda Labs, $3.32/hr. CoreWeave charges $4.25/hr for on-demand. CoreWeave is not the cheapest GPU rental option. It is cheaper than the hyperscalers specifically, and it offers something the cheapest providers cannot: large clusters of hundreds or thousands of H100s available on short notice, with managed networking and Kubernetes orchestration included.

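The economics work like this: an AI lab that needs 1,024 H100s for a three-month (90-day) training run consumes about 2.2 million GPU-hours. At the listed on-demand rates, that costs approximately $27.2 million on AWS, or $13.6 million on CoreWeave at the 8-GPU node rate. A gap of roughly $13.6 million on a single run is one reason large buyers look beyond the hyperscalers, and it helps explain why Microsoft, despite building its own data centers, still sends workloads to CoreWeave. The capacity simply does not exist in sufficient quantity elsewhere.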

Long-term reserved contracts are cheaper than on-demand rates at CoreWeave. The company does not publish reserved pricing, but customers like Microsoft and OpenAI have signed multi-year contracts that lock in capacity at negotiated rates below the listed on-demand prices.

CoreWeave IPO: What Happened in March 2025

CoreWeave went public on the Nasdaq stock exchange on March 28, 2025 under the ticker symbol CRWV. The IPO priced at $40 per share, giving the company a fully diluted valuation of $23 billion at launch.

The first day of trading ended flat, with shares closing at $40. That quiet open surprised some observers given the AI hype surrounding the company. Within three trading days, however, the stock had reached $52.57, a 31% gain. By June 2025, CoreWeave shares had surged more than 250% from the IPO price, pushing the market capitalization near $70 billion at the peak.

Major Shareholders at IPO

| Shareholder | Stake |
|---|---|
| Magnetar Capital | 34.5% of Class A shares |
| Fidelity | 7.6% |
| NVIDIA | 6% |
| Michael Intrator (CEO) | Undisclosed |

NVIDIA's 6% stake is notable. It means the world's dominant AI chip maker has a direct financial interest in CoreWeave's success. NVIDIA sells GPUs to CoreWeave, and CoreWeave's growth drives more GPU purchases. That relationship runs deep: NVIDIA has publicly described CoreWeave as a strategic partner and helped fund the Plano, Texas facility.

Bing searches for "coreweave stock" spiked from 380 per month in February 2025 to 11,240 in March 2025, the month of the IPO. That search volume reflects genuine investor curiosity: CoreWeave is one of the few pure-play AI infrastructure companies available on public markets, and investors who missed the NVIDIA run were looking for the next direct exposure to GPU demand.

"CoreWeave was built for this moment. The demand for GPU compute is not a trend. It is a permanent shift in how computation works." (Michael Intrator, CoreWeave CEO, March 2025)

Revenue, Losses, Debt, and Customer Concentration

CoreWeave's financial profile is unusual for a company valued at $23 billion. It is growing at a rate that few technology companies ever reach, while carrying risks that most investors would not accept from a slower-growing business.

Revenue Growth

| Year | Revenue | Change |
|---|---|---|
| 2023 | $229M | n/a |
| 2024 | $1.92B | +738% |

A 738% revenue increase in a single year is one of the fastest growth rates ever reported by a technology company at this scale. It is not sustainable at that rate, but it reflects the environment CoreWeave benefited from: the AI boom created demand faster than any existing cloud provider could serve it.
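For readers who want to check the headline figure, year-over-year growth is the change in revenue divided by the prior year's revenue. The short sketch below applies that to the two revenue numbers in the table; the function name is an illustrative choice.

```python
def yoy_growth_pct(previous, current):
    """Year-over-year growth as a percentage of the previous period."""
    return (current - previous) / previous * 100


if __name__ == "__main__":
    revenue_2023 = 229_000_000    # USD, from the table above
    revenue_2024 = 1_920_000_000  # USD, from the table above
    growth = yoy_growth_pct(revenue_2023, revenue_2024)
    multiple = revenue_2024 / revenue_2023
    print(f"Growth: {growth:.0f}%  (revenue multiple: {multiple:.1f}x)")
```

A 738% increase means 2024 revenue was about 8.4 times 2023 revenue, not 7.4 times: the percentage measures the change, so the multiple is always one higher.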

The Customer Concentration Problem

Microsoft alone contributed approximately two-thirds of CoreWeave's 2024 revenue. The top two customers together accounted for 77% of total sales (CoreWeave S-1, 2025). This is an extreme concentration. Most publicly traded cloud companies aim to keep their largest single customer below 10% of revenue to avoid the risk of one contract renewal derailing the entire business.

The risk is real. If Microsoft decides to bring more workloads in-house, reduces its spend with CoreWeave, or renegotiates contract terms aggressively at renewal, the impact on CoreWeave's revenue would be immediate and large.
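A rough way to size that exposure is to model total revenue as one concentrated customer plus everyone else. The sketch below does that under stated assumptions: Microsoft's share is taken as the article's approximate two-thirds, and the 25% spending cut is a purely hypothetical scenario, not a forecast.

```python
def revenue_after_cut(total_revenue, customer_share, cut_fraction):
    """Revenue remaining if one customer reduces its spend by cut_fraction.

    customer_share and cut_fraction are fractions between 0 and 1.
    All other customers are assumed to keep spending the same amount.
    """
    lost = total_revenue * customer_share * cut_fraction
    return total_revenue - lost


if __name__ == "__main__":
    revenue_2024 = 1_920_000_000  # USD, from the S-1 figure cited above
    microsoft_share = 2 / 3       # approximate share stated in the article
    hypothetical_cut = 0.25       # illustrative scenario only

    remaining = revenue_after_cut(revenue_2024, microsoft_share, hypothetical_cut)
    drop_pct = (revenue_2024 - remaining) / revenue_2024 * 100
    print(f"Remaining revenue: ${remaining:,.0f}  (down {drop_pct:.1f}%)")
```

With a two-thirds share, every 1% that the largest customer trims removes about 0.67% of total revenue, which is why a single renegotiation matters so much here.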

The Debt Load

CoreWeave carried $10 billion in debt financing as of the IPO. The debt was used to purchase GPU hardware, which depreciates over time, and to fund data center construction, which generates returns over 10 to 15 years. The resulting debt-to-revenue ratio of 5.2:1 is exceptionally high by technology company standards. Most profitable tech companies maintain ratios below 1:1.

The math is straightforward: CoreWeave borrowed roughly $67,000 per GPU it operates if the fleet is around 150,000 units, or $40,000 per GPU if it is closer to 250,000 units. NVIDIA H100 GPUs were selling for $25,000 to $40,000 on the secondary market in 2024 and 2025. The debt load is roughly equivalent to the replacement value of the GPU fleet, with limited buffer for depreciation.

CoreWeave posted an $863 million net loss in 2024 alongside its $1.92 billion in revenue. The losses reflect the capital intensity of the business: GPUs, power infrastructure, and data center leases all require upfront investment before they generate returns.
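The ratios in this section can be reproduced from the headline figures. The sketch below computes debt-to-revenue, debt per GPU, and net margin. The two fleet sizes are the article's own hypothetical bounds rather than disclosed numbers, and the function names are illustrative.

```python
DEBT = 10_000_000_000          # USD, debt financing as of the IPO
REVENUE_2024 = 1_920_000_000   # USD
NET_LOSS_2024 = 863_000_000    # USD


def debt_to_revenue(debt, revenue):
    """Debt expressed as a multiple of annual revenue."""
    return debt / revenue


def debt_per_gpu(debt, fleet_size):
    """Debt spread evenly across an assumed number of GPUs."""
    return debt / fleet_size


def net_margin_pct(net_income, revenue):
    """Net income as a percentage of revenue (negative for a loss)."""
    return net_income / revenue * 100


if __name__ == "__main__":
    print(f"Debt-to-revenue: {debt_to_revenue(DEBT, REVENUE_2024):.1f}:1")
    for fleet in (150_000, 250_000):  # hypothetical fleet sizes from the text
        print(f"Debt per GPU at {fleet:,} units: ${debt_per_gpu(DEBT, fleet):,.0f}")
    print(f"Net margin: {net_margin_pct(-NET_LOSS_2024, REVENUE_2024):.1f}%")
```

The net margin works out to roughly minus 45%, meaning CoreWeave lost about 45 cents for every dollar of 2024 revenue.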

"The company's rapid revenue growth demonstrates clear market demand, but the customer concentration and debt levels warrant careful monitoring." (S&P Global analysis, 2025)

Why CoreWeave Matters for AI Development

When a company like Mistral, Cohere, or a well-funded AI startup needs to train a new foundation model, it needs hundreds of NVIDIA H100s running in parallel for weeks or months. Building that infrastructure from scratch takes 12 to 24 months. Buying time on AWS or Google Cloud is available but expensive. Waiting for NVIDIA hardware to ship is an option, but lead times for H100s stretched to 8 to 12 months during 2023 and 2024.

CoreWeave sits in that gap. It buys GPUs in volume, builds the data centers, handles the orchestration, and rents out clusters on timescales from hours to years. For AI labs that need compute now rather than eventually, that is a meaningful offer.

The AI training workloads CoreWeave supports are the same workloads that produced GPT-4, Gemini, Llama 3, and Claude. Specifically:

- Pre-training: Training a foundation model from scratch on trillions of tokens of text. This requires thousands of GPUs running continuously for weeks. The interconnect bandwidth between GPUs matters more than individual GPU performance at this scale.

- Fine-tuning: Adapting an existing model to a specific domain or task. Smaller scale than pre-training, but still GPU-intensive. CoreWeave is cost-competitive with AWS for this workload category.

- Inference at scale: Running a trained model in production to serve user requests. H100s are overbuilt for most inference tasks, but for high-throughput applications serving millions of users, they provide the best latency.

CoreWeave's future depends on NVIDIA continuing to dominate AI chip supply, on demand for GPU compute staying high, and on its customer concentration improving. If any of those three change materially, the business model faces stress. For now, it operates in one of the highest-growth niches in technology, supplying the compute that makes AI possible. For related reading on the GPU types that power CoreWeave's fleet, see our NVIDIA H100 specs and pricing breakdown and comparison with the NVIDIA A100.

According to S&P Global Market Intelligence, the global AI cloud infrastructure market is projected to grow from $XX billion in 2024 to over $300 billion by 2030, driven by demand from hyperscalers, AI labs, and enterprise AI deployment at scale.

Three Things People Get Wrong About CoreWeave

1. CoreWeave is not an AI company

CoreWeave does not build AI models. It does not own any AI intellectual property. It is an infrastructure company that rents computing hardware. The distinction matters for investors: CoreWeave's revenue depends on GPU utilization rates, contract renewal risk, and power costs, not on model performance or AI research breakthroughs. If a better GPU becomes available and CoreWeave's H100 fleet becomes obsolete faster than expected, the business model is affected. That is a different risk profile from companies like OpenAI, Anthropic, or Google DeepMind.

2. CoreWeave is not always cheaper than alternatives

CoreWeave is cheaper than AWS and Google Cloud for H100 and H200 GPUs. It is more expensive than Lambda Labs and RunPod for the same hardware. The advantage CoreWeave offers at scale is cluster availability, not the lowest per-hour price. For researchers running small experiments, Lambda Labs at $3.32/hr beats CoreWeave at $4.25/hr on H100s. For AI companies needing 512 GPUs for three months, CoreWeave's pricing and cluster management become competitive.

3. Microsoft is not a passive customer

Microsoft contributed roughly two-thirds of CoreWeave's 2024 revenue. This is not a generic cloud-to-cloud relationship. Microsoft has invested in OpenAI, which needs massive GPU compute for training and inference. CoreWeave provides that compute in part. The relationship between Microsoft, OpenAI, and CoreWeave is interlocking. If Microsoft's relationship with OpenAI changes, CoreWeave's revenue is affected. The customer concentration risk and the Microsoft-OpenAI dependency are effectively the same risk.

Frequently Asked Questions

What does CoreWeave do?

CoreWeave rents NVIDIA GPU compute infrastructure to AI companies, research labs, and enterprises. It operates its own data centers filled with H100 and H200 GPUs and provides access to large GPU clusters by the hour or under long-term contracts. It does not build AI models or offer general cloud services like databases or app hosting.

When did CoreWeave go public?

CoreWeave went public on the Nasdaq stock exchange on March 28, 2025 under the ticker symbol CRWV. The IPO priced at $40 per share, giving the company a fully diluted valuation of $23 billion. The stock closed flat on the first day at $40, then surged more than 250% over the following months, pushing its market capitalization to nearly $70 billion by June 2025.

What is CoreWeave's stock symbol?

CoreWeave trades on the Nasdaq stock exchange under the ticker symbol CRWV. It went public on March 28, 2025 at $40 per share. The company's largest shareholder as of the IPO was Magnetar Capital at 34.5% of Class A shares, followed by Fidelity at 7.6% and NVIDIA at 6%.

How much does CoreWeave charge for H100 GPUs?

CoreWeave's on-demand price for one NVIDIA H100 PCIe GPU is $4.25 per hour as of April 2025. A full 8-GPU H100 HGX node costs approximately $49.24 per hour in total. For comparison, AWS charges approximately $12.29/hr per H100 and Google Cloud charges $6.98/hr. Lambda Labs charges $3.32/hr. CoreWeave is cheaper than the major hyperscalers but more expensive than smaller GPU rental platforms.

Who are CoreWeave's biggest customers?

Microsoft is CoreWeave's largest customer, contributing approximately two-thirds of CoreWeave's $1.92 billion revenue in 2024. The top two customers combined accounted for 77% of revenue, according to CoreWeave's S-1 filing. OpenAI, which Microsoft has invested heavily in, also uses CoreWeave infrastructure for AI training workloads. This customer concentration is one of the most frequently cited financial risks in CoreWeave's public disclosures.

Is CoreWeave profitable?

No. CoreWeave posted a net loss of $863 million in 2024 alongside $1.92 billion in revenue. The company carries more than $10 billion in total debt financing, used primarily to purchase NVIDIA GPU hardware and build data center infrastructure. Revenue grew 738% year over year from $229 million in 2023 to $1.92 billion in 2024, but the capital intensity of the GPU cloud business means losses continue while the infrastructure investment is repaid over time.

How is CoreWeave different from AWS, Azure, or Google Cloud?

CoreWeave is a GPU-only cloud purpose-built for AI workloads. AWS, Azure, and Google Cloud offer hundreds of services across compute, storage, databases, networking, and AI tools. CoreWeave offers accelerated GPU compute and little else. The practical difference: CoreWeave is 30 to 60% cheaper than the hyperscalers for NVIDIA H100 and H200 GPU rental, can provision large clusters faster, and is optimized specifically for AI training and inference workloads. The tradeoff is no managed databases, no serverless functions, no global CDN, and no integration with enterprise software ecosystems.

Who founded CoreWeave?

CoreWeave was founded in 2017 by Michael Intrator (CEO), Brian Venturo (CTO), Brannin McBee, and Peter Salanki under the original name Atlantic Crypto. The founders pivoted from Ethereum mining to AI cloud services in 2019 after the crypto crash, repurposing their existing NVIDIA GPU fleet for machine learning workloads. Michael Intrator leads the company as CEO, with Nitin Agrawal (formerly of Google) serving as CFO as of 2024.
