Edge AI Explained: How It Works and Why Cloud Cannot Match It


Key Takeaways

- Edge AI runs machine learning inference directly on local devices such as cameras, sensors, and embedded chips, without sending data to a cloud server. Edge inference can complete in under 10 milliseconds; a cloud round-trip takes 50-200ms depending on network conditions.

- The edge AI market was valued at approximately $20-24B in 2024 and is projected to reach $47.6B in 2026, growing at roughly 30% per year (Fortune Business Insights, 2025). NVIDIA, Google, Intel, AWS, and Microsoft all have dedicated edge AI hardware and software platforms.

- Edge AI is most critical where cloud connectivity is unavailable or where raw data volume would overwhelm network bandwidth. An autonomous vehicle generates 1-20 TB of sensor data per hour; transmitting all of it to the cloud for inference is not feasible at the costs or latency that real-time driving requires.

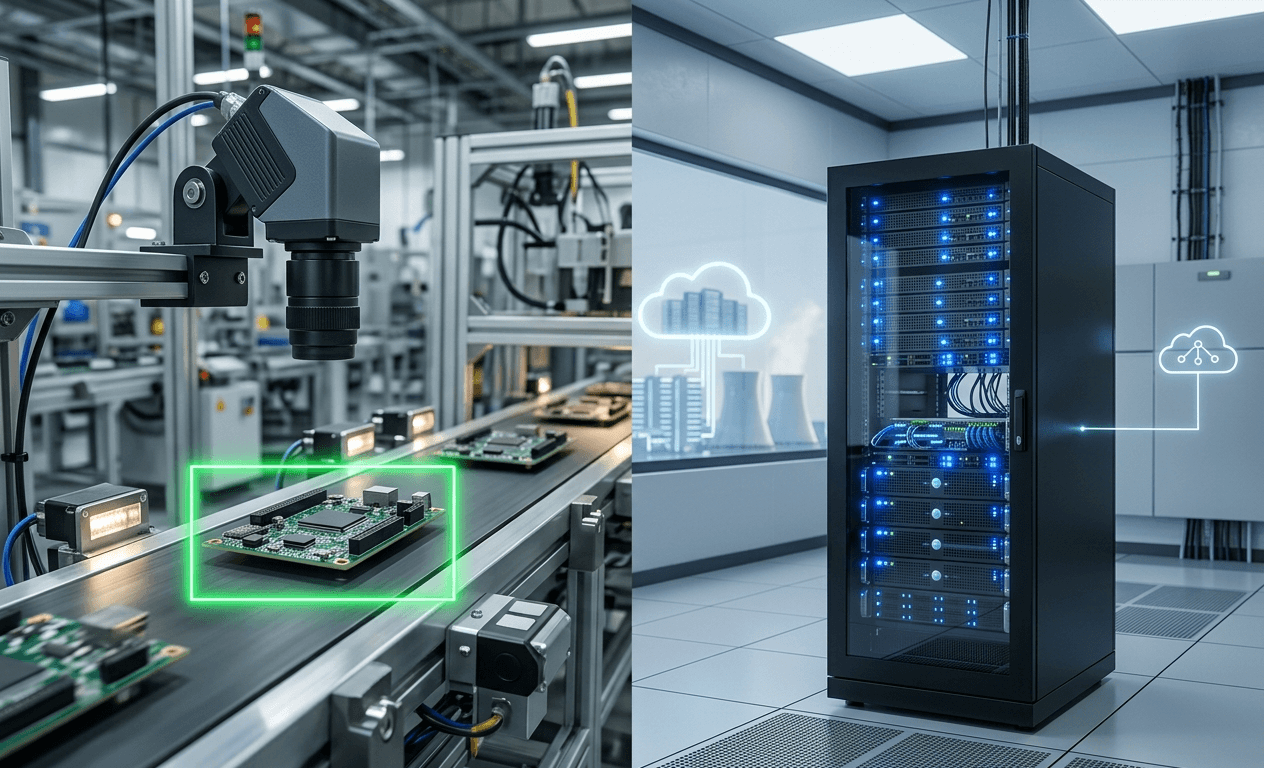

Edge AI runs machine learning models on local devices rather than sending data to a remote cloud server for processing. The device makes the inference decision itself, in milliseconds, using an onboard chip. When a factory camera detects a defective component on a conveyor belt, that decision happens on the camera. When a self-driving vehicle brakes for a pedestrian, that decision happens on the vehicle's onboard compute unit. Neither can wait for a cloud round-trip.

The scale of the shift is significant. According to IDC's 2025 edge computing forecast, global spending on edge computing is projected to reach $261B in 2025 and to grow at a 13.8% compound annual rate. The subset focused specifically on AI inference at the edge is growing faster, at roughly 30% per year.

After reading this guide, you will understand the difference between edge AI and cloud AI, which hardware platforms enable edge inference, and what the real trade-offs are between processing data locally versus sending it to a data center.

In This Article

- What Edge AI Is and How It Differs from Cloud AI

- Edge AI Hardware: NVIDIA Jetson, Google Edge TPU, and Intel

- How Edge AI Works: The Software and Deployment Stack

- Edge AI Market Size, Growth, and Investment Trends

- Real-World Edge AI Use Cases: Manufacturing, Vehicles, and Healthcare

- Edge AI vs Cloud AI: When to Use Each

- What Is Next for Edge AI: On-Device LLMs and Physical AI

What Edge AI Is and How It Differs from Cloud AI

Edge AI is the deployment of machine learning models on edge devices for real-time inference. "Edge" refers to the network edge: the physical location closest to where data is generated, whether that is a factory floor, a vehicle, a hospital room, or a smartphone. The model runs on the device itself, not on a server in a data center.

Cloud AI, by contrast, sends the input data (an image, audio clip, or sensor reading) across a network to a remote server, which runs the model and returns the result. The full round-trip, including network transmission, queuing on the server, inference, and return transmission, typically takes 50-200 milliseconds under normal conditions. On a congested or unreliable network, it can take seconds.

That distinction matters in three specific situations:

- Latency-critical decisions: a self-driving car braking for an obstacle cannot wait 200ms for a cloud response; at 100 km/h it travels about 5.6 meters in that time. The vehicle's perception and control loop must go from sensor input to actuator command within tens of milliseconds.

- Bandwidth-constrained environments: a factory with 200 cameras recording in 4K cannot stream raw video from all of them simultaneously. Edge inference on each camera reduces the outbound data stream from gigabytes per second to kilobytes per second (only alerts and metadata, not raw video).

- Connectivity-unavailable scenarios: a drone mapping a remote area, an agricultural sensor in a field without cell coverage, or a submarine cable monitoring system all need to function without network access.
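To put rough numbers on the bandwidth point, the sketch below compares raw 4K video, compressed video, and edge-generated metadata for a 200-camera site. The 3840x2160 resolution matches the 4K video in the example above; the 30 fps frame rate, 24-bit color, 20 Mbit/s compressed bitrate, and one 200-byte alert message per camera per second are assumptions chosen for illustration, not measurements.

```python
# Back-of-the-envelope bandwidth for a 200-camera factory.
# All camera parameters used below are illustrative assumptions.

CAMERAS = 200

def raw_video_gb_per_s(width: int, height: int, bytes_per_pixel: int,
                       fps: int, cameras: int) -> float:
    """Uncompressed video rate in gigabytes per second (1 GB = 1e9 bytes)."""
    return width * height * bytes_per_pixel * fps * cameras / 1e9

def compressed_gb_per_s(mbps_per_camera: float, cameras: int) -> float:
    """Encoded video rate in GB/s, given a per-camera bitrate in megabits/s."""
    return mbps_per_camera * cameras / 8 / 1000

def metadata_kb_per_s(bytes_per_message: int, messages_per_s: float,
                      cameras: int) -> float:
    """Alert/metadata rate in kilobytes per second (1 KB = 1,000 bytes)."""
    return bytes_per_message * messages_per_s * cameras / 1000

if __name__ == "__main__":
    raw = raw_video_gb_per_s(3840, 2160, 3, 30, CAMERAS)  # 4K, 24-bit color, 30 fps
    enc = compressed_gb_per_s(20, CAMERAS)                 # assumed ~20 Mbit/s stream
    meta = metadata_kb_per_s(200, 1, CAMERAS)              # assumed 200-byte message/s
    print(f"raw 4K video:       {raw:8.1f} GB/s")
    print(f"compressed video:   {enc:8.2f} GB/s")
    print(f"edge metadata only: {meta:8.1f} KB/s")
```

Under these assumptions, raw video comes to roughly 149 GB/s, compression still leaves about 0.5 GB/s (4 Gbit/s) of sustained uplink, and metadata-only traffic is about 40 KB/s.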

Edge computing is the broader infrastructure layer: servers, gateways, and compute nodes located at or near the network edge. Edge AI is specifically the subset of workloads running machine learning models on that infrastructure. The two terms are often used interchangeably but are not identical. For background on how cloud data centers handle the workloads that are not suitable for edge processing, see our AI data centers explainer.

Edge AI Hardware: NVIDIA Jetson, Google Edge TPU, and Intel

Every edge AI deployment depends on a hardware platform that can run neural network inference under tight power and size constraints. The major platforms differ in compute power, power consumption, price, and software ecosystem.

| Platform | Manufacturer | Compute | Power | Price | Best For |
|---|---|---|---|---|---|
| Jetson Orin Nano | NVIDIA | 20-40 TOPS | 5-15W | $149-499 | Robotics, drones, small cameras |
| Jetson AGX Orin | NVIDIA | 200-275 TOPS | 15-60W | $499-1,099 | Autonomous vehicles, industrial AI |
| Edge TPU (Coral) | Google | 4 TOPS | 2W | $30-130 (module/board) | Low-power vision tasks, IoT sensors |
| OpenVINO toolkit | Intel | Varies (CPU/GPU/VPU) | Varies | Free (open-source software) | Optimizing models for Intel hardware |
| Snapdragon 8 Gen 3 | Qualcomm | 45 TOPS (NPU) | ~5W | Integrated in phone SoC | Smartphone AI, on-device LLM inference |

Sources: NVIDIA product specifications 2025; Google Coral documentation; Qualcomm product brief 2024.
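One way to read the table is peak efficiency in TOPS per watt. The sketch below pairs each platform's top-end TOPS figure with its top-end power figure from the table; vendors quote peak TOPS at different precisions and sometimes with sparsity, so the ranking is indicative only. The OpenVINO row is omitted because it has no fixed compute or power figures.

```python
# Peak-efficiency comparison using the top-end figures from the table.
# Vendor peak TOPS are not directly comparable (precision and sparsity differ),
# so treat the ranking as indicative only.
PLATFORMS = {
    "Jetson Orin Nano": (40, 15),       # (peak TOPS, max power in watts)
    "Jetson AGX Orin": (275, 60),
    "Edge TPU (Coral)": (4, 2),
    "Snapdragon 8 Gen 3 NPU": (45, 5),  # ~5W is approximate
}

def tops_per_watt(tops: float, watts: float) -> float:
    """Peak tera-operations per second delivered per watt."""
    return tops / watts

def rank_by_efficiency(platforms: dict) -> list:
    """Return (name, TOPS/W) pairs, most efficient first."""
    scored = [(name, tops_per_watt(t, w)) for name, (t, w) in platforms.items()]
    return sorted(scored, key=lambda item: item[1], reverse=True)

if __name__ == "__main__":
    for name, eff in rank_by_efficiency(PLATFORMS):
        print(f"{name:<24}{eff:6.2f} TOPS/W")
```

With these inputs the Snapdragon NPU ranks first at about 9 TOPS/W, followed by Jetson AGX Orin (about 4.6), Jetson Orin Nano (about 2.7), and the Edge TPU (2.0).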

NVIDIA's Jetson line is the dominant platform for industrial and automotive edge AI. The Jetson AGX Orin, at up to 275 TOPS (tera-operations per second), can run models like YOLOv8 for real-time object detection at 60+ frames per second. Its 60W maximum power draw is roughly one-tenth of the 700W maximum of an NVIDIA H100 SXM data center GPU, at the cost of much lower throughput.
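Whether a model runs in real time on a given device can be sanity-checked by multiplying its per-frame cost by the frame rate and comparing the result with a realistic fraction of the device's peak TOPS. In this sketch, the 80 billion operations per frame and the 30% sustained utilization are hypothetical values chosen for illustration; the per-frame cost must also be counted the same way as the vendor's rating (one multiply-accumulate usually counts as two operations).

```python
def required_tops(gops_per_frame: float, fps: float) -> float:
    """Sustained throughput a model needs, in TOPS (1 TOPS = 1,000 GOPS)."""
    return gops_per_frame * fps / 1000.0

def fits(gops_per_frame: float, fps: float, peak_tops: float,
         utilization: float = 0.3) -> bool:
    """True if demand fits within the usable share of a device's peak TOPS.

    `utilization` is an assumed fraction of peak that real models sustain.
    """
    return required_tops(gops_per_frame, fps) <= peak_tops * utilization

if __name__ == "__main__":
    GOPS_PER_FRAME = 80.0  # hypothetical detector cost per frame
    FPS = 60
    print(f"demand: {required_tops(GOPS_PER_FRAME, FPS):.1f} TOPS")
    for name, peak in [("Jetson AGX Orin", 275), ("Edge TPU (Coral)", 4)]:
        print(name, "fits" if fits(GOPS_PER_FRAME, FPS, peak) else "does not fit")
```

Under these assumptions the model needs about 4.8 TOPS: comfortably within the usable share of the Jetson AGX Orin's 275 TOPS, but well beyond the Edge TPU's 4 TOPS.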

Google's Edge TPU targets the lowest-power end of the market. At 4 TOPS and 2 watts, it is designed for always-on vision tasks in battery-powered or passively cooled devices. It runs only TensorFlow Lite models that have been quantized to 8-bit integers and compiled with the Edge TPU Compiler, which limits flexibility but keeps the hardware simple and cheap.

Intel's OpenVINO is a software framework rather than a hardware platform. It optimizes trained neural networks for execution on Intel CPUs and integrated GPUs; older releases also targeted Movidius Vision Processing Units (VPUs), which are found in industrial cameras and smart gateways. OpenVINO's strength is compatibility: it supports models from TensorFlow, PyTorch, ONNX, and other frameworks.

For a deeper look at how AI accelerator chips work and what TOPS means as a performance metric, see our AI accelerator explainer.

How Edge AI Works: The Software and Deployment Stack

Running a machine learning model at the edge involves four steps; the last three differ significantly from cloud deployment.

1. Model training: Training still happens in the cloud or on a GPU workstation. Edge devices lack the compute and memory to train large models from scratch. A model trained on thousands of images in the cloud gets compressed and deployed to edge hardware.

2. Model optimization: A model trained in PyTorch or TensorFlow typically runs too slowly and uses too much memory for edge hardware. Quantization (reducing weight precision from 32-bit float to 8-bit integer) cuts weight storage by 4x and typically speeds up inference 2-4x on hardware with INT8 support, usually with minimal accuracy loss. Pruning removes low-importance parameters. NVIDIA's TensorRT, Google's TensorFlow Lite, and Intel's OpenVINO each have their own optimization pipelines for their hardware.

3. Edge deployment: The optimized model is deployed to the edge device via a management platform. AWS Greengrass, Microsoft Azure IoT Edge, and NVIDIA Metropolis each provide frameworks for pushing model updates to fleets of edge devices, monitoring inference performance, and rolling back failed deployments.

4. Inference loop: The edge device runs the model continuously on incoming sensor data. Results are acted on locally (trigger an alarm, adjust a machine setting, apply brakes) and summarized data (not raw inputs) is sent to the cloud for logging, analytics, and retraining.
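The quantization step in stage 2 can be illustrated with a minimal per-tensor INT8 sketch using only the Python standard library. It shows the 4x storage reduction (4-byte floats to 1-byte integers) and that the rounding error stays within one quantization step; it does not show speedups, which depend on INT8 hardware support. Production toolchains such as TensorRT, TensorFlow Lite, and OpenVINO use calibration data and often per-channel scales, so treat this as a simplified illustration rather than how any of them is implemented.

```python
from array import array

def quantize_int8(weights):
    """Per-tensor affine quantization of float weights to signed 8-bit integers.

    Returns (int8 array, scale, zero_point) such that
    weight ~= (q - zero_point) * scale.
    """
    # Include 0.0 in the range so that zero is exactly representable.
    w_min = min(min(weights), 0.0)
    w_max = max(max(weights), 0.0)
    scale = (w_max - w_min) / 255.0 or 1.0     # 256 int8 levels; guard all-zero input
    zero_point = round(-128 - w_min / scale)   # integer that represents 0.0
    q = [max(-128, min(127, round(w / scale) + zero_point)) for w in weights]
    return array("b", q), scale, zero_point

def dequantize(q, scale, zero_point):
    """Map int8 values back to approximate float weights."""
    return [(v - zero_point) * scale for v in q]

if __name__ == "__main__":
    weights = array("f", [0.42, -1.3, 0.07, 2.1, -0.55, 0.9])  # float32 weights
    q, scale, zp = quantize_int8(weights)
    restored = dequantize(q, scale, zp)
    print("bytes per weight:", weights.itemsize, "->", q.itemsize)
    print("max abs error:", max(abs(a - b) for a, b in zip(weights, restored)))
```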

The management platforms matter more than they appear. A company with 10,000 smart cameras cannot manually update models on each device. AWS Greengrass and Azure IoT Edge solve the fleet management problem: a new model version can be staged, tested on 1% of devices, then rolled out to all devices without human intervention at each site.
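The staged rollout described above (1% of devices first, then the whole fleet) needs a stable way to decide which devices belong to each stage. The sketch below is a generic, hypothetical approach that hashes each device ID together with the model version; it is not the AWS Greengrass or Azure IoT Edge deployment API, both of which have their own targeting mechanisms. The device IDs and version string are made up for illustration.

```python
import hashlib

def rollout_bucket(device_id: str, model_version: str) -> float:
    """Map (device, version) to a stable pseudo-random number in [0, 1)."""
    digest = hashlib.sha256(f"{model_version}:{device_id}".encode()).digest()
    # Keep 53 bits so the division below is exact in a Python float.
    return (int.from_bytes(digest[:8], "big") >> 11) / 2**53

def in_rollout(device_id: str, model_version: str, fraction: float) -> bool:
    """True if the device falls inside the first `fraction` of the fleet for this version."""
    return rollout_bucket(device_id, model_version) < fraction

if __name__ == "__main__":
    fleet = [f"cam-{i:05d}" for i in range(10_000)]  # hypothetical device IDs
    for stage in (0.01, 0.10, 1.0):                  # canary, partial, full rollout
        cohort = [d for d in fleet if in_rollout(d, "model-v2", stage)]
        print(f"{stage:>5.0%} stage: {len(cohort)} devices")
```

Hashing the version into the key reshuffles the canary group for each release, so the same devices are not always first, while raising the fraction within a release only ever adds devices to the cohort.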

Edge AI Market Size, Growth, and Investment Trends

The edge AI market was valued at approximately $20-24B in 2024 and is projected to reach $35.8B in 2025 and $47.6B in 2026, according to Fortune Business Insights. The compound annual growth rate through 2034 is approximately 30%, driven by industrial automation, autonomous vehicle deployment, and the expansion of AI-capable smartphones.
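The growth figures above can be checked with the standard compound annual growth rate formula, CAGR = (end / start)^(1 / years) - 1. The sketch applies it to the 2025 and 2026 values quoted above; the roughly 30% figure is a long-run average, so a single year's implied rate can differ from it.

```python
def cagr(start_value: float, end_value: float, years: float) -> float:
    """Compound annual growth rate: (end / start) ** (1 / years) - 1."""
    if start_value <= 0 or years <= 0:
        raise ValueError("start_value and years must be positive")
    return (end_value / start_value) ** (1.0 / years) - 1.0

if __name__ == "__main__":
    # Fortune Business Insights figures quoted above, in USD billions.
    growth = cagr(35.8, 47.6, 1)  # 2025 -> 2026
    print(f"implied 2025-2026 growth: {growth:.1%}")
```

The $35.8B to $47.6B step implies growth of about 33% for that year.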

The broader edge computing market, which includes all non-AI workloads processed at the network edge, is substantially larger. According to IDC's global edge computing forecast, total edge computing spending reaches $261B in 2025, with a projected 13.8% CAGR. AI inference workloads represent the fastest-growing component within that total.

North America leads with approximately 40% of the global edge AI market in 2024 (Grand View Research, 2024). Asia-Pacific is the fastest-growing region, driven by manufacturing automation in China, South Korea, and Japan.

The Number Most Guides Don't Show

An autonomous vehicle generates approximately 1-20 TB of raw sensor data per hour from cameras, LiDAR, radar, and ultrasonic sensors. Take $0.09 per GB, AWS's standard rate for internet data transfer out, as a rough per-gigabyte price for moving data (uploads into AWS are free, but a moving vehicle would pay a cellular carrier instead). At the low end of 1 TB (1,024 GB) per hour, sending that data to the cloud for inference would cost $92.16 per vehicle per hour in transfer fees alone, before any compute costs. For a commercial robotaxi fleet of 10,000 vehicles operating 10 hours per day, that is $9.2M per day, or $3.36B per year, just in data transfer.
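The arithmetic above can be reproduced in a few lines. The sketch uses the same 1 TB = 1,024 GB convention and $0.09 per-GB price as the text; the extra uplink calculation, which treats a GB as 10^9 bytes, is an added estimate of the sustained bandwidth each vehicle would need to upload its data as it is produced.

```python
GB_PER_TB = 1024   # same TB-to-GB convention as the figures in the text
USD_PER_GB = 0.09  # per-GB transfer price quoted in the text

def cost_per_vehicle_hour(tb_per_hour: float) -> float:
    """Transfer cost in USD for one vehicle-hour of raw sensor data."""
    return tb_per_hour * GB_PER_TB * USD_PER_GB

def fleet_cost(tb_per_hour: float, vehicles: int, hours_per_day: float,
               days: int = 1) -> float:
    """Transfer cost in USD for a whole fleet over `days` days."""
    return cost_per_vehicle_hour(tb_per_hour) * vehicles * hours_per_day * days

def uplink_gbps(tb_per_hour: float) -> float:
    """Sustained uplink needed to ship the data as it is produced (GB = 1e9 bytes)."""
    return tb_per_hour * GB_PER_TB * 8 / 3600

if __name__ == "__main__":
    print(f"per vehicle-hour: ${cost_per_vehicle_hour(1):,.2f}")
    print(f"fleet per day:    ${fleet_cost(1, 10_000, 10):,.0f}")
    print(f"fleet per year:   ${fleet_cost(1, 10_000, 10, days=365):,.0f}")
    print(f"uplink needed:    {uplink_gbps(1):.2f} Gbit/s per vehicle")
```

At the 1 TB/hour low end, each vehicle would also need a sustained uplink of roughly 2.3 Gbit/s.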

Edge inference eliminates that cost by making the decision on the vehicle. The cloud receives only the result (obstacle detected at position X at time T), not the terabytes of raw sensor data that generated it. This is why autonomous vehicle companies do not debate edge versus cloud inference. The economics make the decision for them.

The same logic, at smaller scale, applies to smart factory cameras, retail shelf-monitoring systems, and healthcare diagnostic devices. The question is not whether edge inference is better in theory. It is whether the latency or bandwidth constraints make cloud inference impractical in practice.

Real-World Edge AI Use Cases: Manufacturing, Vehicles, and Healthcare

The three largest deployment categories for edge AI as of 2025 are industrial manufacturing, autonomous and semi-autonomous vehicles, and smart cameras for security and retail.

Manufacturing quality control is the most commercially mature segment. NVIDIA's Metropolis platform, combined with Jetson hardware, powers defect detection systems at factories for companies including BMW, Siemens, and Foxconn. A typical installation uses smart cameras running convolutional neural networks (CNNs) to inspect parts at 60-120 frames per second, flagging defects that human inspectors miss at production line speeds. The latency requirement is strict: a defect on a moving assembly line must be detected and flagged before the part moves to the next station, typically within 100ms from capture to alarm.
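A smart inspection camera faces two separate timing constraints: to keep up with the camera without dropping frames, inference must average no more than one frame interval per frame, and the whole capture-to-alarm path must fit the roughly 100ms budget. The sketch below checks both; the per-stage latencies and the 0.5 m/s belt speed are hypothetical values for illustration.

```python
def frame_interval_ms(fps: float) -> float:
    """Average time available per frame if inference must keep up with the camera."""
    return 1000.0 / fps

def travel_mm(belt_speed_m_per_s: float, latency_ms: float) -> float:
    """Distance a part moves during `latency_ms` (m/s x ms = mm)."""
    return belt_speed_m_per_s * latency_ms

def within_budget(stage_ms: dict, budget_ms: float = 100.0) -> bool:
    """True if the summed capture-to-alarm latency fits the budget."""
    return sum(stage_ms.values()) <= budget_ms

if __name__ == "__main__":
    for fps in (60, 120):
        print(f"{fps} fps -> {frame_interval_ms(fps):.1f} ms per frame")
    # Hypothetical per-stage latencies for one smart camera, in milliseconds.
    stages = {"capture": 8, "preprocess": 3, "inference": 12,
              "postprocess": 2, "alarm_io": 5}
    print("total:", sum(stages.values()), "ms; within budget:", within_budget(stages))
    print("part travel in 100 ms at 0.5 m/s:", travel_mm(0.5, 100), "mm")
```

At 120 fps the per-frame budget is about 8.3ms, and with these assumed numbers a part moves 50mm during a 100ms capture-to-alarm window.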

Autonomous vehicles represent the highest-compute edge AI application. NVIDIA's Drive AGX Orin platform, used by more than 25 automotive OEMs as of 2025, delivers 254 TOPS for perception, planning, and control tasks. Tesla uses its own in-house Full Self-Driving (FSD) computer, which pairs two FSD chips for redundancy and delivers 144 TOPS combined (about 72 TOPS per chip). The vehicle must process inputs from multiple cameras (eight on Tesla's vehicles), plus radar and ultrasonic sensors where fitted, and produce driving decisions at 10-40ms cycle times.

Healthcare diagnostic devices are an emerging segment. Portable ultrasound devices from GE Healthcare and Butterfly Network run AI models for real-time image enhancement and anomaly flagging locally, on the handheld probe or the smartphone or tablet it connects to. This enables AI-assisted diagnosis in locations without reliable internet access, including rural clinics, field hospitals, and remote sites.

Smart cameras for retail analytics, building security, and traffic management represent the highest-volume segment by device count. Hikvision, Axis Communications, and Hanwha Vision all ship cameras with onboard NPUs (neural processing units) capable of running person detection, face recognition, and license plate reading locally, without streaming video to a cloud server.

Edge AI vs Cloud AI: When to Use Each

The choice between edge inference and cloud inference depends on four variables: latency requirement, data volume, connectivity reliability, and privacy constraints.

| Factor | Edge AI | Cloud AI |
|---|---|---|
| Inference latency | Under 10ms possible | 50-200ms typical round-trip |
| Data volume | Reduces bandwidth: send results, not raw data | Requires raw data upload |
| Connectivity | Works offline | Requires reliable network |
| Privacy | Data stays on device | Data leaves the facility |
| Model complexity | Limited by device compute and memory | Much larger models feasible on data center GPUs |
| Cost at scale | Higher upfront hardware, near-zero per-inference | Low upfront, high per-inference at volume |
| Model updates | Requires managed deployment system | Instant server-side update |

The correct answer for most production systems is not purely one or the other. The standard architecture is a hybrid: edge devices handle time-critical inference (object detection, anomaly flagging, real-time control), while the cloud handles model training on aggregated data, long-range analytics, and business intelligence.

For example, a smart factory might use edge cameras to detect defects in real time, but send anonymized metadata (defect type, location, timestamp) to the cloud for trend analysis and to generate new training data for the next model version. The raw video never leaves the factory. The insights reach company leadership dashboards via the cloud.
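The hybrid pattern in this example can be sketched as a small loop: the decision and the local action happen on the device, and only compact metadata is queued for the cloud. In this sketch `EdgeInspector`, the `detect` callable, and the station name are hypothetical stand-ins for a real on-device model and upload client.

```python
import json
import time
from dataclasses import dataclass, field
from typing import Callable, Optional

@dataclass
class EdgeInspector:
    """Toy hybrid loop: decide on-device, queue only metadata for the cloud.

    `detect` stands in for the on-device model: it takes a frame and returns
    a defect label or None. Raw frames are never added to the upload queue.
    """
    detect: Callable[[object], Optional[str]]
    station: str
    alarms: int = 0
    outbox: list = field(default_factory=list)

    def process(self, frame) -> None:
        label = self.detect(frame)   # local inference
        if label is None:
            return
        self.alarms += 1             # local action, e.g. divert the part
        self.outbox.append({         # metadata only, no pixels
            "defect": label,
            "station": self.station,
            "ts": time.time(),
        })

    def drain(self) -> str:
        """Serialize and clear queued metadata (stand-in for a cloud upload)."""
        payload = json.dumps(self.outbox)
        self.outbox.clear()
        return payload

if __name__ == "__main__":
    inspector = EdgeInspector(
        detect=lambda f: "scratch" if f.get("scratch") else None,
        station="line-3",
    )
    for frame in [{"scratch": False}, {"scratch": True}, {}]:
        inspector.process(frame)
    print(inspector.alarms, inspector.drain())
```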

The key question to ask before choosing: can the decision wait 200 milliseconds? If yes, cloud inference is simpler and more flexible. If no, the workload belongs at the edge.
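The decision rule above, extended to the four variables from the comparison table, can be written as a simple placement function. The field names, the ordering of the checks, and the 200ms default (the upper end of the typical cloud round-trip quoted earlier) are assumptions for illustration, not a standard; real systems often split a workload across both tiers.

```python
from dataclasses import dataclass

@dataclass
class Workload:
    latency_budget_ms: float      # how long the decision can wait
    raw_data_mbps: float          # sensor data rate if streamed unprocessed
    uplink_mbps: float            # reliable upstream bandwidth at the site
    must_work_offline: bool
    data_must_stay_on_site: bool

def place_inference(w: Workload, cloud_round_trip_ms: float = 200.0) -> str:
    """Apply connectivity/privacy, latency, then bandwidth checks; default to cloud."""
    if w.must_work_offline or w.data_must_stay_on_site:
        return "edge"
    if w.latency_budget_ms < cloud_round_trip_ms:
        return "edge"
    if w.raw_data_mbps > w.uplink_mbps:
        return "edge"
    return "cloud"

if __name__ == "__main__":
    # Hypothetical workloads for illustration.
    defect_camera = Workload(100, 4000, 1000, False, False)
    nightly_report = Workload(60_000, 5, 100, False, False)
    print(place_inference(defect_camera), place_inference(nightly_report))
```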

For teams evaluating which types of AI workloads belong in the cloud versus at the edge, our guide to AI training vs inference explains the compute requirements for each and which hardware is optimized for each workload type.

What Is Next for Edge AI: On-Device LLMs and Physical AI

The most significant shift underway in edge AI as of 2025-2026 is the move toward running small language models directly on endpoint devices, without any cloud connection.

Qualcomm's Snapdragon 8 Gen 3 mobile processor includes a 45-TOPS NPU capable of running 7-billion-parameter language models locally on a smartphone. Samsung's Galaxy S24 series offers Galaxy AI features such as summarization and translation, some of which can run entirely on-device; the S24 Ultra uses the Snapdragon 8 Gen 3 in all markets. Apple's on-device foundation model for Apple Intelligence, which runs on Apple silicon including the M4 chip series and its Neural Engine, has approximately 3 billion parameters.
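A quick way to see why on-device language models depend on compression is to estimate the memory needed for the weights alone: parameters times bits per weight, divided by eight. This sketch ignores the KV cache, activations, and runtime overhead, so real memory use is higher.

```python
def weight_memory_gb(params_billion: float, bits_per_weight: float) -> float:
    """Memory for model weights alone, in GB (1 GB = 1e9 bytes).

    Ignores the KV cache, activations, and runtime overhead, which add more.
    """
    return params_billion * bits_per_weight / 8

if __name__ == "__main__":
    for params in (3, 7):
        for bits in (16, 8, 4):
            gb = weight_memory_gb(params, bits)
            print(f"{params}B params @ {bits:>2}-bit: {gb:5.1f} GB")
```

A 7B-parameter model needs about 14 GB for 16-bit weights but about 3.5 GB at 4 bits, which is why on-device deployments lean heavily on 8-bit and 4-bit quantization.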

NVIDIA's "physical AI" concept, outlined by CEO Jensen Huang at GTC 2024, describes the next generation of edge AI as systems that understand and interact with the physical world: robots, autonomous vehicles, and industrial machines that respond to their environment in real time. The NVIDIA Jetson Thor platform, targeting 2025-2026 release, delivers 800 TOPS specifically for humanoid robotics and advanced autonomous vehicle applications.

Three trends to watch in 2026 and beyond:

1. Model compression: quantization and distillation techniques continue improving, bringing larger model capabilities to smaller devices. GPT-4-class reasoning may be running on edge hardware within 3-5 years.

2. Federated learning: training model updates on edge devices using local data, then aggregating updates to a central model without transmitting raw data. This addresses both bandwidth and privacy constraints simultaneously.

3. AI at the network edge (telco edge): 5G base stations running AI inference for applications like real-time translation, AR overlay, and industrial control, reducing latency below what device-only processing can achieve for compute-heavy models.
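The aggregation step in federated learning (trend 2) is often done in the style of Federated Averaging: the server averages the model weights returned by each device, weighted by how many local examples each one trained on. The sketch below shows only that server-side step with made-up numbers; production systems add client sampling, secure aggregation, and many training rounds.

```python
from typing import List, Sequence, Tuple

def federated_average(updates: Sequence[Tuple[Sequence[float], int]]) -> List[float]:
    """Weighted average of client model weights, in the style of Federated Averaging.

    Each update is (weights, num_local_examples). Only these numbers leave the
    device; the training data itself stays local.
    """
    total = sum(n for _, n in updates)
    if total == 0:
        raise ValueError("no training examples reported")
    dim = len(updates[0][0])
    return [sum(w[i] * n for w, n in updates) / total for i in range(dim)]

if __name__ == "__main__":
    client_updates = [([1.0, 2.0], 100), ([3.0, 4.0], 300)]  # hypothetical devices
    print(federated_average(client_updates))  # [2.5, 3.5]
```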

Frequently Asked Questions

What is the difference between edge AI and cloud AI?

Edge AI runs machine learning inference directly on a local device (camera, vehicle, sensor, phone) without sending data to a remote server. Cloud AI sends input data to a data center for inference. Edge AI delivers lower latency (under 10ms versus 50-200ms for cloud) and works without internet connectivity, but is limited by the compute available on the device.

What hardware is used for edge AI?

Common edge AI hardware includes NVIDIA Jetson modules (20-275 TOPS, $149-1,099), Google Coral Edge TPU (4 TOPS, 2W, $30-130), Intel Movidius VPUs for camera and gateway applications, and Qualcomm Snapdragon SoCs for smartphones (45 TOPS). The right platform depends on the inference speed, power budget, and model size requirements of the application.

How large is the edge AI market?

The edge AI market was valued at approximately $20-24B in 2024 and is projected to reach $47.6B in 2026, growing at roughly 30% per year through 2034 (Fortune Business Insights, 2025). The broader edge computing market, covering all workloads at the network edge, is projected at $261B in 2025 (IDC, 2025).

Can edge AI run large language models?

Small language models up to about 7 billion parameters can run on current high-end edge hardware. Qualcomm Snapdragon 8 Gen 3 (45 TOPS NPU) and Apple M4 Neural Engine both support on-device inference for 3-7B parameter models. GPT-4-class models (unofficially estimated at about 1.76 trillion parameters; OpenAI has not published the figure) cannot currently run at the edge due to memory and compute constraints.

What are the main use cases for edge AI?

The three largest edge AI deployment categories are: industrial manufacturing quality control (defect detection cameras at 60-120 fps), autonomous vehicles (254-275 TOPS for real-time perception and control), and smart cameras for security, retail, and traffic management. Healthcare diagnostic devices and on-device smartphone AI are fast-growing segments.

Does edge AI require internet connectivity?

No. Edge AI runs inference locally on the device, which means it functions without internet or network access. This is one of its key advantages for applications in remote environments, manufacturing floors with air-gapped networks, and moving vehicles. Cloud connectivity is typically used for model updates, logging, and sending summarized results to analytics platforms, but is not required for real-time inference.

What is the difference between edge computing and edge AI?

Edge computing is the broader term for any data processing that happens at or near the network edge, close to where data is generated. Edge AI is specifically the subset of edge computing workloads that involve machine learning inference. All edge AI is a form of edge computing, but edge computing also includes data filtering, local storage, protocol translation, and other tasks that do not involve AI.