Open Source LLMs: The Best Models You Can Run Yourself in 2026

Key Takeaways

- An open source LLM is a model with publicly released weights that anyone can download and run. Most popular models, including Llama 4 and Gemma 3, are open-weight rather than true open source: the weights are downloadable, but the licenses restrict certain uses. Qwen 3 and Mistral Small 3.1 use Apache 2.0, a permissive license that allows commercial use, modification, and redistribution.

- Running Mistral 7B locally takes about 4-5 GB of VRAM at 4-bit quantization, well within an 8 GB gaming GPU like the RTX 4060. Llama 4 Scout needs about 55 GB of VRAM at 4-bit, which calls for data-center GPUs or multi-GPU setups. Most consumer deployments run 7B-27B models on a single card such as the 24 GB RTX 4090.

- The performance gap between open and proprietary models narrowed sharply in 2025-2026. DeepSeek V3 and Llama 4 Maverick score 88-90% on MMLU, matching GPT-4o. The main remaining advantages of proprietary models are multimodal consistency, agentic tool use reliability, and more thorough safety alignment.

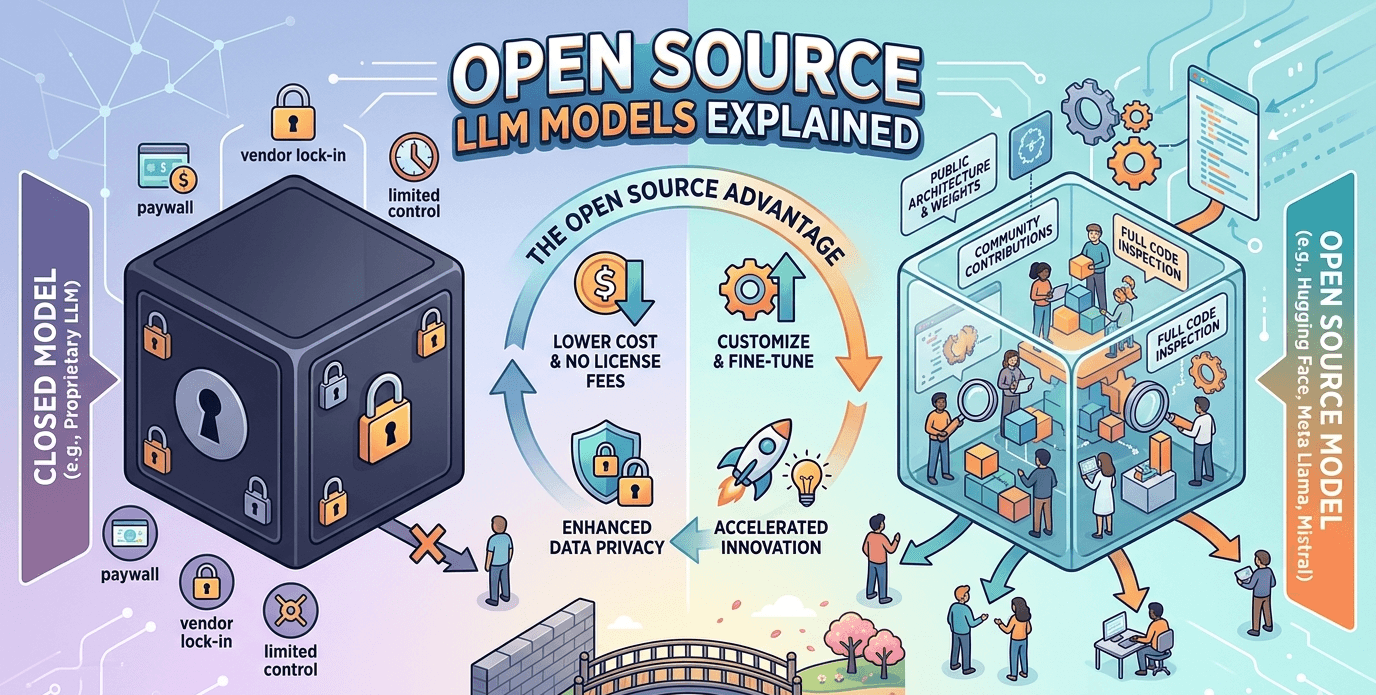

Open source LLMs are large language models whose weights are publicly released, letting anyone download, run, fine-tune, and deploy them without paying per-query API fees. As of May 2026, the leading examples are Meta's Llama 4 family, Google's Gemma 3, Mistral Small 3.1, DeepSeek V3, Alibaba's Qwen 3, and Microsoft's Phi-4.

One distinction matters. Most popular "open source" LLMs are more precisely open-weight models: the weights are downloadable, but the license restricts certain commercial uses or redistribution of fine-tuned variants. Truly open-source models, released under permissive licenses such as Apache 2.0 or MIT, are less common. Qwen 3 and Mistral Small 3.1 qualify. Llama 4 and Gemma 3 do not, even though both are freely downloadable and commercially usable under their respective custom licenses.

The performance gap between open and proprietary models has largely closed at the text-only benchmark level. DeepSeek V3 and Llama 4 Maverick both score 88-90% on MMLU, matching GPT-4o. For teams in healthcare, legal, or financial services where sending data to a third-party API raises compliance issues, the open-weight models available today are a viable production choice.

This guide covers the best open source and open-weight LLMs in 2026, their hardware requirements at different quantization levels, licensing terms for commercial use, and which professions are adopting self-hosted models most aggressively.

In This Article

- What Are Open Source LLMs? The Open-Weight Distinction

- Open Source vs Proprietary LLMs: When Each Makes Sense

- The Best Open Source LLMs in 2026: Full Comparison Table

- How Much GPU Do You Actually Need to Run Open Source LLMs?

- Which Open Source LLMs Allow Commercial Use? The Full Licensing Guide

- Who Uses Open Source LLMs? Use Cases Across Industries and Professions

- Where Proprietary Models Still Win: Limitations of Open Source LLMs

What Are Open Source LLMs? The Open-Weight Distinction

An open source LLM is a large language model whose weights, the billions of numerical parameters learned during training, are made publicly available for download, use, and modification. The practical difference from a proprietary model like GPT-4o or Claude 3.7 is access: no one can download GPT-4o's weights or run it on their own hardware. With an open source or open-weight model, you can.

The terminology split matters for licensing. The Open Source Initiative defines open source software as code carrying a license that permits use, modification, and redistribution with minimal restrictions. Most prominent AI models described as "open source" do not fully meet this standard. Meta's Llama 4, Google's Gemma 3, and DeepSeek's V3 are open-weight models: anyone can download and run the weights, but licenses restrict specific uses or redistribution of modified versions.

The community uses three practical categories:

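- Fully open source (permissive license): weights released under an OSI-approved permissive license such as Apache 2.0 or MIT. Examples: Qwen 3 (Alibaba), Mistral Small 3.1 (Mistral AI). No restrictions on commercial use or redistribution beyond the license's attribution terms. Even these releases generally do not publish their full training data, so they still fall short of the strictest definitions of open-source AI.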

- Open-weight: weights downloadable but license imposes conditions on redistribution, commercial use thresholds, or use for training competing models. Examples: Llama 4 (Meta custom license), Gemma 3 (Google Gemma license), DeepSeek V3. These are still free to use commercially within the license terms.

- Research only: weights available but commercial use requires separate agreements. Less common among major 2026 models.

Dense vs Mixture-of-Experts Architecture

The other major distinction in 2026's open-weight landscape is architecture. Dense models activate all parameters for every token: Llama 3.3 70B, Gemma 3 27B, and Qwen 3 72B are all dense. Mixture-of-experts (MoE) models activate only a fraction of their total parameters per token. Llama 4 Scout (109B total, 17B active) and DeepSeek V3 (671B total, 37B active) are MoE models.

The practical implication: MoE models achieve benchmark performance comparable to much larger dense models at lower inference compute cost, because only a fraction of parameters are computed per forward pass. They still require holding the full weight file in VRAM, however, which means MoE models carry high memory requirements despite their lower active parameter count. For a deeper explanation of how large language models are built and trained, see our guide to what large language models are and how they work.
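
The memory-versus-compute split can be made concrete with two back-of-the-envelope formulas. The sketch below is illustrative only: it assumes 16-bit weights and the common rule of thumb of roughly 2 FLOPs per active parameter per generated token, ignores attention and KV-cache costs, and uses the parameter counts quoted in this guide.

```python
# Rough MoE vs dense comparison: memory follows TOTAL parameters,
# per-token compute follows ACTIVE parameters.
# Assumption: ~2 FLOPs per active parameter per generated token (rule of thumb);
# attention and KV-cache costs are ignored.

def weight_memory_gb(total_params: float, bits_per_weight: float) -> float:
    """Memory needed just to hold the weights, in decimal gigabytes."""
    return total_params * bits_per_weight / 8 / 1e9


def gflops_per_token(active_params: float) -> float:
    """Approximate forward-pass compute per generated token, in GFLOPs."""
    return 2 * active_params / 1e9


models = {
    # name: (total params, active params), figures as quoted in this guide
    "Llama 3.3 70B (dense)": (70e9, 70e9),
    "Llama 4 Scout (MoE)": (109e9, 17e9),
    "DeepSeek V3 (MoE)": (671e9, 37e9),
}

for name, (total, active) in models.items():
    print(f"{name:24s} weights@16-bit: {weight_memory_gb(total, 16):7.1f} GB  "
          f"compute: ~{gflops_per_token(active):5.0f} GFLOPs/token")
```

Both MoE rows need more weight memory than the dense 70B model yet less compute per token, which is the trade-off described above.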

Open Source vs Proprietary LLMs: When Each Makes Sense

Open source and proprietary LLMs are not substitutes in every use case. The right choice depends on data privacy requirements, token volume economics, and how much model customization a team needs.

| Factor | Open Source / Open-Weight | Proprietary (GPT-4o, Claude 3.7) |
|---|---|---|
| Cost | GPU hardware upfront, near-zero per-query ongoing | Pay per million tokens ($2-15 per million output tokens) |
| Data privacy | All data stays on your servers | Data sent to third-party API |
| Customization | Full fine-tuning on your data possible | Limited via system prompts or vendor fine-tune programs |
| Performance (2026) | Top models match GPT-4o on MMLU and coding benchmarks | Still ahead on multimodal, complex tool use, alignment |
| Updates | Community releases, some lag behind frontier | Continuous API improvements, no version management |
| Support | Community forums, no guaranteed SLA | Commercial SLA available |
| Compliance | On-prem deployment for HIPAA, GDPR, SOC2 | Depends on vendor DPA and data residency options |
| Setup complexity | High: GPU procurement, serving infrastructure | Low: API key and HTTP request |

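The economic case for open source shifts at scale. At $10 per million output tokens, 10 million output tokens per day costs about $3,000 per month in API fees; it takes roughly 330 million output tokens per day to reach $100,000 per month. DeepSeek V3 needs several 80 GB GPUs even at 4-bit quantization, so a realistic server is an eight-GPU H100 node, roughly $200,000-280,000 at the per-GPU prices listed later in this guide. At the $100,000-per-month volume, that hardware delivers comparable benchmark quality and pays for itself within a few months, making self-hosting significantly cheaper for high-throughput deployments.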
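
The API side of that comparison is linear in volume, which makes it easy to check. A minimal sketch, assuming a flat price per million output tokens and a 30-day month, and ignoring input-token charges (the function names are illustrative):

```python
# Monthly API spend as a function of output-token volume.
# Assumptions: flat price per million output tokens, 30-day month,
# input-token charges ignored.

def monthly_api_cost(output_tokens_per_day: float, usd_per_million: float,
                     days_per_month: int = 30) -> float:
    """Monthly output-token bill in USD."""
    return output_tokens_per_day / 1_000_000 * usd_per_million * days_per_month


def daily_tokens_for_monthly_bill(monthly_usd: float, usd_per_million: float,
                                  days_per_month: int = 30) -> float:
    """Daily output-token volume that produces a given monthly bill."""
    return monthly_usd / days_per_month / usd_per_million * 1_000_000


for volume in (10e6, 100e6, 330e6):
    print(f"{volume / 1e6:>5.0f}M tokens/day -> ${monthly_api_cost(volume, 10):>9,.0f}/month")

# Daily volume needed to reach $100,000/month at $10 per million output tokens
print(f"{daily_tokens_for_monthly_bill(100_000, 10) / 1e6:.0f}M tokens/day")
```

At $10 per million tokens, every additional 1 million tokens per day adds $300 to the monthly bill, so the break-even point against owned hardware depends almost entirely on sustained volume.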

"Open source AI will be the leading open-source software ecosystem in the world." (Mark Zuckerberg, Meta CEO, 2024)

Mistral AI's co-founder Guillaume Lample has framed open-weight releases as the mechanism for "AI sovereignty," the ability for companies and governments to run AI on infrastructure entirely within a legal jurisdiction without dependency on US-based API providers. That argument resonates particularly in the EU, where GDPR restrictions on transferring personal data outside the EU create legal risk for certain cloud API workflows.

The Best Open Source LLMs in 2026: Full Comparison Table

The following models represent the most capable and widely used open-weight LLMs as of May 2026. MMLU scores are approximate ranges and vary by evaluation protocol. All models listed allow commercial use within their respective license terms.

| Model | Developer | Architecture | Params (total / active) | Context window | License | MMLU |
|---|---|---|---|---|---|---|
| Llama 4 Maverick | Meta | MoE | 400B / 17B | 1M tokens | Meta Llama | 88-90% |
| DeepSeek V3 | DeepSeek | MoE | 671B / 37B | 128K tokens | Open-weight | 88-90% |
| Qwen 3 72B | Alibaba | Dense | 72B | Long context | Apache 2.0 | 86-89% |
| Llama 4 Scout | Meta | MoE | 109B / 17B | Up to 10M tokens | Meta Llama | 86-88% |
| Llama 3.3 70B | Meta | Dense | 70B | 128K tokens | Meta Llama | 86-88% |
| Phi-4 | Microsoft | Dense | 14B | 16K tokens | MIT-style | 84-87% |
| Gemma 3 27B | Google DeepMind | Dense | 27B | 128K tokens | Gemma license | 84-86% |
| Mistral Small 3.1 | Mistral AI | Dense | 24B | 128K tokens | Apache 2.0 | 82-85% |

Model Profiles

Llama 4 Scout and Maverick (Meta, April 2025)

Meta's Llama 4 generation introduced the first natively multimodal and mixture-of-experts Llama models. Scout (109B total, 17B active) targets long-context tasks, with a context window reaching 10 million tokens in certain configurations; Meta sized it to fit on a single H100 80 GB GPU with 4-bit quantization. Maverick (400B total, 17B active) is at the frontier of open-weight performance. Both require significant VRAM even at 4-bit quantization, putting them in the data-center class rather than consumer GPU territory. Both are available on Hugging Face and through managed open-model platforms like Featherless AI and Fireworks.

DeepSeek V3 (DeepSeek, 2024-2025)

DeepSeek V3 uses a 671B total parameter MoE architecture with only 37B active per token. This design achieves frontier-level coding and mathematics performance at lower inference compute than an equivalent dense model of similar benchmark quality. It scores 88-90% on MMLU and is one of the two strongest open-weight models available in 2026 alongside Llama 4 Maverick. The license permits commercial use with conditions. For a practical guide to running DeepSeek models locally, see our setup guide for running DeepSeek R1 locally with Ollama.

Qwen 3 72B (Alibaba, 2025)

Alibaba's Qwen 3 family uses Apache 2.0 licensing, making it one of the largest fully permissive-license LLM families in the 2026 open ecosystem. Teams that need unrestricted commercial use and redistribution rights without working through a custom license find Qwen 3 the simplest starting point. The family has strong multilingual coverage, including Chinese, Japanese, Arabic, and European languages. A vision variant, Qwen 3 VL, extends the model to image understanding.

Phi-4 (Microsoft, 2025)

At 14B dense parameters, Phi-4 scores 84-87% on MMLU, matching models twice its size. Microsoft trained it on carefully curated synthetic and educational data rather than raw web scrapes, which produces strong reasoning and coding performance at a scale that fits on a single RTX 4090 at 4-bit quantization. It is a practical choice for developers and small teams without dedicated GPU server infrastructure.

Gemma 3 27B (Google DeepMind, 2025)

Gemma 3 comes in 1B, 4B, 12B, and 27B variants. The 27B model fills a useful niche: at 4-bit quantization it needs 14-16 GB of VRAM, so it fits, with little room to spare, on a single RTX 4080 Super or similar 16 GB consumer card. Google emphasizes safety tooling and tight integration with Google Cloud as Gemma's differentiators. The 1B variant is small enough for phones and other edge hardware.

Mistral Small 3.1 (Mistral AI, 2025-2026)

Mistral Small 3.1 uses Apache 2.0 licensing, making it fully permissive. Mistral AI is headquartered in France and has consistently pushed EU data sovereignty as a positioning argument, which makes its open-weight models relevant for EU-based teams navigating GDPR and AI Act compliance. Mistral models have a track record of strong coding performance relative to their size.

How Much GPU Do You Actually Need to Run Open Source LLMs?

Hardware is where most guides leave you guessing. What you actually need depends on which model, what quantization level, and whether you are running single-user inference or a team-scale deployment.

VRAM Requirements by Model and Quantization

| Model | 4-bit (Q4) VRAM | 8-bit (Q8) VRAM | Practical GPU |
|---|---|---|---|
| Llama 4 Maverick | ~200 GB | ~400 GB | Multiple H100 80 GB only |
| DeepSeek V3 | ~335 GB | ~670 GB | 8x H100 80 GB node (Q4) |
| Llama 4 Scout | ~55 GB | ~110 GB | 80 GB data-center GPU or multi-GPU |
| Qwen 3 72B / Llama 3.3 70B | ~35-40 GB | ~70-80 GB | 2x RTX 4090 or A100 40 GB |
| Gemma 3 27B | ~14-16 GB | ~27-30 GB | Single RTX 4080 (16 GB) |
| Phi-4 14B | ~7-9 GB | ~14-16 GB | Single RTX 3080 (10 GB) |
| Mistral 7B | ~4-5 GB | ~7-8 GB | Single RTX 3060 (8-12 GB) |
| Phi-4 mini / Gemma 3 4B | ~2-4 GB | ~4-6 GB | Entry GPU or CPU run |

Quantization reduces the precision of the weights from 16-bit floating point to 8-bit or 4-bit values. A 4-bit quantized model needs roughly half the weight memory of an 8-bit model and about a quarter of a full 16-bit model. Quality loss at 4-bit is generally minor for most text tasks. Ollama pulls pre-quantized builds by default, llama.cpp runs pre-quantized GGUF files (or quantizes them with its own tooling), and vLLM serves pre-quantized checkpoints in formats such as AWQ and GPTQ.
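
The weight figures in the table follow a simple rule: bytes are roughly parameters times bits per weight divided by 8. A minimal sketch, assuming an illustrative 20% allowance for runtime buffers (an assumption, not a measured figure) and leaving out the KV cache, which grows with context length and batch size. Practical 4-bit formats also store scaling data, so real files run somewhat larger than the raw 4-bit figure.

```python
# Weight-memory estimate for a quantized model.
# 1 billion parameters at 8 bits = 1 GB (decimal), so GB = billions * bits / 8.

def weight_gb(params_billions: float, bits_per_weight: float) -> float:
    """Memory for the weights alone, in decimal gigabytes."""
    return params_billions * bits_per_weight / 8


def vram_estimate_gb(params_billions: float, bits_per_weight: float,
                     overhead: float = 0.20) -> float:
    """Weights plus an ASSUMED fractional overhead for runtime buffers (no KV cache)."""
    return weight_gb(params_billions, bits_per_weight) * (1 + overhead)


models = {"Mistral 7B": 7, "Phi-4": 14, "Gemma 3 27B": 27, "Llama 3.3 70B": 70}

print(f"{'Model':15s}{'16-bit':>9s}{'8-bit':>9s}{'4-bit':>9s}  (GB, weights only)")
for name, billions in models.items():
    row = "".join(f"{weight_gb(billions, bits):9.1f}" for bits in (16, 8, 4))
    print(f"{name:15s}{row}")

# With the assumed 20% overhead, Gemma 3 27B at 4-bit comes to about 16.2 GB.
print(f"Gemma 3 27B @ 4-bit incl. overhead: {vram_estimate_gb(27, 4):.1f} GB")
```

The overhead parameter is the knob to adjust once you measure real usage with your serving tool of choice.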

GPU Price Guide for Local LLM Deployment (2026)

| GPU | VRAM | Approx price (USD) | Best for |
|---|---|---|---|
| RTX 3060 12 GB | 12 GB | $200-350 used | Mistral 7B, Phi-4 mini |
| RTX 4060 Ti 16 GB | 16 GB | $380-500 new | Phi-4 14B, Gemma 3 12B |
| RTX 4080 Super | 16 GB | $900-1,100 new | Gemma 3 27B at Q4 |
| RTX 4090 | 24 GB | $1,500-2,200 new | Gemma 3 27B, Phi-4 comfortably |
| RTX 5090 | 32 GB | $2,000-3,000 new | Qwen 3 32B at Q4 |
| A100 80 GB | 80 GB | $8,000-15,000 | Llama 4 Scout with headroom |
| H100 80 GB | 80 GB | $25,000-35,000 | Production inference; large MoE models in multi-GPU nodes |

The Number Most Guides Don't Show

Running Llama 4 Scout at 4-bit quantization requires approximately 55 GB VRAM for weights alone. Add KV cache for a 32,000-token context at batch size 8, and total VRAM demand reaches 70-80 GB. That requires two A100 80 GB cards at a combined purchase cost of $16,000-30,000.
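
The KV-cache part of that total is what grows with context length and batch size. A minimal sketch of the standard estimate (one key and one value tensor per layer, per KV head, per token) follows. The layer count, KV-head count, and head dimension are hypothetical placeholders, not Llama 4 Scout's actual configuration; real values come from the model's config.json (fields such as num_hidden_layers and num_key_value_heads in Hugging Face configs), and architectures that use sliding-window attention or multi-head latent attention (used by DeepSeek V3) need less.

```python
def kv_cache_bytes(num_layers: int, num_kv_heads: int, head_dim: int,
                   context_tokens: int, batch_size: int,
                   bytes_per_value: int = 2) -> int:
    """KV-cache size for standard (grouped-query) attention.

    The leading 2 counts one key tensor and one value tensor per layer;
    bytes_per_value=2 corresponds to a 16-bit cache.
    """
    per_token = 2 * num_layers * num_kv_heads * head_dim * bytes_per_value
    return per_token * context_tokens * batch_size


# HYPOTHETICAL model config: placeholder values, not a specific released model.
size = kv_cache_bytes(num_layers=32, num_kv_heads=8, head_dim=128,
                      context_tokens=32_000, batch_size=8)
print(f"KV cache: {size / 2**30:.2f} GiB")  # 31.25 GiB for these placeholder values
```

Because the cache scales linearly with both context length and batch size, halving either one halves this term, which is often the cheapest way to fit a model onto a given GPU.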

The cloud alternative: renting a single H100 instance on Lambda Labs or CoreWeave costs roughly $2-3 per hour. At eight hours per day of active inference, that is $16-24 per day, or about $5,800-8,800 per year; running around the clock triples that to $48-72 per day, or about $17,500-26,300 per year. Owning the equivalent hardware costs $40,000-70,000, roughly $13,000-23,000 per year amortized over three years, which makes cloud rental more economical for low-to-medium volume teams. For high-throughput production deployments generating millions of tokens per day, owned hardware breaks even within 12-18 months.
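
The rent-versus-own comparison reduces to annual cost at a given utilization. A minimal sketch using the hourly rates and hardware prices quoted in this paragraph, assuming the ownership figure is a total spread evenly over three years; power, hosting, and staff time are not modeled separately, and the function names are illustrative.

```python
HOURLY_RATES_USD = (2.0, 3.0)      # cloud H100 rate range quoted above
PURCHASE_USD = (40_000, 70_000)    # ownership cost range quoted above
AMORTIZATION_YEARS = 3


def annual_rental_usd(usd_per_hour: float, hours_per_day: float,
                      days_per_year: int = 365) -> float:
    """Yearly cloud bill when the instance runs hours_per_day every day."""
    return usd_per_hour * hours_per_day * days_per_year


def annual_ownership_usd(purchase_usd: float, years: int = AMORTIZATION_YEARS) -> float:
    """Straight-line amortization of the purchase price."""
    return purchase_usd / years


own_low, own_high = (annual_ownership_usd(p) for p in PURCHASE_USD)
for hours in (8, 24):
    rent_low, rent_high = (annual_rental_usd(r, hours) for r in HOURLY_RATES_USD)
    print(f"{hours:2d} h/day: rent ${rent_low:,.0f}-{rent_high:,.0f}/yr vs "
          f"own ${own_low:,.0f}-{own_high:,.0f}/yr")
```

At eight hours a day, renting stays well below the amortized ownership cost; around the clock, the two ranges overlap, which is where owning starts to pay off.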

For teams using consumer hardware, the practical ceiling is around 27B dense models (Gemma 3 27B) or small-to-medium MoE models on a single RTX 4090. For a complete setup guide to running these models with a browser-based interface, see our guide to using Open WebUI with Ollama locally.

Which Open Source LLMs Allow Commercial Use? The Full Licensing Guide

"Free to download" and "free for commercial use" are not the same thing, and most guides skip over this. Every major open-weight model carries specific license conditions. Violating them in revenue-generating deployments creates legal exposure.

| Model family | License | Commercial use | Fine-tune and redistribute? | Key restriction |
|---|---|---|---|---|
| Qwen 3 (Alibaba) | Apache 2.0 | Yes, unrestricted | Yes | Attribution required |
| Mistral Small 3.1 | Apache 2.0 | Yes, unrestricted | Yes | Verify specific release; not all Mistral models use Apache 2.0 |
| Phi-4 mini (Microsoft) | MIT-style | Yes | Yes | Verify specific model card |
| Llama 3.x / Llama 4 (Meta) | Meta Llama custom | Yes, under 700M MAU | Yes, with attribution | Conditions on using Llama outputs to build other models (check the license for your release); "Built with Llama" disclosure required |
| Gemma 3 (Google) | Gemma license | Yes, compliant uses | Yes, with conditions | Cannot use Gemma to build competing AI systems |
| DeepSeek V3 (DeepSeek) | DeepSeek open-weight | Yes, with conditions | Yes | Verify repository license; terms vary by release |
| Falcon 3 (Technology Innovation Institute) | Falcon license | Yes | Yes | Check specific variant |

Practical Summary

For startups or individual developers building commercial products, start with Qwen 3 or Mistral Small 3.1 under Apache 2.0. No usage caps, no attribution beyond standard open-source norms, no restrictions on fine-tuning and redistribution.

For enterprises that want maximum performance and are comfortable with a custom license, Llama 4 (Meta Llama license) permits commercial use for products with under 700 million monthly active users, covering virtually every business application except platform-scale consumer products.

For EU-based teams where data sovereignty under GDPR and the EU AI Act matters, Mistral AI's French headquarters and its Apache 2.0 licensed models make it a natural choice, although with self-hosted weights, data residency depends on where you run the model rather than where its developer is based. Arthur Mensch, Mistral AI's CEO, has described open-weight releases as enabling "AI sovereignty and customization that closed APIs cannot provide," positioning local deployment as a compliance strategy as much as a cost one.

"Openness increases scrutiny and therefore trust. It lowers barriers for smaller players and enables customization for specific use cases that closed models cannot support." (Arthur Mensch, CEO Mistral AI, 2025)

Who Uses Open Source LLMs? Use Cases Across Industries and Professions

Teams that self-host open-weight models rather than use a commercial API almost always have the same reason: data that cannot leave a controlled environment. The industries moving fastest on this in 2025 and 2026 share strict data handling requirements, high token volumes, or both.

Software Developers and Engineering Teams

Developers are the largest user group for local open-weight LLMs. The primary driver is cost at volume. A team running AI-assisted code review across a large codebase can generate millions of tokens per day, and automated pipelines push that much higher. At commercial API rates of $5-15 per million output tokens, 10 million output tokens per day costs $1,500-4,500 per month, and 1 billion per day costs $150,000-450,000 per month. Running Phi-4 or DeepSeek V3 on owned GPU infrastructure reduces this to electricity and hardware amortization, typically 80-90% cheaper at scale.

Code completion and code review are tasks where open-weight models perform particularly well relative to their size. Phi-4 and Qwen 3 score competitively on HumanEval and similar coding benchmarks against models two to three times their parameter count. Tools like Ollama and Continue (a VS Code extension) integrate directly with local models for IDE-based assistance. For setup instructions, see our guide to running Ollama locally on your machine.
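
For scripts outside the IDE, Ollama also exposes a local HTTP API. A minimal sketch using only the Python standard library, assuming Ollama is running on its default port 11434 and that a model tagged phi4 has been pulled (substitute whatever model you run); the helper names are illustrative, not part of any Ollama client library. Setting "stream" to false makes the server return a single JSON object whose "response" field holds the generated text.

```python
import json
import urllib.request

# Ollama's default local endpoint for single-prompt generation.
OLLAMA_GENERATE_URL = "http://localhost:11434/api/generate"


def build_generate_request(model: str, prompt: str,
                           url: str = OLLAMA_GENERATE_URL) -> urllib.request.Request:
    """Build a non-streaming POST request for Ollama's /api/generate endpoint."""
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        url,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def generate(model: str, prompt: str, timeout_s: float = 300.0) -> str:
    """Send the prompt to a running Ollama server and return the generated text."""
    request = build_generate_request(model, prompt)
    with urllib.request.urlopen(request, timeout=timeout_s) as response:
        return json.load(response)["response"]


# Example (requires a running Ollama server with the model pulled):
# print(generate("phi4", "Review this function for bugs:\n\ndef add(a, b):\n    return a - b"))
```

Keeping request construction separate from the network call makes the payload easy to inspect or test without a running server.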

Healthcare Professionals and Medical Organizations

Healthcare has the most direct argument for on-premise LLM deployment. Under HIPAA in the US, sending protected health information to a third-party API vendor generally requires a Business Associate Agreement (BAA) with that vendor; under GDPR in the EU, health data is a special category of personal data that needs a specific legal basis and a processing agreement with the vendor. OpenAI, Anthropic, and Google all offer enterprise agreements with HIPAA BAAs, but deploying an open-weight model on in-house infrastructure removes the outside vendor from the data flow entirely.

Common healthcare applications include clinical documentation assistance, medical literature summarization, discharge summary drafting, and insurance prior authorization language generation. Gemma 3 and Llama 3.3 70B are frequently deployed in healthcare settings due to their safety fine-tuning and strong performance on clinical language tasks. Radiologists, nurses, and hospital administrators using AI workflows that stay within institutional infrastructure represent one of the fastest-growing adoption segments for open-weight models.

Legal Professionals

Law firms and in-house legal teams face constraints similar to healthcare. Client confidentiality, attorney-client privilege, and data sovereignty rules make sending case documents or contract drafts to a commercial API legally problematic in many jurisdictions. UK and EU bar associations have issued guidance cautioning against uploading client information to non-approved AI services.

Self-hosted LLMs behind a firm's firewall address this directly. Common legal applications include contract review, clause extraction, due diligence document analysis, and first-draft research memo generation. Lawyers drafting briefs, paralegals processing discovery documents, and compliance officers reviewing policy language all benefit from AI assistance that does not require sharing client information with a third party. The accuracy requirements in legal work also reward fine-tuning on firm-specific precedents and style guides, which is more practical with open-weight models than with API-only services.

Financial Services and Banking

Banks, asset managers, and insurance companies operate under regulations that closely govern data residency and third-party data sharing. In the EU, outsourcing rules and the Digital Operational Resilience Act (DORA) impose strict oversight requirements on third-party technology providers such as cloud services. US banking regulators' model risk management guidance (SR 11-7) expects firms to document, validate, and govern the models they use, including models supplied by vendors.

Open-weight models deployed on-premise enable financial applications including earnings call analysis, financial report summarization, regulatory filing review, and internal knowledge base queries without data leaving institutional infrastructure. Financial analysts using AI to process earnings transcripts, compliance teams reviewing regulatory filings, and risk officers analyzing portfolio documentation all represent active adoption segments. The cost argument also applies: high-volume financial applications like transaction monitoring or customer service routing generate token volumes where self-hosting becomes economically compelling within 12-18 months.

Researchers and Academics

Academic researchers use open-weight models for a reason distinct from the others: the ability to inspect, modify, and experiment with the model itself. Fine-tuning Llama 3 70B on a specific scientific domain, such as genomics, clinical trials, or materials science, to produce a domain-adapted research assistant is not possible in the same way with closed API models, which at most offer vendor-managed fine-tuning without access to the resulting weights. The open-weight model's trainable parameters are the research asset.

Major university AI labs and national laboratories use open-weight models as the foundation for domain-specific research tools. Academic publishing depends on being able to reproduce fine-tuning exactly, inspect model internals, and publish methodology alongside results, and proprietary black-box APIs make the first two impossible. Qwen 3 (Apache 2.0) and Gemma 3 see particularly strong adoption in research settings, helped by their accessible licensing and established community fine-tuning toolchains.

Small Businesses and Independent Teams

For small businesses, the economics shift depending on usage volume. A single developer using ChatGPT Plus at $20/month rarely justifies GPU hardware investment. A team of ten using Claude Pro at $20 each per month pays $200/month, which compares against roughly $380-500 for an RTX 4060 Ti 16 GB as a one-time hardware purchase that runs Phi-4 or Mistral 7B indefinitely.

For higher-volume applications, the breakeven arrives sooner. A small e-commerce business using AI for product description generation, customer service, and email drafting can generate 50 million output tokens per month. At $5 per million tokens, that costs $250/month or $3,000 per year in API fees alone. A single RTX 4090 running Gemma 3 27B or Phi-4 handles that volume for a few hundred dollars per year in electricity after the initial hardware purchase. For a practical guide to comparing local model performance against API models for specific tasks, see our comparison guide on choosing the best local LLM models for your hardware.

Where Proprietary Models Still Win: Limitations of Open Source LLMs

Open-weight LLMs match proprietary models on key text benchmarks, but the gap does not close evenly across all tasks. Several areas still favor GPT-4o and Claude.

Agentic Task Reliability

GPT-4o and Claude 3.7 Sonnet outperform open-weight models on complex agentic workflows requiring multi-step reasoning, accurate tool calling across multiple systems, and consistent instruction following over long chains of actions. The gap on isolated text benchmarks like MMLU has narrowed, but in production agentic systems where each step compounds the previous one, proprietary frontier models produce fewer cascading errors. For teams building autonomous agents or workflows where per-step reliability matters, the performance gap is real enough to justify the cost.
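
The compounding effect is easy to quantify if each step is treated as independent: the chance that a whole chain succeeds is the per-step success rate raised to the number of steps. The rates below are illustrative values, not measurements of any particular model, and real agent steps are rarely fully independent.

```python
def chain_success(per_step_success: float, steps: int) -> float:
    """Probability that all steps succeed, assuming independent steps."""
    if not 0.0 <= per_step_success <= 1.0:
        raise ValueError("per_step_success must be between 0 and 1")
    return per_step_success ** steps


# Illustrative per-step success rates only.
for p in (0.95, 0.99):
    print(f"per-step {p:.0%}: 10 steps -> {chain_success(p, 10):.1%}, "
          f"20 steps -> {chain_success(p, 20):.1%}")
```

A few points of per-step reliability become a large gap over a long chain: 95% per step leaves roughly a 60% chance of finishing 10 steps cleanly, while 99% per step leaves roughly 90%.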

Safety and Alignment

Proprietary models go through extensive RLHF, red-teaming, and safety evaluation before release. Open-weight models ship with safety fine-tuning too, but it is generally less extensive, and because the weights are public, anyone can fine-tune those safeguards away. In practice this shows up in how models handle sensitive topics, whether refusal instructions hold across edge cases, and how often models produce unintended outputs. For consumer-facing applications where guardrails matter, proprietary models are lower risk.

Setup and Maintenance Complexity

Running an open-weight model in production requires a GPU server, a serving framework such as vLLM, Ollama, or llama.cpp, monitoring infrastructure, and ongoing maintenance as new model versions are released. This is a real engineering burden. A commercial API is three lines of code and a billing relationship. For small teams without dedicated infrastructure engineers, that difference in operational complexity has real costs in time and reliability.

Update Velocity

Proprietary models receive continuous improvements through a single API endpoint. Teams using GPT-4o or Claude do not host or manage model weights; improvements arrive through vendor-managed model versions, though teams still track deprecation schedules. Open-weight models require intentional upgrades: downloading new weights, testing for regression, and redeploying. The labs training proprietary frontier models are also better resourced and typically improve their models faster than the open-weight ecosystem can match at the frontier edge.

Multimodal Capabilities

Vision, audio, and video understanding in open-weight models remain behind proprietary equivalents as of May 2026. Llama 4 Scout and Maverick accept image input, and Qwen 3 VL extends Qwen to image understanding, but for applications requiring complex image analysis, high-accuracy audio transcription, or video understanding, GPT-4o and Gemini 1.5 Pro maintain a meaningful reliability lead.

"The strongest open-weight models in 2026 are approaching frontier proprietary models on several academic benchmarks, but GPT-4o and Claude 3.7 Sonnet generally retain an edge in reliability, multimodal performance, and agentic workflows." (Fireworks AI evaluation review, 2026)

For teams choosing between open-weight and proprietary models, the practical framework is: self-host open-weight models for high-volume text tasks, data-sensitive workflows, and any use case where you need to fine-tune on proprietary data. Use proprietary APIs for low-volume or unpredictable-volume tasks, complex agentic workflows, multimodal applications, and consumer-facing products where guardrail reliability matters most.

Frequently Asked Questions

What is the best open source LLM in 2026?

The best open source LLM in 2026 depends on your hardware and use case. For maximum performance: Llama 4 Maverick (Meta) and DeepSeek V3 score 88-90% on MMLU, matching GPT-4o, but require data-center class hardware. For the best performance on a single 16 GB consumer GPU: Gemma 3 27B scores 84-86% on MMLU and fits on a single RTX 4080 at 4-bit quantization, though with little VRAM to spare for long contexts. For maximum performance on modest hardware: Phi-4 (14B) achieves 84-87% MMLU on an RTX 4070 or equivalent 12 GB GPU. For teams that need Apache 2.0 licensing with no commercial restrictions: Qwen 3 72B is the strongest fully permissive option at 86-89% MMLU.

Can I run an open source LLM on my laptop?

Yes, if your laptop has sufficient RAM or a discrete GPU with VRAM. Small models in the 1B-7B range, including Phi-4 mini and Mistral 7B, run on gaming laptops with 8-12 GB VRAM at 4-bit quantization using Ollama. Apple Silicon MacBooks use unified memory architecture, meaning RAM functions as GPU memory. A MacBook Pro M3 Max with 48 GB unified memory can run Llama 3.3 70B at reduced quantization. Windows laptops with discrete NVIDIA GPUs follow the same VRAM rules as desktops: an RTX 4060 laptop GPU with 8 GB handles 7B models; a 16 GB laptop GPU handles up to 14-27B models at 4-bit.

Is Llama 4 truly open source?

No, not under the OSI definition. Llama 4 is released under a custom Meta Llama license that permits commercial use but includes restrictions: products with over 700 million monthly active users require a separate license agreement with Meta, redistribution requires including the original license and a "Built with Llama" notice, and the license attaches conditions to using Llama outputs to build other AI models. These conditions make Llama 4 an open-weight model. Meta uses the term "open source" in its marketing, which reflects a broader AI industry usage of the term rather than strict OSI compliance. Truly open-source LLMs under Apache 2.0 with no such conditions include Qwen 3 and Mistral Small 3.1.

Which open source LLMs allow commercial use for free?

Models under Apache 2.0 or MIT licenses allow unrestricted commercial use without fees or usage caps. The most capable examples in 2026 are Qwen 3 (72B and smaller variants) by Alibaba under Apache 2.0, Mistral Small 3.1 by Mistral AI under Apache 2.0, and Phi-4 mini by Microsoft under a MIT-style license. Llama 4, Gemma 3, and DeepSeek V3 also allow commercial use but under custom open-weight licenses with specific conditions. Always verify the exact license file in the model card before commercial deployment, because licensing terms can differ between model variants within the same family.

How do open source LLMs compare to ChatGPT and Claude?

The top open-weight models in 2026 have closed much of the benchmark gap. DeepSeek V3 and Llama 4 Maverick score 88-90% on MMLU, comparable to GPT-4o. Proprietary models still hold advantages in agentic task reliability (complex multi-step tool use with fewer errors), multimodal understanding (vision, audio, video), safety alignment consistency, and instruction following in edge cases. For isolated text tasks including summarization, coding, translation, and question answering, open-weight models at the 70B+ scale are competitive in direct evaluations. The performance premium of proprietary models is most visible in complex agentic workflows and applications requiring robust multimodal reasoning.

What is the difference between open source and open weight LLMs?

Open source LLMs, in the license sense used in this guide, release model weights under OSI-approved permissive licenses like Apache 2.0 or MIT, allowing use, modification, and redistribution with only attribution-style conditions. Qwen 3 and Mistral Small 3.1 meet this standard, although, like most LLM releases, they do not publish their full training data. Open-weight LLMs release model weights for download but under custom licenses that restrict specific uses, such as building other AI models, commercial use above a threshold, or redistribution without the original license. Llama 4 (Meta Llama license) and Gemma 3 (Gemma license) are open-weight. Both are free to download and commercially usable, but their licenses carry conditions that Apache 2.0 and MIT do not.

How much does it cost to run an open source LLM locally?

The upfront cost is GPU hardware. An RTX 4060 Ti 16 GB suitable for Phi-4 and Mistral 7B costs $380-500. An RTX 4090 24 GB for Gemma 3 27B costs $1,500-2,200. An A100 80 GB for Llama 4 Scout runs $8,000-15,000. Ongoing costs are electricity: an RTX 4090 draws approximately 450W under load, costing roughly $0.05-0.07 per hour at typical US electricity rates, or about $200-300 per year running 12 hours per day. Compare this to OpenAI or Anthropic API fees: a team generating 50 million output tokens per month at $5 per million tokens pays $250/month or $3,000 per year, so at that volume the RTX 4090 breaks even in roughly 6-10 months.
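
The electricity and break-even arithmetic can be sketched as follows, assuming an electricity price of $0.13 per kWh (within the range implied by the hourly figure above) and that the GPU replaces the full API bill; the function names are illustrative.

```python
import math


def electricity_usd_per_year(watts: float, hours_per_day: float,
                             usd_per_kwh: float, days: int = 365) -> float:
    """Annual electricity cost for a device drawing `watts` while running."""
    kwh_per_year = watts / 1000 * hours_per_day * days
    return kwh_per_year * usd_per_kwh


def breakeven_months(hardware_usd: float, api_usd_per_month: float,
                     power_usd_per_month: float) -> float:
    """Months until avoided API fees cover the hardware; inf if there is no saving."""
    monthly_saving = api_usd_per_month - power_usd_per_month
    if monthly_saving <= 0:
        return math.inf
    return hardware_usd / monthly_saving


# ASSUMED electricity price of $0.13/kWh; 450 W for 12 h/day as quoted above.
power_year = electricity_usd_per_year(watts=450, hours_per_day=12, usd_per_kwh=0.13)
api_month = 50 * 5.0  # 50M output tokens/month at $5 per million
for price in (1_500, 2_200):  # RTX 4090 price range quoted above
    months = breakeven_months(price, api_month, power_year / 12)
    print(f"${price:,} GPU: power ${power_year:,.0f}/yr, break-even in {months:.1f} months")
```

Lower token volumes stretch the break-even period, and once monthly electricity exceeds the avoided API fees the function returns infinity, meaning the hardware never pays for itself.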

Can open source LLMs be used in healthcare, legal, or finance?

Yes, and this is one of the primary reasons enterprises adopt them over commercial APIs. Healthcare organizations can deploy open-weight models on-premise for clinical documentation, patient data summarization, and prior authorization assistance without sending protected health information to a third-party API, addressing HIPAA compliance concerns. Law firms use self-hosted LLMs for contract review and document analysis while maintaining attorney-client confidentiality. Financial institutions use them for regulatory filings and internal research without sending data to an outside vendor, which simplifies data residency and third-party risk obligations. Gemma 3, Llama 3.3 70B, and Qwen 3 are commonly used in enterprise deployments across these sectors.
